From Sequence to Phenotype: A Comprehensive Guide to Machine Learning in Plant Multi-Omics Data Analysis

This article provides a systematic guide for researchers and industry professionals on applying machine learning (ML) to integrate and interpret complex plant multi-omics data.


Abstract

This article provides a systematic guide for researchers and industry professionals on applying machine learning (ML) to integrate and interpret complex plant multi-omics data. It covers foundational principles, practical methodologies for predicting traits and gene functions, strategies for troubleshooting common computational challenges, and frameworks for robust model validation. By synthesizing current approaches from genomics, transcriptomics, proteomics, and metabolomics, the article equips scientists with the tools to uncover novel biological insights, accelerate crop improvement, and advance plant-based drug discovery.

Demystifying Plant Multi-Omics: A Primer on Data Layers and ML Readiness

Application Notes on Multi-Omics in Plant Sciences

Multi-omics approaches provide an integrative framework for understanding the complex molecular mechanisms underlying plant phenotypes. In the context of machine learning for plant multi-omics data analysis, these layers offer complementary data types that, when fused, can predict traits, decipher stress responses, and accelerate breeding programs.

Table 1: Core Characteristics of Plant Omics Technologies

| Omics Layer | Measured Molecule | Key Technologies (2023-2024) | Approx. Coverage/Throughput (Model Plant) | Primary Data Output | Key Challenge for ML Integration |

|---|---|---|---|---|---|

| Genomics | DNA | Whole Genome Sequencing (PacBio HiFi, ONT), Genotyping-by-Sequencing (GBS) | 1-100x genome coverage; 100-10k samples/study | Variants (SNPs, Indels), Structural Variants | High-dimensional, sparse data |

| Transcriptomics | RNA (mRNA, ncRNA) | RNA-Seq (Illumina), Single-Cell RNA-Seq, Iso-Seq | 20-50 million reads/sample; 10-100k genes detected | Gene/isoform expression counts (FPKM, TPM) | Batch effects, normalization |

| Proteomics | Proteins & Peptides | LC-MS/MS (Tandem Mass Spectrometry), DIA, TMT Labeling | Identifies 5,000-15,000 proteins/plant tissue sample | Protein abundance, PTM identification | Dynamic range, missing values |

| Metabolomics | Small Molecules (<1500 Da) | GC-MS, LC-MS, NMR | Detects 100s (targeted) to 1000s (untargeted) of metabolites | Peak intensities, metabolite concentrations | Compound annotation, noise |

Table 2: Recent Multi-Omics Studies in Plants (2022-2024)

| Study Focus (Plant) | Omics Layers Integrated | ML/AI Method Used | Primary Objective | Key Outcome |

|---|---|---|---|---|

| Drought Resilience (Maize) | Genomics, Transcriptomics, Metabolomics | Random Forest, Graph Neural Networks | Predict biomass under drought | Achieved 89% prediction accuracy of tolerant lines |

| Nutrient Use Efficiency (Rice) | Genomics, Proteomics, Metabolomics | Bayesian Networks, XGBoost | Identify markers for nitrogen uptake | Discovered 3 key protein-metabolite modules |

| Disease Resistance (Tomato) | Transcriptomics, Proteomics | Deep Learning (Autoencoders), SVM | Classify resistant vs. susceptible phenotypes | Model identified 20 candidate resistance biomarkers |

Detailed Experimental Protocols

Protocol: Integrated RNA-Seq and LC-MS/MS for Abiotic Stress Response

Title: Simultaneous Transcriptome and Proteome Profiling from the Same Plant Tissue Sample.

Objective: To correlate gene expression changes with protein abundance changes in Arabidopsis thaliana leaves under salt stress for ML-based network inference.

Materials:

- Arabidopsis thaliana (Col-0) plants, 4-week-old.

- Liquid Nitrogen.

- TRIzol Reagent.

- RIPA Lysis Buffer with protease/phosphatase inhibitors.

- DNase I (RNase-free).

- MS-grade Trypsin.

- C18 Solid-Phase Extraction columns.

- Illumina Stranded mRNA Prep kit.

- High-pH reverse-phase peptide fractionation kit.

Procedure:

Part A: Concurrent Biomolecule Extraction (Modified TRIzol Method)

- Sample Harvest: Flash-freeze leaf tissue (100 mg) in liquid N₂. Grind to fine powder.

- Phase Separation: Add 1 mL TRIzol to powder, vortex, incubate 5 min (RT). Add 0.2 mL chloroform, shake vigorously, incubate 3 min.

- Centrifuge: 12,000 × g, 15 min, 4°C. Three phases form.

- RNA Recovery (Upper Aqueous Phase): Transfer upper aqueous phase to new tube. Precipitate RNA with 0.5 mL isopropanol. Wash pellet with 75% ethanol. Resuspend in RNase-free water. Treat with DNase I. Assess integrity (RIN > 8.0).

- DNA Removal (Interphase/Phenol Phase): Remove remaining aqueous phase. Add 0.3 mL 100% ethanol to interphase/phenol phase, mix by inversion, incubate 3 min (RT).

- Centrifuge: 2,000 × g, 5 min, 4°C to pellet DNA. Transfer the phenol-ethanol supernatant, which contains the protein, to a new tube.

- Protein Precipitation: Add 1.5 mL isopropanol to the phenol-ethanol supernatant, incubate 10 min (RT), and centrifuge at 12,000 × g, 10 min, 4°C. Wash the protein pellet thrice with 1 mL of 0.3 M guanidine HCl in 95% ethanol. Vortex and incubate 20 min per wash (RT). Final wash with 100% ethanol.

- Protein Solubilization: Air-dry pellet 10 min. Solubilize in 200 µL RIPA buffer with sonication (3 pulses of 10 sec). Centrifuge at 12,000 × g, 10 min, 4°C. Transfer supernatant (total protein) to new tube. Quantify by BCA assay.

Part B: Downstream Analysis

- Transcriptomics: Construct libraries from 1 µg total RNA using Illumina Stranded mRNA Prep. Sequence on NovaSeq 6000 (2x150 bp). Align reads to TAIR10 genome using HISAT2. Quantify expression with StringTie.

- Proteomics: Digest 50 µg protein with trypsin (1:50 w/w, 37°C, overnight). Desalt peptides using C18 columns. Fractionate using high-pH reverse-phase chromatography (8 fractions). Analyze each fraction by LC-MS/MS on a Q Exactive HF in data-dependent acquisition (DDA) mode. Search spectra against Araport11 database using MaxQuant.

Part C: Data Integration for ML

- Data Matrices: Create a gene expression matrix (genes × samples, TPM values) and a protein abundance matrix (proteins × samples, LFQ intensities).

- Common Identifier Mapping: Map proteins to their corresponding encoding genes using Araport11 annotation.

- Concatenated Feature Matrix: For shared gene-protein pairs, create a combined matrix where each sample is represented by both transcript and protein abundance features.

- ML Input: Use this matrix as input for supervised (e.g., Elastic Net regression to predict physiological salt stress score) or unsupervised (e.g., Multi-Omics Factor Analysis) learning.
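The integration steps in Part C can be sketched in Python as follows. This is a minimal illustration on synthetic data, not the protocol's reference implementation: the gene and protein identifiers, the `protein_to_gene` map, and the stress scores are all hypothetical placeholders, and the Elastic Net settings are arbitrary.

```python
import numpy as np
import pandas as pd
from sklearn.linear_model import ElasticNet
from sklearn.preprocessing import StandardScaler

# Toy matrices: rows are features, columns are samples (as in the protocol).
samples = ["s1", "s2", "s3", "s4", "s5", "s6"]
rng = np.random.default_rng(0)
tpm = pd.DataFrame(rng.gamma(2.0, 50.0, size=(4, 6)),
                   index=["AT1G01010", "AT1G01020", "AT1G01030", "AT1G01040"],
                   columns=samples)
lfq = pd.DataFrame(rng.gamma(2.0, 1e6, size=(3, 6)),
                   index=["P1", "P2", "P3"], columns=samples)

# Hypothetical protein -> gene map (in practice derived from Araport11).
protein_to_gene = {"P1": "AT1G01010", "P2": "AT1G01030", "P3": "AT1G09999"}

# Re-index proteins by encoding gene and keep only shared gene-protein pairs.
lfq_by_gene = lfq.rename(index=protein_to_gene)
shared = tpm.index.intersection(lfq_by_gene.index)

# Log-transform, transpose to samples x features, and tag each omics layer.
rna = np.log2(tpm.loc[shared] + 1).T.add_prefix("rna_")
prot = np.log2(lfq_by_gene.loc[shared] + 1).T.add_prefix("prot_")
X = pd.concat([rna, prot], axis=1)

# Placeholder physiological salt stress scores, one per sample.
y = pd.Series(rng.normal(size=6), index=samples)
model = ElasticNet(alpha=0.1, l1_ratio=0.5, max_iter=10000)
model.fit(StandardScaler().fit_transform(X), y)
```

The sketch assumes a one-to-one protein-to-gene mapping. If several protein groups map to the same gene, they would need to be aggregated (or kept as separate features) before the intersection step.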

Protocol: GC-MS Based Metabolomics for Plant Phenotyping

Title: Untargeted Metabolite Profiling for Genotype Discrimination.

Objective: To generate metabolomic fingerprints from root exudates of different wheat cultivars for classification using ML models.

Materials:

- Hydroponically grown wheat seedlings (14-day-old).

- Methanol (HPLC grade).

- Methoxyamine hydrochloride.

- N-Methyl-N-(trimethylsilyl)trifluoroacetamide (MSTFA).

- Retention index markers (alkane series, C8-C40).

- GC-MS system with electron ionization (EI).

Procedure:

- Exudate Collection: Rinse roots in sterile water, immerse in 10 mL collection solution for 4h. Lyophilize the solution.

- Metabolite Extraction: Resuspend lyophilized exudate in 1 mL 80% methanol. Sonicate 15 min, centrifuge at 14,000 × g, 20 min, 4°C. Transfer supernatant, dry under N₂ stream.

- Derivatization: Add 50 µL methoxyamine (20 mg/mL in pyridine), incubate 90 min, 37°C, shaking. Add 80 µL MSTFA, incubate 30 min, 37°C.

- GC-MS Analysis: Inject 1 µL in splitless mode. Use DB-5MS column. Oven program: 70°C (5 min), ramp 5°C/min to 310°C, hold 10 min. EI at 70 eV, scan range m/z 50-600.

- Data Processing: Use AMDIS or MS-DIAL for peak deconvolution, alignment, and peak table generation. Annotate peaks using NIST library and retention index matching.

- ML Pipeline: Normalize peak table (probabilistic quotient normalization). Use the table (transposed to samples × metabolites) as input for a Random Forest classifier (cultivar label) or a PCA for outlier detection.
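Probabilistic quotient normalization, named in the ML pipeline step, can be written in a few lines. The sketch below assumes a samples × features peak table with no zero-valued reference intensities and uses the across-sample median as the reference spectrum. The function name `pqn_normalize` is illustrative and not taken from any package.

```python
import numpy as np

def pqn_normalize(X):
    """Probabilistic quotient normalization for a samples x features matrix."""
    X = np.asarray(X, dtype=float)
    # Step 1: total-intensity normalization to put samples on a common scale.
    X_tot = X / X.sum(axis=1, keepdims=True)
    # Step 2: reference spectrum = median of each feature across samples.
    reference = np.median(X_tot, axis=0)
    # Step 3: per-sample dilution factor = median quotient to the reference.
    quotients = X_tot / reference
    dilution = np.median(quotients, axis=1, keepdims=True)
    return X_tot / dilution

# Three "samples" that differ only by dilution should become identical.
base = np.array([10.0, 20.0, 30.0, 40.0])
peaks = np.vstack([base, 2.0 * base, 0.5 * base])
normalized = pqn_normalize(peaks)
```

In the toy example the three rows differ only by a dilution factor, so they collapse onto the same profile after normalization.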

Visualizations

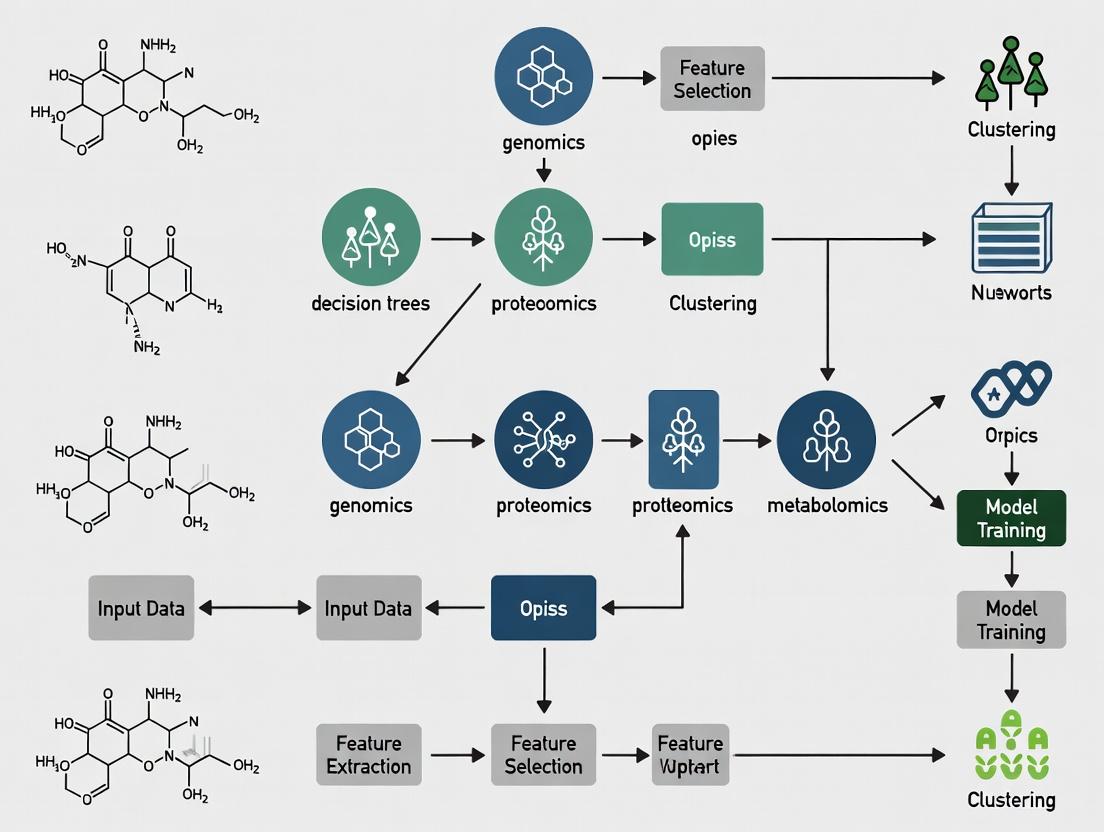

Title: The Central Dogma and Multi-Omics Integration for ML.

Title: Concurrent RNA & Protein Extraction Workflow for ML.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents for Plant Multi-Omics Experiments

| Reagent / Kit Name | Vendor (Example) | Function in Multi-Omics Workflow | Key Consideration for ML-Ready Data |

|---|---|---|---|

| TRIzol Reagent | Thermo Fisher | Simultaneous extraction of RNA, DNA, and protein from a single sample. | Minimizes batch variation between omics layers from the same biological source. |

| RNeasy Plant Mini Kit | Qiagen | High-quality total RNA purification, includes DNase treatment. | Ensures high RIN values for reliable transcriptomics data, reducing technical noise. |

| DNeasy Plant Pro Kit | Qiagen | Genomic DNA isolation for sequencing or genotyping. | Provides high-molecular-weight DNA for long-read sequencing, improving variant calling. |

| iST (in-StageTip) Kit | PreOmics | All-in-one protein extraction, digestion, and cleanup for MS. | Standardizes proteomics sample prep, reducing missing values in the final dataset. |

| AMPure XP Beads | Beckman Coulter | Size selection and cleanup of NGS libraries. | Critical for obtaining uniform sequencing library sizes, impacting read alignment metrics. |

| TMTpro 16plex | Thermo Fisher | Isobaric labeling for multiplexed quantitative proteomics. | Allows 16-sample multiplexing, enabling large cohort studies with reduced run-to-run variance. |

| MS-grade Trypsin | Promega | Specific digestion of proteins into peptides for LC-MS/MS. | Digestion efficiency affects protein coverage and quantification accuracy. |

| NIST SRM 1950 | NIST | Standard Reference Material for metabolomics method validation. | Provides a benchmark for inter-laboratory data normalization, crucial for meta-analysis. |

Integrating multi-omics data (genomics, transcriptomics, proteomics, metabolomics) is critical for understanding plant systems biology. However, this integration presents three primary challenges that complicate machine learning (ML) model development: (1) High Dimensionality (features >> samples), leading to the "curse of dimensionality"; (2) Multi-Source Noise from technical variation and biological stochasticity; and (3) Immense Biological Complexity from nonlinear interactions across temporal, spatial, and environmental scales. This application note provides protocols and frameworks to address these challenges within an ML-driven research thesis.

Application Notes & Quantitative Data Summaries

Table 1: Characteristic Scale and Dimensionality of Plant Omics Modalities

| Omics Layer | Typical Measurement Scale | Approx. Features in Model Plants (e.g., Arabidopsis, Maize) | Primary Source of Noise |

|---|---|---|---|

| Genomics | DNA sequence / variation | ~25,000 - 60,000 genes + regulatory regions | Sequencing errors, alignment artifacts |

| Transcriptomics | RNA abundance | ~20,000 - 50,000 transcripts | Batch effects, low-abundance transcripts |

| Proteomics | Protein abundance/PTMs | >10,000 - 30,000 protein groups | Ion suppression, dynamic range limits |

| Metabolomics | Metabolite abundance | 1,000 - 10,000+ metabolic features | Ionization efficiency, matrix effects |

Table 2: Common ML Models and Their Application to Multi-Omics Challenges

| Challenge | ML Approach | Key Function | Example Tool/Package |

|---|---|---|---|

| Dimensionality Reduction | Autoencoders, t-SNE, UMAP | Non-linear feature compression, visualization | SCANPY, Seurat |

| Feature Selection | LASSO, Random Forest, MCFS | Identify key biomarkers across omics | scikit-learn, Boruta |

| Data Integration | Multi-Kernel Learning, DIABLO | Fuse disparate omics data types into a model | mixOmics, MOFA2 |

| Noise Robustness | Variational Autoencoders (VAEs), Robust PCA | Denoise and impute missing values | scVI, DrImpute |

| Modeling Complexity | Graph Neural Networks (GNNs), MLPs | Model pathway and interaction networks | PyTorch Geometric, Keras |

Detailed Experimental Protocols

Protocol 1: An Integrated Workflow for Multi-Omics Data Preprocessing and Dimensionality Reduction

Objective: To generate a clean, integrated, and lower-dimensional feature set from raw multi-omics data for downstream ML analysis.

Materials: See "The Scientist's Toolkit" below.

Procedure:

- Data Acquisition & Alignment: For each omics dataset (e.g., RNA-seq counts, LC-MS/MS peak areas), ensure samples are consistently labeled. Map features to a common reference (e.g., Gene ID for transcripts/proteins; KEGG or BinBase ID for metabolites).

- Normalization & Batch Correction:

  - Transcriptomics: Apply TPM or DESeq2's median-of-ratios normalization. Correct for batch effects using ComBat (from the `sva` package) or scVI for more complex designs.

  - Metabolomics/Proteomics: Perform median or quantile normalization. Use QC-sample-based correction (e.g., SERRF) or linear models to remove systematic drift.

- Missing Value Imputation: For proteomics/metabolomics, use k-Nearest Neighbors (KNN) imputation or MissForest (random forest-based) with a limit of ~20-30% missingness.

- Feature Filtering: Remove low-variance features (e.g., bottom 20%) and features with excessive missing values.

- Multi-Omics Integration & Reduction: Apply a multi-view dimensionality reduction technique, for example MOFA2 (Multi-Omics Factor Analysis):

  a. Create a `MultiAssayExperiment` object with filtered matrices.

  b. Train the MOFA model: `MOFAobject <- create_mofa(data)` followed by `MOFAobject <- prepare_mofa(MOFAobject, ...)` and `MOFAobject <- run_mofa(MOFAobject)`.

  c. Extract the lower-dimensional factors (latent variables) that capture shared variance across omics layers. These factors become the input for predictive ML models.
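The imputation and feature-filtering steps of this protocol can be sketched with scikit-learn's `KNNImputer`. This is an illustrative sketch on synthetic data. The helper name `impute_and_filter` and its default thresholds (30% missingness, bottom 20% variance, k = 5) are assumptions chosen to mirror the ranges quoted above.

```python
import numpy as np
import pandas as pd
from sklearn.impute import KNNImputer

def impute_and_filter(df, max_missing=0.3, drop_var_quantile=0.2, k=5):
    """df: samples x features, NaN = missing. Returns an imputed, filtered copy."""
    # Drop features whose missing fraction exceeds the limit.
    kept = df.loc[:, df.isna().mean(axis=0) <= max_missing]
    # KNN imputation: fill each gap from the k most similar samples.
    imputed = pd.DataFrame(KNNImputer(n_neighbors=k).fit_transform(kept),
                           index=kept.index, columns=kept.columns)
    # Remove the lowest-variance features (bottom 20% by default).
    variances = imputed.var(axis=0)
    return imputed.loc[:, variances > variances.quantile(drop_var_quantile)]

# Synthetic abundance table with increasing variance from f0 to f9.
rng = np.random.default_rng(1)
data = pd.DataFrame(rng.normal(size=(12, 10)) * np.arange(1, 11),
                    columns=[f"f{i}" for i in range(10)])
data.iloc[:6, 0] = np.nan   # f0: 50% missing, so it is removed
data.iloc[0, 5] = np.nan    # f5: a single gap, so it is imputed
clean = impute_and_filter(data)
```

In a real study the imputer and the variance threshold would be fitted on the training samples only, so that no information from held-out samples leaks into preprocessing.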

Protocol 2: Building a Robust ML Classifier for Stress Phenotype Prediction

Objective: To develop a classifier that predicts a plant's stress response (e.g., drought-tolerant vs. sensitive) from integrated multi-omics data, addressing noise and complexity.

Materials: Processed multi-omics factors from Protocol 1, phenotype labels, scikit-learn/PyTorch environment.

Procedure:

- Dataset Partitioning: Split data into Training (70%), Validation (15%), and Test (15%) sets. Ensure stratification by phenotype label.

- Model Architecture (Example: Multi-Layer Perceptron - MLP): Design an MLP with:

- Input Layer: Nodes = number of latent factors from MOFA2 (e.g., 15).

- Hidden Layers: 2-3 dense layers with ReLU activation and Dropout (rate=0.3-0.5) for regularization against noise.

- Output Layer: Softmax activation for classification.

- Training with Regularization: Use Adam optimizer, cross-entropy loss. Implement early stopping monitored on validation loss (patience=20 epochs). Use L2 weight decay (1e-4) to prevent overfitting to high-dimensional noise.

- Interpretation with SHAP: Apply SHAP (SHapley Additive exPlanations) to the trained model using the `shap` library (`KernelExplainer` or `DeepExplainer`) to identify which latent factors (and by extension, which original omics features) drive predictions.
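A compact approximation of the training recipe above can be built with scikit-learn's `MLPClassifier`. Note the substitutions: `MLPClassifier` has no dropout layers, so this sketch relies on L2 weight decay (`alpha`) and early stopping only, and the validation split is carved out internally via `validation_fraction` rather than held as a separate set. The data are synthetic stand-ins for 15 MOFA2 factors, and the layer sizes are arbitrary.

```python
from sklearn.datasets import make_classification
from sklearn.model_selection import train_test_split
from sklearn.neural_network import MLPClassifier
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in for 15 latent factors and binary phenotype labels.
X, y = make_classification(n_samples=300, n_features=15, n_informative=6,
                           random_state=0)

# Hold out a stratified 15% test set; early stopping takes its validation
# split (about 15% of all samples) from the remaining training data.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.15, stratify=y, random_state=0)

scaler = StandardScaler().fit(X_train)
clf = MLPClassifier(hidden_layer_sizes=(32, 16), activation="relu",
                    solver="adam", alpha=1e-4, early_stopping=True,
                    validation_fraction=0.18, n_iter_no_change=20,
                    max_iter=500, random_state=0)
clf.fit(scaler.transform(X_train), y_train)
test_accuracy = clf.score(scaler.transform(X_test), y_test)
```

For the dropout-regularized architecture described in the protocol, a PyTorch or Keras model would be needed. The partitioning, scaling, and early-stopping logic carries over unchanged.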

Visualization of Workflows and Relationships

Title: Multi-Omics Data Preprocessing and Integration Workflow

Title: ML Model Training and Interpretation Pipeline

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools and Reagents for Plant Multi-Omics Experiments

| Item / Solution | Function in Multi-Omics Pipeline | Example Product / Kit |

|---|---|---|

| mRNA Sequencing Kit | High-throughput transcriptome profiling from limited plant tissue. | Illumina Stranded mRNA Prep, NEBNext Ultra II |

| Protein Lysis Buffer | Efficient extraction of proteins from fibrous plant cell walls. | TRIzol-compatible buffers, Urea-Thiourea-CHAPS buffer |

| SPE Cartridges (C18, HILIC) | Clean-up and fractionation of metabolites/proteomes prior to LC-MS. | Waters Oasis, Phenomenex Strata |

| Indexed Adapters & Barcodes | Multiplexing samples for cost-effective sequencing. | Illumina Dual Index UD Sets, IDT for Illumina |

| Stable Isotope Standards | Absolute quantification and noise reduction in MS-based omics. | Cambridge Isotope Labs 13C/15N-labeled amino acids, Metabolomics standards |

| QC Reference Material | Pooled sample for monitoring technical noise and batch correction. | Custom-built pool from study tissues |

| Cell Wall Digesting Enzymes | For protoplasting in single-cell omics protocols. | Cellulase R10, Macerozyme R10 |

| Magnetic Bead Cleanup Kits | PCR purification and size selection for NGS libraries. | SPRIselect beads (Beckman Coulter) |

Within plant multi-omics research, the selection of an appropriate machine learning (ML) paradigm is foundational. Supervised and unsupervised learning serve distinct purposes in extracting biological insights from complex datasets, including genomics, transcriptomics, proteomics, and metabolomics. This application note delineates these core concepts, provides actionable protocols for their implementation, and contextualizes their utility in driving hypotheses and discoveries in plant biology and related drug development.

Core Concepts: Supervised vs. Unsupervised Learning

Supervised Learning involves training a model on a labeled dataset, where each input sample is associated with a known output. The model learns a mapping function to predict the output for new, unseen data. It is ideal for prediction and classification tasks.

Unsupervised Learning involves finding intrinsic patterns, structures, or groupings within an unlabeled dataset. There is no predefined output to predict. It is ideal for exploration, dimensionality reduction, and discovery of novel biological states.

Quantitative Comparison of Supervised vs. Unsupervised Learning

Table 1: Conceptual Comparison for Biological Data Analysis

| Feature | Supervised Learning | Unsupervised Learning |

|---|---|---|

| Primary Goal | Prediction of known labels/values | Discovery of hidden structures |

| Data Requirement | Labeled data (e.g., phenotype, treatment) | Unlabeled data |

| Common Tasks | Classification, Regression | Clustering, Dimensionality Reduction |

| Typical Algorithms | Random Forest, SVM, Neural Networks | k-means, Hierarchical Clustering, PCA, t-SNE, UMAP |

| Validation | Cross-validation against known labels | Internal metrics (silhouette, inertia) & biological validation |

| Application Example in Plant Omics | Predicting stress resistance from transcriptomes | Identifying novel cell types or metabolic pathways from single-cell data |

| Challenge | Requires high-quality, often scarce, labeled data | Interpretation of results can be subjective; requires domain expertise |

Table 2: Performance Metrics for Common Algorithms (Hypothetical Benchmark on Plant Transcriptomic Data)

| Algorithm Type | Example Algorithm | Typical Metric | Example Performance* |

|---|---|---|---|

| Supervised (Classification) | Random Forest | Accuracy / F1-Score | 92% Accuracy |

| Supervised (Regression) | Gradient Boosting | R² Score | 0.87 R² |

| Unsupervised (Clustering) | k-means | Silhouette Score | 0.65 Silhouette |

| Unsupervised (Dimensionality Reduction) | UMAP | N/A (Visualization) | Preserves 80% of local structure |

*Performance is dataset-dependent; values are illustrative.

Detailed Experimental Protocols

Protocol 1: Supervised Learning for Phenotype Prediction from RNA-Seq Data

Objective: To train a classifier that predicts a binary plant phenotype (e.g., drought susceptible vs. resistant) from gene expression data.

Materials & Input Data:

- RNA-Seq Dataset: Normalized count matrix (genes x samples).

- Phenotype Labels: Binary vector corresponding to each sample.

- Software: Python (scikit-learn, pandas) or R (caret, tidyverse).

Procedure:

- Data Preprocessing:

- Filter lowly expressed genes (e.g., keep genes with counts >10 in at least 20% of samples).

- Apply a variance-stabilizing or log transformation (e.g., log2(CPM+1), DESeq2's vst, or the regularized log transformation).

- Split data into training (70%), validation (15%), and test (15%) sets, stratifying by phenotype.

Feature Selection (Optional but Recommended for High-Dimensional Data):

- Perform differential expression analysis (e.g., DESeq2, edgeR) on the training set.

- Select top N (e.g., 500-1000) most significantly differentially expressed genes as features.

Model Training & Validation:

- Train a classifier (e.g., Random Forest or Support Vector Machine) on the training set using the selected features.

- Tune hyperparameters (e.g., `mtry` for RF; `C` and `gamma` for SVM) via grid/random search on the validation set, optimizing for accuracy or F1-score.

- Retrain the model with optimal parameters on the combined training+validation set.

Model Evaluation:

- Apply the final model to the held-out test set.

- Report performance metrics: Confusion Matrix, Accuracy, Precision, Recall, F1-Score, and ROC-AUC.

Biological Interpretation:

- Extract feature importance scores from the model (e.g., Gini importance from RF).

- Perform pathway enrichment analysis (e.g., using g:Profiler, PlantGSEA) on high-importance genes to derive biological insights.
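The supervised workflow above can be sketched end to end with a scikit-learn `Pipeline`. Two substitutions are made for brevity and should be read as assumptions: an ANOVA F-test (`SelectKBest` with `f_classif`) stands in for DESeq2/edgeR differential expression, and 5-fold cross-validation on the training data replaces the fixed validation set. Placing the selector inside the pipeline keeps feature selection restricted to training folds, which is the point of running it on the training set only.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.metrics import f1_score, roc_auc_score
from sklearn.model_selection import GridSearchCV, train_test_split
from sklearn.pipeline import Pipeline

# Synthetic expression matrix (samples x genes, log scale) with 20 signal genes.
rng = np.random.default_rng(0)
n_samples, n_genes = 120, 2000
X = rng.normal(size=(n_samples, n_genes))
y = rng.integers(0, 2, size=n_samples)   # 0 = susceptible, 1 = resistant
X[y == 1, :20] += 1.5

X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.15, stratify=y, random_state=0)

# Feature selection lives inside the pipeline, so it is refit per CV fold.
pipe = Pipeline([
    ("select", SelectKBest(f_classif, k=500)),
    ("rf", RandomForestClassifier(n_estimators=200, random_state=0)),
])
search = GridSearchCV(pipe, {"rf__max_features": ["sqrt", 0.1]},
                      scoring="f1", cv=5)
search.fit(X_train, y_train)   # refits the best model on all training data

# Final evaluation on the untouched test set.
test_f1 = f1_score(y_test, search.predict(X_test))
test_auc = roc_auc_score(y_test, search.predict_proba(X_test)[:, 1])

# Gini importances for the 500 selected genes, for downstream enrichment.
importances = search.best_estimator_.named_steps["rf"].feature_importances_
```

`max_features` is scikit-learn's counterpart of the `mtry` parameter used by R random forest implementations.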

Protocol 2: Unsupervised Learning for Discovery of Metabolic Subtypes

Objective: To identify distinct metabolic profiles (chemotypes) in a population of plant extracts using untargeted metabolomics data.

Materials & Input Data:

- Metabolomics Dataset: Peak intensity matrix (metabolic features x samples), pre-aligned and normalized.

- Metadata: Sample information (e.g., genotype, organ).

- Software: Python (scikit-learn, umap-learn) or R (stats, factoextra, umap).

Procedure:

- Data Preprocessing & Scaling:

- Apply missing value imputation (e.g., k-NN imputation or replace with half-minimum).

- Standardize the data (z-score normalization) so each metabolic feature has zero mean and unit variance.

Dimensionality Reduction (Visualization):

- Perform Principal Component Analysis (PCA) to assess overall variance and check for major batch effects.

- Apply non-linear dimensionality reduction (e.g., UMAP) with 2-3 components for visualization. Use biological replicates to inform parameter tuning (`n_neighbors`, `min_dist`).

Clustering Analysis:

- On the UMAP embeddings or principal components (PCs), perform density-based clustering (e.g., HDBSCAN) or partition-based clustering (e.g., k-means, PAM).

- Determine optimal cluster number using the elbow method (for inertia/WSS) or average silhouette width.

Cluster Validation & Annotation:

- Compute cluster stability metrics (e.g., via bootstrapping).

- Statistically test for differential abundance of metabolic features between clusters (Kruskal-Wallis test).

- Annotate discriminating features using metabolomics databases (e.g., KEGG, PlantCyc, GNPS). Correlate clusters with sample metadata.

Downstream Analysis:

- Perform biomarker analysis to identify key metabolites defining each cluster.

- Map these metabolites onto biochemical pathways to infer the underlying biological processes for each novel chemotype.
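The scaling, dimensionality reduction, and cluster-number selection steps can be illustrated with scikit-learn alone. The sketch below is an assumption-laden simplification: it clusters on principal components with k-means (UMAP and HDBSCAN are omitted to avoid extra dependencies) and picks the cluster number by average silhouette width on synthetic data with three planted chemotypes.

```python
from sklearn.cluster import KMeans
from sklearn.datasets import make_blobs
from sklearn.decomposition import PCA
from sklearn.metrics import silhouette_score
from sklearn.preprocessing import StandardScaler

# Synthetic samples x metabolic-features matrix with 3 planted groups.
X, _ = make_blobs(n_samples=150, n_features=50, centers=3,
                  cluster_std=2.0, random_state=0)

# z-score each feature, then compress to the leading principal components.
X_scaled = StandardScaler().fit_transform(X)
pcs = PCA(n_components=10, random_state=0).fit_transform(X_scaled)

# Scan candidate cluster numbers and keep the best average silhouette width.
scores = {}
for k in range(2, 7):
    labels = KMeans(n_clusters=k, n_init=10, random_state=0).fit_predict(pcs)
    scores[k] = silhouette_score(pcs, labels)
best_k = max(scores, key=scores.get)
```

On real metabolomics data the silhouette curve is rarely this clean, which is why the protocol pairs it with bootstrapped stability checks and biological annotation.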

Visualizations

Supervised Learning Workflow for Phenotype Prediction

Unsupervised Learning Workflow for Novel State Discovery

Decision Tree for Choosing ML Approach in Plant Omics

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials & Tools for ML-Based Plant Multi-Omics Analysis

| Item | Category | Function & Relevance |

|---|---|---|

| RNA/DNA Extraction Kits (e.g., Qiagen RNeasy, NucleoSpin) | Wet-Lab Reagent | High-quality nucleic acid isolation is the foundational step for genomic/transcriptomic sequencing, providing the raw input data. |

| LC-MS/MS System | Analytical Instrument | Generates high-resolution metabolomic and proteomic data, the complex datasets for unsupervised pattern discovery. |

| Next-Generation Sequencer (e.g., Illumina NovaSeq) | Analytical Instrument | Produces genome-scale sequencing data (RNA-Seq, WGS) for supervised model training on genotypes/phenotypes. |

| scikit-learn (Python library) | Software Tool | Provides robust, unified implementations of both supervised (RF, SVM) and unsupervised (PCA, k-means) algorithms. |

| R tidyverse & caret/tidymodels | Software Tool | Enables reproducible data wrangling, visualization, and model training/fitting within the R ecosystem. |

| Uniform Manifold Approximation and Projection (UMAP) | Algorithm | State-of-the-art non-linear dimensionality reduction technique crucial for visualizing high-dimensional omics data. |

| Plant-Specific Databases (e.g., PlantGSEA, PlantCyc, Phytozome) | Bioinformatics Resource | Essential for the biological interpretation of model outputs (feature importance, cluster biomarkers) within a plant context. |

| High-Performance Computing (HPC) Cluster or Cloud Credit | Computational Resource | Necessary for processing large multi-omics datasets and training computationally intensive models (e.g., deep learning). |

Within plant multi-omics research, the initial phases of data handling are foundational for the successful application of machine learning (ML). This protocol outlines the rigorous processes for curating heterogeneous omics datasets, applying appropriate normalization, and defining biologically relevant features for predictive modeling in plant biology and drug discovery.

Data Curation Protocol

Curation transforms raw, disparate data into a structured, analysis-ready resource.

Multi-Omic Data Acquisition and Assembly

Objective: To compile a unified dataset from genomic, transcriptomic, proteomic, and metabolomic sources.

Protocol:

- Source Identification: Retrieve data from public repositories (see Table 1).

- Metadata Annotation: For each sample, compile a minimum metadata set: Species, Tissue, Developmental Stage, Treatment/Condition, Replicate ID, Sequencing/Platform Type.

- Data Harmonization: Map all gene, protein, or metabolite identifiers to a common database (e.g., UniProt, TAIR, PlantCyc IDs) using batch retrieval tools.

- Missing Data Audit: Report the percentage of missing values per feature (gene/protein/metabolite) and per sample. Implement a tiered removal strategy:

- Remove features with >20% missingness across all samples.

- Remove samples with >30% missingness across all features.

- Creation of Curation Log: Document all decisions, including sources, identifier mapping rates, and samples/features removed.
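The missing data audit and tiered removal can be expressed as a small pandas helper. This is a sketch under stated assumptions: the function name `audit_and_filter` and the structure of the returned log are illustrative, and sample missingness in the second tier is computed over the features that survive the first tier.

```python
import numpy as np
import pandas as pd

def audit_and_filter(df, max_feature_missing=0.20, max_sample_missing=0.30):
    """df: samples x features with NaN for missing values."""
    # Audit: missing fraction per feature and per sample, before any removal.
    log = {"feature_missing": df.isna().mean(axis=0),
           "sample_missing": df.isna().mean(axis=1)}
    # Tier 1: remove features missing in more than 20% of samples.
    keep_features = (log["feature_missing"] <= max_feature_missing).to_numpy()
    step1 = df.loc[:, keep_features]
    # Tier 2: remove samples missing more than 30% of the remaining features.
    keep_samples = (step1.isna().mean(axis=1) <= max_sample_missing).to_numpy()
    log["removed_features"] = list(df.columns[~keep_features])
    log["removed_samples"] = list(step1.index[~keep_samples])
    return step1.loc[keep_samples], log

data = pd.DataFrame(np.ones((10, 5)), index=[f"s{i}" for i in range(10)],
                    columns=list("abcde"))
data.loc[["s0", "s1", "s2"], "a"] = np.nan   # feature a: 30% missing
data.loc["s9", ["b", "c"]] = np.nan          # sample s9: 2 of 4 kept features missing
filtered, curation_log = audit_and_filter(data)
```

The returned dictionary can be written straight into the curation log so that every removed feature and sample is traceable.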

Table 1: Key Plant Multi-Omic Data Repositories

| Repository | Data Type | Primary Focus | Access Link |

|---|---|---|---|

| NCBI GEO | Transcriptomics, Epigenomics | Gene expression, methylation | https://www.ncbi.nlm.nih.gov/geo/ |

| EMBL-EBI ENA | Genomics, Metagenomics | Raw sequence data | https://www.ebi.ac.uk/ena |

| PRIDE | Proteomics | Mass spectrometry data | https://www.ebi.ac.uk/pride/ |

| MetaboLights | Metabolomics | Metabolite profiles | https://www.ebi.ac.uk/metabolights/ |

| Plant Reactome | Pathway Data | Curated plant pathways | https://plantreactome.gramene.org/ |

Quality Control (QC) Assessment

Objective: To ensure technical reliability before downstream analysis.

Protocol for RNA-Seq Data (Example):

- Run `FastQC` on raw sequence files (fastq).

- Assess per-base sequence quality, adapter contamination, and overrepresented sequences.

- Trim low-quality bases and adapters using `Trimmomatic` or `fastp`.

- Align cleaned reads to a reference genome (e.g., using `HISAT2` for plants).

- Generate alignment statistics (% mapped reads, coverage uniformity) using `SAMtools`.

- QC Threshold: Exclude samples with mapping rates < 70% or extreme global expression outliers identified via Principal Component Analysis (PCA).

Data Normalization Methodology

Normalization removes non-biological variation to enable accurate cross-sample comparison.

Selection of Normalization Technique

The method is chosen based on data type and inherent assumptions.

Table 2: Normalization Methods for Plant Omics Data

| Data Type | Recommended Method | Algorithm/R Package | Rationale |

|---|---|---|---|

| RNA-Seq (Counts) | DESeq2's Median of Ratios | `DESeq2::estimateSizeFactors` | Accounts for library size and RNA composition bias. |

| Microarray | Quantile Normalization | `limma::normalizeBetweenArrays` | Forces all sample distributions to be identical, robust for many samples. |

| Proteomics (Label-Free) | Variance Stabilizing Normalization (VSN) | `vsn::justvsn` | Stabilizes variance across the dynamic range of MS intensity data. |

| Metabolomics | Probabilistic Quotient Normalization (PQN) | `pmp::pqn_normalisation` | Corrects for dilution/concentration differences using a reference sample spectrum. |

Protocol: DESeq2 Normalization for Transcriptomics

Input: Raw count matrix (genes x samples).

Steps:

- Construct a `DESeqDataSet` object from the count matrix and sample metadata.

- Calculate sample-specific size factors: `dds <- estimateSizeFactors(dds)`.

  - Internal Calculation: For each gene, the geometric mean of its counts across all samples is calculated. Each sample's size factor is the median, over genes, of the ratio of that sample's count to the gene's geometric mean.

- Retrieve normalized counts: `normalized_counts <- counts(dds, normalized=TRUE)`.

- Validate normalization by checking that per-sample total counts correlate with the size factors before normalization and no longer do so afterwards.
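The median-of-ratios calculation is short enough to write out directly. The NumPy sketch below is an illustration of the arithmetic, not a replacement for DESeq2: the function name `size_factors` is hypothetical, and genes with a zero count in any sample are excluded because their geometric mean is zero.

```python
import numpy as np

def size_factors(counts):
    """Median-of-ratios size factors for a genes x samples count matrix."""
    counts = np.asarray(counts, dtype=float)
    # Keep genes with non-zero counts in every sample (finite log values).
    expressed = (counts > 0).all(axis=1)
    log_counts = np.log(counts[expressed])
    # Per-gene log geometric mean across samples (the pseudo-reference).
    log_geo_mean = log_counts.mean(axis=1, keepdims=True)
    # Per-sample size factor: median over genes of count / geometric mean.
    return np.exp(np.median(log_counts - log_geo_mean, axis=0))

# Sample 2 was sequenced twice as deeply as sample 1.
raw = np.array([[100, 200], [50, 100], [30, 60], [0, 5]])
sf = size_factors(raw)
normalized = raw / sf
```

In this toy case every expressed gene has the same 1:2 ratio between samples, so the size factors come out as 1/√2 and √2, and dividing by them puts both samples on the same scale.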

Feature Definition and Engineering

This step transforms normalized data into predictive variables (features) for ML models.

Protocol: Defining Pathway-Based Features

Objective: Move from individual gene/protein expression to functionally cohesive features.

Steps:

- Pathway Mapping: Map normalized gene/protein identifiers to plant-specific pathways (e.g., from Plant Reactome or KEGG) using annotation databases.

- Activity Scoring: Calculate a single activity score for each pathway per sample.

  - Common Method: Single Sample Gene Set Enrichment Analysis (ssGSEA) using the `GSVA` R package.

  - Command: `pathway_activity_scores <- gsva(normalized_expression_matrix, plant_pathway_list, method="ssgsea")`

- Result: A new feature matrix (samples x pathway activities, after transposing the pathways x samples GSVA output) with reduced dimensionality and enhanced biological interpretability.
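To make the idea of a pathway-level feature concrete, the sketch below computes a much simpler score than ssGSEA: the mean z-score of a pathway's member genes in each sample. It is a stand-in chosen for brevity, not an implementation of ssGSEA or of the `GSVA` package. The gene names, the pathway dictionary, and the function name `pathway_scores` are all hypothetical.

```python
import pandas as pd

def pathway_scores(expr, pathways):
    """expr: genes x samples (normalized, log scale). Returns samples x pathways."""
    # z-score each gene across samples so genes contribute on a common scale.
    centered = expr.sub(expr.mean(axis=1), axis=0)
    z = centered.div(expr.std(axis=1, ddof=0), axis=0)
    scores = {}
    for name, genes in pathways.items():
        # Use only pathway members that are present in the expression matrix.
        members = z.index.intersection(genes)
        if len(members) > 0:
            scores[name] = z.loc[members].mean(axis=0)
    return pd.DataFrame(scores)

expr = pd.DataFrame([[1.0, 2.0, 3.0], [2.0, 4.0, 6.0], [5.0, 5.0, 8.0]],
                    index=["g1", "g2", "g3"], columns=["s1", "s2", "s3"])
plant_pathway_list = {"pathA": ["g1", "g2"], "pathB": ["g3", "g_missing"]}
activity = pathway_scores(expr, plant_pathway_list)
```

Genes with zero variance across samples would produce undefined z-scores and should be filtered out beforehand. Rank-based methods such as ssGSEA are more robust to outliers than this mean-based score.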

Protocol: Handling Co-Feeding Data (e.g., Metabolomics & Proteomics)

Objective: Integrate different data layers into composite features.

Steps:

- Pairwise Correlation: For a given metabolic reaction, calculate pairwise correlations between the abundance of the enzyme (proteomics) and its substrate/product (metabolomics) across all samples.

- Define Reaction Flux Score: For each sample, compute a z-score for the enzyme and metabolite levels. Define a reaction flux proxy feature as the product of these z-scores: `Flux_proxy = Z_enzyme * Z_metabolite`.

- Validation: This engineered feature should correlate strongly with direct flux measurements (if available) or show differential activity between treatment and control groups.
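The flux proxy defined above is a per-sample product of z-scores, which can be computed as follows. The abundance values are made up for illustration and the function name `flux_proxy` is hypothetical.

```python
import numpy as np

def flux_proxy(enzyme, metabolite):
    """Per-sample product of z-scores for one enzyme-metabolite pair."""
    enzyme = np.asarray(enzyme, dtype=float)
    metabolite = np.asarray(metabolite, dtype=float)
    # Population z-scores (ddof=0) across samples for each layer.
    z_enzyme = (enzyme - enzyme.mean()) / enzyme.std()
    z_metabolite = (metabolite - metabolite.mean()) / metabolite.std()
    return z_enzyme * z_metabolite

enzyme_abundance = np.array([1.0, 2.0, 3.0, 4.0, 5.0])
product_abundance = np.array([2.1, 3.9, 6.2, 8.1, 9.8])
proxy = flux_proxy(enzyme_abundance, product_abundance)

# Pearson correlation between the two layers, as in the pairwise step.
pairwise_r = np.corrcoef(enzyme_abundance, product_abundance)[0, 1]
```

With population z-scores, the mean of the proxy across samples equals the Pearson correlation from the pairwise step, which links the two steps of the protocol. One consequence worth keeping in mind is that the product is positive both when enzyme and metabolite are jointly high and when they are jointly low, so the proxy reflects co-variation rather than the absolute level of flux.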

Workflow: From Raw Data to ML-Ready Features

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents and Tools for Plant Multi-Omics Sample Prep

| Item | Function in Workflow | Example Product/Kit |

|---|---|---|

| Poly(A) mRNA Capture Beads | Isolation of high-integrity mRNA from polysaccharide-rich plant tissues. | NEBNext Poly(A) mRNA Magnetic Isolation Module. |

| Plant-Specific Lysis Buffer | Effective disruption of tough plant cell walls and inhibition of endogenous RNases and other nucleases. | QIAGEN RLT Plus Buffer with β-mercaptoethanol. |

| Phosphatase/Protease Inhibitor Cocktail (Plant) | Preserves post-translational modification states (e.g., phosphorylation) during protein extraction. | Thermo Scientific Halt Protease & Phosphatase Inhibitor Cocktail. |

| Internal Standard Spike-Ins (Metabolomics) | Corrects for technical variance in mass spectrometry; isotope-labeled plant metabolites. | Custom 13C-labeled compound mixes. |

| UMI Adapters (RNA-Seq) | Unique Molecular Identifiers to correct for PCR amplification bias in low-input samples. | Illumina Stranded mRNA UMI Kits. |

| Cross-Linking Reagent (ChIP-Seq) | For protein-DNA interaction studies (e.g., transcription factor binding). | Formaldehyde (for in vivo crosslinking) or DSG (Disuccinimidyl glutarate). |

Exploratory Data Analysis (EDA) Techniques for Multi-Omics Visualization

Within the thesis Machine Learning for Plant Multi-Omics Data Analysis Research, Exploratory Data Analysis (EDA) serves as the critical first step to uncover patterns, detect anomalies, and formulate hypotheses from complex biological datasets. Multi-omics integration—combining genomics, transcriptomics, proteomics, and metabolomics—presents unique challenges due to data heterogeneity, scale, and noise. Effective EDA visualization techniques are paramount for discerning biological signals, guiding subsequent machine learning model selection, and informing experimental validation in plant science and agricultural drug development.

Core EDA Visualization Techniques & Protocols

Protocol: t-Distributed Stochastic Neighbor Embedding (t-SNE)

- Objective: Visualize high-dimensional multi-omics sample clustering in 2D/3D to identify batch effects, biological subgroups, or outliers.

- Procedure:

- Input: A merged feature matrix (samples x features) from normalized omics datasets (e.g., gene expression + metabolite abundances).

- Perplexity Tuning: Set perplexity parameter (typically 5-50). For plant studies with distinct tissues, start with a lower value (~10).

- Run t-SNE: Use sklearn.manifold.TSNE. Set n_components=2 and fix random_state for reproducibility. Iterate over multiple perplexities.

- Visualization: Scatter plot colored by known metadata (e.g., tissue type, treatment, genotype).

- Interpretation: Assess cluster cohesion and separation. Outliers may indicate poor sample quality or novel biological states.
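The procedure above can be sketched as follows. The data are synthetic (two simulated sample groups), and the perplexity grid and the choice of `init="pca"` are assumptions made for the example.

```python
import numpy as np
from sklearn.manifold import TSNE
from sklearn.preprocessing import StandardScaler

# Synthetic merged matrix: 2 groups of 30 samples x 200 features
rng = np.random.default_rng(42)
X = np.vstack([rng.normal(0.0, 1.0, (30, 200)),
               rng.normal(1.5, 1.0, (30, 200))])
X_scaled = StandardScaler().fit_transform(X)

# Perplexity must stay below the number of samples
embeddings = {}
for perplexity in (5, 10, 30):
    tsne = TSNE(n_components=2, perplexity=perplexity,
                init="pca", random_state=0)
    embeddings[perplexity] = tsne.fit_transform(X_scaled)

for perplexity, emb in embeddings.items():
    print(perplexity, emb.shape)
```

Each embedding can then be plotted as a scatter plot colored by metadata. Comparing the layouts across perplexities helps distinguish stable clusters from artifacts of a single parameter setting.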

Table 1: Comparison of Dimensionality Reduction Techniques

| Technique | Key Principle | Best For Multi-Omics EDA When... | Computational Load | ML Readiness |

|---|---|---|---|---|

| PCA | Linear variance maximization | Assessing overall variance, detecting strong batch effects. | Low | High (features usable) |

| t-SNE | Preserves local neighborhoods | Visualizing clear cluster separation among samples. | Medium | Low (output for viz only) |

| UMAP | Balances local/global structure | Needing scalable, reproducible layouts for large cohorts. | Medium-High | Medium |

Correlation Network Analysis

Protocol: Constructing a Feature-Feature Interaction Network

- Objective: Identify strongly correlated features (e.g., genes-metabolites) across omics layers to hypothesize functional relationships.

- Procedure:

- Compute Pairwise Correlations: Calculate Spearman's rank correlation between all pairs of selected key features across omics types.

- Thresholding: Apply a significance (p < 0.01) and magnitude (|ρ| > 0.8) filter to create an adjacency matrix.

- Network Construction: Use networkx in Python. Nodes = features, edges = significant correlations.

- Layout & Visualization: Use a force-directed layout (e.g., Fruchterman-Reingold). Color nodes by omics type (e.g., genomics=blue, metabolomics=red).

- Analysis: Identify hub nodes (high degree centrality) as potential key regulators in plant pathways.
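As a rough illustration of the correlation and thresholding steps, the sketch below builds a boolean adjacency matrix with SciPy and reads hub nodes from node degree. The feature names and synthetic data are hypothetical, and the matrix form is used here instead of a graph object to keep the example dependency-light.

```python
import numpy as np
from scipy.stats import spearmanr

def correlation_adjacency(X, rho_min=0.8, alpha=0.01):
    """Boolean adjacency from pairwise Spearman correlations.

    X: (n_samples, n_features) with at least three feature columns,
    so that spearmanr returns full correlation and p-value matrices.
    """
    rho, pval = spearmanr(X)
    adj = (np.abs(rho) > rho_min) & (pval < alpha)
    np.fill_diagonal(adj, False)  # no self-loops
    return rho, adj

# Synthetic data: three co-varying features and two independent ones
rng = np.random.default_rng(0)
base = rng.normal(size=40)
X = np.column_stack([
    base,
    base + 0.05 * rng.normal(size=40),
    -base + 0.05 * rng.normal(size=40),
    rng.normal(size=40),
    rng.normal(size=40),
])
feature_names = ["gene_A", "gene_B", "metab_X", "gene_C", "metab_Y"]

rho, adj = correlation_adjacency(X)
degree = adj.sum(axis=1)  # degree centrality (unnormalized)
hubs = [feature_names[i] for i in np.flatnonzero(degree == degree.max())]
print(dict(zip(feature_names, degree.tolist())), hubs)
```

The adjacency matrix can be handed to networkx (for example with `networkx.from_numpy_array`) for layout and drawing. With many feature pairs, a multiple-testing correction on the p-values is advisable before thresholding.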

Diagram 1: Workflow for building a multi-omics correlation network.

Parallel Coordinates for Multi-Omics Profile Inspection

Protocol: Visualizing Integrated Sample Profiles

- Objective: Compare the coordinated omics response of individual samples or sample groups across selected features.

- Procedure:

- Feature Selection: Choose 10-20 highly variable or biologically relevant features from each omics dataset.

- Data Scaling: Min-max normalize each feature column to a common range (e.g., 0-1).

- Plot Setup: Create parallel axes, each representing one selected feature.

- Plotting: Draw each sample's profile as a connected line across all axes. Use alpha blending for clarity.

- Interpretation: Look for distinct profile shapes grouping by phenotype. Crossing lines indicate divergent molecular responses.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Multi-Omics EDA in Plant Research

| Item | Function in Multi-Omics EDA | Example/Note |

|---|---|---|

| RStudio/Python Jupyter | Interactive development environment for scripting EDA analyses. | Essential for reproducible analysis notebooks. |

| scikit-learn (Python) | Provides PCA, t-SNE, and other preprocessing/ML tools (UMAP requires the separate umap-learn package). | sklearn.manifold, sklearn.decomposition. |

| ggplot2/Plotly (R/Python) | Creates publication-quality static & interactive visualizations. | ggplot2 for PCA biplots; plotly.express for 3D scatter. |

| mixOmics (R) | Specialist package for multivariate analysis of multi-omics data. | Offers sPLS-DA, DIABLO for integrative analysis. |

| Cytoscape | Platform for advanced network visualization and analysis. | Import correlation networks for GUI-based exploration. |

| MetaCyc Plant Pathway DB | Curated database of plant metabolic pathways for annotation. | Critical for interpreting metabolomics/proteomics hubs. |

Integrated Workflow for Plant Multi-Omics EDA

The following protocol outlines a complete EDA session for integrated transcriptomics and metabolomics data from a plant stress response study.

Diagram 2: A standard integrated EDA workflow for plant multi-omics data.

Detailed Protocol Steps:

- Data Loading & QC: Load transcript (RNA-seq count matrix) and metabolite (peak intensity table) data. Filter transcripts by expression (>1 CPM in >50% samples), remove low-variance metabolites.

- Normalization: Apply TMM normalization (transcripts) and Pareto scaling (metabolites). Log-transform as appropriate.

- Data Integration: Merge matrices by sample ID. Use common sample indexing.

- Unsupervised Exploration: Perform PCA on the integrated matrix. Plot PC1 vs PC2, colored by treatment and tissue. Calculate variance contribution.

- Supervised Comparison: For key contrast (e.g., drought vs control), compute differential expression/abundance. Select top 50 significant features per omics layer.

- Cross-Omics Correlation: Calculate Spearman correlation between the selected transcript and metabolite features. Filter (|ρ|>0.7, p.adj<0.05).

- Visualization: Create a bipartite network (Cytoscape) and a clustered heatmap of the correlation matrix.

- Annotation & Hypothesis: Annotate hub genes (e.g., transcription factors) and hub metabolites (e.g., phytohormones). Overlay onto pathway maps (e.g., phenylpropanoid biosynthesis). Formulate testable hypotheses for random forest/network-based ML analysis.

Building Predictive Models: ML Techniques for Trait Prediction and Gene Discovery

Within the broader thesis on Machine learning for plant multi-omics data analysis research, the integration of diverse data modalities—such as genomics, transcriptomics, proteomics, and metabolomics—is paramount. This document outlines detailed Application Notes and Protocols for three canonical data fusion strategies: Early, Intermediate, and Late Fusion. These protocols are designed to enable researchers and drug development professionals to derive holistic, systems-level insights from plant multi-omics datasets, crucial for understanding complex traits, stress responses, and bio-compound synthesis.

Table 1: Comparative Analysis of Multi-Omics Data Fusion Strategies

| Feature | Early Fusion (Feature-Level) | Intermediate Fusion (Joint Learning) | Late Fusion (Decision-Level) |

|---|---|---|---|

| Integration Point | Raw or pre-processed features concatenated before model input. | During model processing using architectures enabling cross-omics interaction. | After separate omics-specific models make predictions. |

| Key Advantage | Simple; allows direct feature correlation discovery. | Captures complex, non-linear interactions between modalities. | Leverages optimal models for each data type; robust to missing modalities. |

| Key Limitation | Highly susceptible to noise and curse of dimensionality. | Architecturally complex; requires careful tuning. | Misses low-level inter-omics interactions. |

| Typical Model | PCA on concatenated matrix; Standard MLPs or RF. | Multi-modal neural networks, Cross-attention mechanisms, Graph Neural Networks. | Weighted averaging, Voting, Stacking of separate model outputs. |

| Data Requirement | All omics samples must be fully paired and aligned. | Can handle partially aligned or unaligned samples with specific architectures. | Can easily handle unpaired data across modalities. |

| Use Case Example | Identifying co-regulated gene-metabolite modules under drought stress. | Predicting complex phenotypes from linked but heterogeneous omics layers. | Integrating legacy genomic data with newly acquired metabolomic profiles. |

Experimental Protocols

Protocol 3.1: Early Fusion for Plant Stress Response Profiling

Aim: To identify a unified biomarker signature for heat shock response in Arabidopsis thaliana by integrating transcriptomic and metabolomic features.

Materials: (See Scientist's Toolkit, Section 5)

- RNA-Seq data (TPM values) from leaf tissue, control vs. heat shock (42°C, 2hr).

- LC-MS metabolomics data (peak intensities) from the same samples.

- Software: Python (Pandas, NumPy, scikit-learn), R.

Procedure:

- Pre-processing & Normalization:

- Transcriptomics: Filter low-expression genes (TPM > 1 in >50% samples). Apply log2(TPM+1) transformation. Standardize (z-score) per gene.

- Metabolomics: Apply pareto scaling to peak intensity data. Impute missing values with k-Nearest Neighbors (k=5).

- Feature Concatenation: Align samples by Plant ID. With samples as rows, horizontally stack the processed transcriptomic matrix (N x T) and metabolomic matrix (N x M) to create a unified feature matrix (N x [T+M]), where N is the number of samples, T the number of transcripts, and M the number of metabolites.

- Dimensionality Reduction: Apply Principal Component Analysis (PCA) to the concatenated matrix to reduce to 50 principal components (PCs).

- Supervised Modeling: Use the 50 PCs as input to a Random Forest classifier to predict condition (Control vs. Heat Shock). Use 5-fold cross-validation.

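- Biomarker Extraction: Rank integrated features (genes + metabolites) based on Random Forest feature importance. Because the classifier is trained on PCs rather than on the original features, map importance back to genes and metabolites, e.g., by weighting each feature's absolute PCA loadings by the importance of the corresponding PC, or by refitting the Random Forest on the unreduced matrix. Validate top candidates via pathway over-representation analysis (e.g., using PlantCyc).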
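The concatenation, PCA, and cross-validated Random Forest steps might look like the sketch below. The data are synthetic, and the example assumes 20 PCs rather than 50 because the toy dataset has only 60 samples. Placing scaling and PCA inside the pipeline means they are refit on each training fold, which avoids leaking test-fold information.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic paired data: 60 samples, 300 transcripts, 80 metabolites
rng = np.random.default_rng(0)
n = 60
y = np.repeat([0, 1], n // 2)        # 0 = control, 1 = heat shock
transcripts = rng.normal(size=(n, 300))
metabolites = rng.normal(size=(n, 80))
transcripts[y == 1, :30] += 3.0       # simulated responsive genes
metabolites[y == 1, :10] += 3.0       # simulated responsive metabolites

# Early fusion: samples as rows, features concatenated column-wise
X = np.hstack([transcripts, metabolites])   # shape (N, T + M)

model = make_pipeline(
    StandardScaler(),
    PCA(n_components=20, random_state=0),
    RandomForestClassifier(n_estimators=200, random_state=0),
)
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
scores = cross_val_score(model, X, y, cv=cv, scoring="roc_auc")
print(X.shape, scores.mean())
```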

Protocol 3.2: Intermediate Fusion using Cross-Attention Networks

Aim: To predict flavonoid biosynthesis yield in Medicago truncatula cell cultures by modeling interactions between transcriptome and proteome.

Materials:

- Paired RNA-Seq and LC-MS/MS (Label-Free Quantification) data from cultures under varying elicitation conditions.

- Software: Python, PyTorch or TensorFlow.

Procedure:

- Omics-Specific Encoding:

- Pass transcriptomic data through a dedicated fully-connected neural network (FCNN) to generate an embedding vector E_t.

- Pass proteomic data through a separate FCNN to generate an embedding vector E_p.

- Cross-Attention Integration:

- Compute cross-attention scores to allow E_t to attend to E_p and vice versa, generating context-aware representations.

- Fuse these contextualized representations via concatenation or element-wise addition.

- Joint Prediction: Pass the fused representation through a final FCNN regressor to predict quantitative flavonoid yield (μg/g DW).

- Model Training & Interpretation:

- Train end-to-end using Mean Squared Error loss.

- Use gradient-based attribution methods (e.g., Integrated Gradients) on the attention layers to identify key interacting transcript-protein pairs influencing yield.
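To illustrate the cross-attention step, here is a forward-pass-only sketch in NumPy with random, untrained weights. It assumes each modality is encoded into a small set of token embeddings (for example, one per gene module) rather than a single vector, since attention over a single key is trivial. The token counts, dimensions, and helper names are all hypothetical. A trainable version would be written in PyTorch or TensorFlow as the protocol states.

```python
import numpy as np

def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)  # numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def cross_attention(queries, keys_values, w_q, w_k, w_v):
    """Scaled dot-product attention where one modality queries the other."""
    q = queries @ w_q        # (n_q, d)
    k = keys_values @ w_k    # (n_kv, d)
    v = keys_values @ w_v    # (n_kv, d)
    weights = softmax(q @ k.T / np.sqrt(q.shape[-1]))  # rows sum to 1
    return weights @ v, weights

rng = np.random.default_rng(0)
d_model, d_attn = 16, 8

def init_weights():
    """Random projection matrix (untrained, for illustration only)."""
    return rng.normal(scale=d_model ** -0.5, size=(d_model, d_attn))

E_t = rng.normal(size=(6, d_model))  # 6 transcript tokens (assumed)
E_p = rng.normal(size=(4, d_model))  # 4 protein tokens (assumed)

# Transcripts attend to proteins, and proteins attend to transcripts
t_ctx, attn_tp = cross_attention(E_t, E_p, init_weights(), init_weights(), init_weights())
p_ctx, attn_pt = cross_attention(E_p, E_t, init_weights(), init_weights(), init_weights())

# Fuse by concatenating the pooled, context-aware representations
fused = np.concatenate([t_ctx.mean(axis=0), p_ctx.mean(axis=0)])
print(attn_tp.shape, attn_pt.shape, fused.shape)
```

The attention weight matrices are the quantities one would inspect when looking for interacting transcript-protein pairs.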

Protocol 3.3: Late Fusion for Multi-Omics Disease Resistance Prediction

Aim: To classify soybean genotypes as resistant or susceptible to Phytophthora sojae by integrating predictions from independent genomic and metabolomic models.

Materials:

- Genomic SNP data (from SoySNP50K array) and root metabolomic (GC-MS) data from infected plants. Datasets are from overlapping but not perfectly matched genotypes.

- Software: scikit-learn, XGBoost.

Procedure:

- Train Unimodal Models:

- Genomic Model: Train an XGBoost classifier on SNP data (encoded as 0,1,2) to predict resistance status.

- Metabolomic Model: Train a separate XGBoost classifier on standardized metabolite peak data.

- Generate Decision-Level Outputs: For each sample with both data types, obtain prediction probabilities P_resistant(Genomics) and P_resistant(Metabolomics) from the respective models.

- Fusion & Meta-Learning:

- Create a new dataset where features are the two prediction probabilities and the target is the true label.

- Train a logistic regression "meta-model" on this new dataset to learn the optimal weights for combining the unimodal predictions. Example learned rule:

Final Score = 0.6*P_genomics + 0.4*P_metabolomics.

- Evaluation: Evaluate the final fused classifier on a held-out test set using AUC-ROC.
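A minimal sketch of this stacking procedure follows, with two stated substitutions: scikit-learn's `GradientBoostingClassifier` stands in for XGBoost, and the data are synthetic. It also assumes the meta-model is trained on out-of-fold probabilities so that it never sees predictions made on a base model's own training data.

```python
import numpy as np
from sklearn.ensemble import GradientBoostingClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import cross_val_predict, train_test_split

# Synthetic paired data: SNPs coded 0/1/2 and metabolite intensities
rng = np.random.default_rng(1)
n = 200
y = rng.integers(0, 2, size=n)                      # 1 = resistant
snps = rng.integers(0, 3, size=(n, 50)).astype(float)
snps[:, 0] = np.clip(y * 2 + rng.integers(-1, 2, size=n), 0, 2)  # informative SNP
metab = rng.normal(size=(n, 20))
metab[:, 0] += 1.5 * y                              # informative metabolite

idx_train, idx_test = train_test_split(
    np.arange(n), test_size=0.25, stratify=y, random_state=0)

def oof_and_test_proba(X):
    """Out-of-fold training probabilities plus held-out test probabilities."""
    model = GradientBoostingClassifier(random_state=0)
    oof = cross_val_predict(model, X[idx_train], y[idx_train],
                            cv=5, method="predict_proba")[:, 1]
    model.fit(X[idx_train], y[idx_train])
    return oof, model.predict_proba(X[idx_test])[:, 1]

oof_g, test_g = oof_and_test_proba(snps)
oof_m, test_m = oof_and_test_proba(metab)

# Meta-model learns how to weight the two unimodal probabilities
meta = LogisticRegression()
meta.fit(np.column_stack([oof_g, oof_m]), y[idx_train])
fused = meta.predict_proba(np.column_stack([test_g, test_m]))[:, 1]
auc = roc_auc_score(y[idx_test], fused)
print(meta.coef_, auc)
```

The logistic regression weights act on the probabilities in log-odds space, so a rule such as 0.6/0.4 weighting is best read as an illustration rather than a literal output of the meta-model.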

Visualizations

Title: Early vs Late Fusion Workflow Comparison

Title: Intermediate Fusion via Cross-Attention Mechanism

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions for Plant Multi-Omics Integration

| Item / Solution | Function in Multi-Omics Integration |

|---|---|

| TRI Reagent or Qiagen AllPrep DNA/RNA/Protein Kit | Simultaneous extraction of high-quality RNA, DNA, and protein from a single plant tissue sample, ensuring perfect sample pairing for fusion. |

| Methanol:Chloroform (3:1 v/v) | Standard solvent for metabolite extraction from plant tissues, compatible with subsequent GC-MS or LC-MS analysis. |

| Deuterated Internal Standards (e.g., D-Glucose-d7, Succinic Acid-d4) | Added during metabolomics extraction for mass spectrometry data normalization, enabling quantitative cross-sample comparison. |

| Bradford or BCA Assay Kit | For accurate quantification of total protein concentration post-extraction, required for normalizing proteomics samples prior to LC-MS/MS. |

| DNase I (RNase-free) | Treatment of RNA extracts to remove genomic DNA contamination, crucial for clean transcriptomic (RNA-Seq) data generation. |

| Phase Lock Gel Tubes | Facilitates clean separation of organic and aqueous phases during combined omics extractions, improving yield and purity. |

| SPE Cartridges (C18, HILIC) | Solid-Phase Extraction used to clean-up and fractionate complex plant metabolite extracts pre-MS, reducing ion suppression. |

| Stable Isotope Labeled (SIL) Peptide Standards | Spiked into protein digests for absolute quantification in targeted proteomics (e.g., SRM), allowing precise integration with other omics. |

| Plant Tissue Lysis Beads (e.g., Zirconia/Silica) | For efficient mechanical disruption of tough plant cell walls in a bead mill homogenizer, ensuring complete macromolecule release. |

Within the broader thesis on machine learning for plant multi-omics data analysis, this document provides detailed application notes and protocols for three prominent supervised learning algorithms used for phenotype prediction: Random Forests (RF), Gradient Boosting Machines (GBM), and Support Vector Machines (SVM). The accurate prediction of complex plant phenotypes—such as yield, stress resistance, or metabolite production—from high-dimensional multi-omics data (genomics, transcriptomics, proteomics, metabolomics) is critical for accelerating crop improvement and biopharmaceutical development.

Table 1: Algorithm Comparison for Plant Phenotype Prediction

| Feature | Random Forest (RF) | Gradient Boosting (e.g., XGBoost) | Support Vector Machine (SVM) |

|---|---|---|---|

| Core Principle | Ensemble of decorrelated decision trees via bagging | Ensemble of sequential trees correcting prior errors (boosting) | Finds optimal hyperplane maximizing margin between classes |

| Handling High-Dim. Data | Excellent; built-in feature importance | Excellent; can handle sparse data | Requires careful feature selection; kernel trick helps |

| Typical Accuracy (Recent Benchmarks) | 82-89% (e.g., drought tolerance prediction) | 85-92% (often state-of-the-art) | 78-86% (depends heavily on kernel choice) |

| Overfitting Tendency | Low (due to bagging) | Moderate-High (requires tuning) | Moderate (regularization parameter key) |

| Interpretability | Moderate (feature importance) | Moderate (feature importance) | Low (black-box with kernels) |

| Training Speed | Fast (parallelizable) | Slower (sequential) | Slow for large datasets |

| Key Hyperparameters | n_estimators, max_depth, max_features | n_estimators, learning_rate, max_depth | C (regularization), gamma, kernel type |

Note: Performance metrics are generalized from recent (2023-2024) studies on genomic prediction of traits in Arabidopsis, maize, and wheat. Actual values are dataset-specific.

Experimental Protocols

Protocol 1: End-to-End Workflow for Omics-Based Phenotype Prediction

This protocol outlines the standard pipeline for developing a supervised learning model for a binary trait (e.g., resistant vs. susceptible to a pathogen).

1. Sample Preparation & Omics Data Generation:

- Plant Material: Grow a genetically diverse panel or mapping population under controlled conditions. Apply treatment (e.g., pathogen inoculation, drought) as needed.

- Omics Profiling: Perform genotyping-by-sequencing (GBS) for SNPs, RNA-Seq for transcriptomics, or LC-MS for metabolomics. Ensure appropriate biological replicates.

- Phenotyping: Quantify the target trait with high precision (e.g., disease scoring, biomass measurement, metabolite concentration).

2. Data Preprocessing & Feature Engineering:

- Genomic Data: Filter SNPs for minor allele frequency (>5%) and call rate (>90%). Encode as 0 (homozygous ref), 1 (heterozygous), 2 (homozygous alt).

- Transcriptomic/Metabolomic Data: Apply log-transformation, normalize (e.g., TPM for RNA, sum normalization for metabolites), and impute missing values (e.g., k-NN imputation).

- Feature Selection: For high-dimensional data, apply univariate (ANOVA F-value) or recursive feature elimination to reduce dimensionality before SVM.

3. Model Training & Validation (Critical Step):

- Split data into training (70%), validation (15%), and hold-out test (15%) sets. Preserve class ratios (stratified split).

- For RF/GBM: Use the validation set for hyperparameter tuning via grid or random search. Key metrics: AUC-ROC for binary traits, RMSE for continuous.

- For SVM: Scale all features to zero mean and unit variance. Tune C and gamma via cross-validation on the training set.

- Train final model on combined training+validation set.

4. Model Evaluation & Interpretation:

- Evaluate final model on the untouched test set. Report accuracy, precision, recall, F1-score, and AUC.

- Interpretation: Extract and visualize Gini importance (RF) or gain-based importance (GBM). For SVM with linear kernel, examine coefficient magnitudes.
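Steps 3 and 4 can be sketched for the SVM case as follows. The dataset comes from `make_classification` and the hyperparameter grid is an assumption made for the example. The scaler sits inside the pipeline so that it is fit only on the training portion of each cross-validation fold.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import GridSearchCV, train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

# Synthetic stand-in for an omics feature matrix with a binary trait
X, y = make_classification(n_samples=200, n_features=100, n_informative=10,
                           class_sep=1.5, random_state=0)

# Stratified 70 / 15 / 15 split
X_train, X_tmp, y_train, y_tmp = train_test_split(
    X, y, test_size=0.30, stratify=y, random_state=0)
X_val, X_test, y_val, y_test = train_test_split(
    X_tmp, y_tmp, test_size=0.50, stratify=y_tmp, random_state=0)

# Tune C and gamma by cross-validation on the training set
svm = make_pipeline(StandardScaler(), SVC(kernel="rbf"))
grid = GridSearchCV(
    svm,
    {"svc__C": [0.1, 1, 10], "svc__gamma": ["scale", 0.01]},
    cv=5, scoring="roc_auc")
grid.fit(X_train, y_train)

# Refit the chosen configuration on training + validation data,
# then evaluate once on the untouched test set
final = grid.best_estimator_.fit(
    np.vstack([X_train, X_val]), np.concatenate([y_train, y_val]))
test_auc = roc_auc_score(y_test, final.decision_function(X_test))
print(grid.best_params_, test_auc)
```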

Protocol 2: Comparative Benchmarking Experiment

A standardized protocol to compare RF, GBM, and SVM on the same dataset.

Materials:

- Dataset: Publicly available plant multi-omics dataset with a clear phenotypic label (e.g., IRRI's Rice SNP Dataset, AraPheno).

- Software: Python (scikit-learn, XGBoost, pandas) or R (caret, xgboost, e1071).

Procedure:

- Load and preprocess data as per Protocol 1, Step 2.

- Implement 5-fold stratified cross-validation scheme.

- For each algorithm, run a predefined hyperparameter search in each cross-validation fold:

- RF: n_estimators: [100, 200, 500]; max_depth: [5, 10, None].

- GBM: n_estimators: 100; learning_rate: [0.01, 0.1]; max_depth: [3, 5].

- SVM: C: [0.1, 1, 10]; gamma: ['scale', 'auto']; kernel: ['linear', 'rbf'].

- Record the mean and standard deviation of the cross-validation AUC for each algorithm.

- Train final models with best params on full training set. Evaluate on a single, held-out test set.

- Perform statistical testing (e.g., paired t-test on CV folds) to determine if performance differences are significant.
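The core of the benchmarking loop is sketched below, under simplifying assumptions: synthetic data, fixed hyperparameters in place of the per-fold search, and scikit-learn's `GradientBoostingClassifier` standing in for XGBoost. All three models are scored on the same folds so that the fold-wise AUCs are paired.

```python
import numpy as np
from scipy.stats import ttest_rel
from sklearn.datasets import make_classification
from sklearn.ensemble import GradientBoostingClassifier, RandomForestClassifier
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

X, y = make_classification(n_samples=150, n_features=50, n_informative=8,
                           random_state=0)

# One shared fold definition keeps the comparison paired
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)

models = {
    "RF": RandomForestClassifier(n_estimators=100, random_state=0),
    "GBM": GradientBoostingClassifier(n_estimators=100, random_state=0),
    "SVM": make_pipeline(StandardScaler(), SVC(C=1, gamma="scale")),
}
fold_auc = {name: cross_val_score(model, X, y, cv=cv, scoring="roc_auc")
            for name, model in models.items()}

for name, aucs in fold_auc.items():
    print(f"{name}: {aucs.mean():.3f} +/- {aucs.std():.3f}")

# Paired t-test on fold-wise AUCs (RF vs. GBM shown as one comparison)
t_stat, p_value = ttest_rel(fold_auc["RF"], fold_auc["GBM"])
print(t_stat, p_value)
```

Cross-validation folds share training data, so the fold scores are not independent. The resulting p-value is best treated as a rough guide rather than a strict significance test.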

Visualization

Supervised Learning Workflow for Phenotype Prediction

Algorithm Comparison for Omics Data Input

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions & Materials

| Item | Function in Phenotype Prediction Pipeline | Example/Note |

|---|---|---|

| High-Throughput Sequencer | Generate genomic (DNA-Seq) or transcriptomic (RNA-Seq) raw data. | Illumina NovaSeq, PacBio Sequel II. Essential for feature generation. |

| Mass Spectrometer | Generate proteomic or metabolomic profile data. | LC-MS/MS systems (e.g., Thermo Q-Exactive). Quantifies non-genomic molecular traits. |

| DNA/RNA Extraction Kit | High-quality nucleic acid isolation from plant tissue. | Must be optimized for specific tissue (leaf, root, seed). Purity critical for sequencing. |

| Normalization & Imputation Software | Preprocess raw omics data into analyzable matrices. | R/Bioconductor packages (DESeq2, limma), Python (scikit-learn SimpleImputer). |

| Machine Learning Library | Implement RF, GBM, SVM algorithms with efficient computation. | Python: scikit-learn, XGBoost, LightGBM. R: caret, tidymodels. |

| High-Performance Computing (HPC) Cluster | Handle computationally intensive model training and hyperparameter tuning. | Necessary for large-scale omics data (n>1000, p>10,000). Cloud solutions (AWS, GCP) are alternatives. |

| Benchmarked Public Dataset | For method validation and comparative benchmarking. | Resources like AraPheno (Arabidopsis), Rice SNP-Seek Database, Panzea (Maize). |

Application Notes for Plant Multi-Omics Analysis

Unsupervised learning is foundational for exploring high-dimensional plant multi-omics data (genomics, transcriptomics, proteomics, metabolomics) without a priori labels. It enables hypothesis generation, batch effect detection, and the discovery of novel metabolic pathways or stress-response clusters.

Quantitative Comparison of Dimensionality Reduction Techniques

The following table summarizes key characteristics of PCA, t-SNE, and UMAP for plant omics data.

Table 1: Comparison of Dimensionality Reduction Methods in Plant Omics

| Feature | PCA | t-SNE | UMAP |

|---|---|---|---|

| Primary Goal | Maximize variance; linear projection | Preserve local pairwise distances; non-linear | Preserve local & global structure; non-linear |

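| Computational Speed | Fast (O(min(n²p, np²)) for a full SVD on n samples and p features; lower with truncated or randomized solvers) | Slow (O(n²) exact; O(n log n) with the Barnes-Hut approximation) | Faster than t-SNE (empirically about O(n^1.2)) |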

| Scalability | Excellent for large n (samples) | Poor for >10,000 samples | Good for large datasets |

| Preserved Structure | Global covariance structure | Local neighbor relationships (perplexity-sensitive) | Local connectivity & approximate global structure |

| Deterministic | Yes | No (random initialization) | Largely reproducible with fixed random seed |

| Typical Use in Plant Research | Initial data QC, batch correction, noise filtering | Visualizing cell-types or treatment clusters in scRNA-seq | Integrated multi-omics visualization, trajectory inference |

| Key Hyperparameter | Number of components | Perplexity (~5-50), learning rate | n_neighbors (∼5-50), min_dist (∼0.1-0.5) |

| Data Type Suitability | All omics types; linear relationships | Metabolite profiles, single-cell data | Complex integrative maps, large-scale genotyping |

Experimental Protocols

Protocol 2.1: Pre-processing Pipeline for Plant Multi-Omics Clustering

Objective: To standardize raw multi-omics data for robust unsupervised analysis. Input: Raw count matrices (RNA-seq), peak intensities (MS-based proteomics/metabolomics), or variant calls. Output: Normalized, scaled, and batch-corrected feature matrix.

- Quality Control & Filtering:

- Transcriptomics/Proteomics: Remove genes/proteins with zero counts in >90% of samples. For RNA-seq, apply a count-per-million (CPM) or reads-per-kilobase-million (RPKM) filter.

- Metabolomics: Remove features with >30% missing values. Impute remaining missing values using k-nearest neighbors (k=5) or minimum value imputation.

- Normalization:

- Between-Sample: Apply trimmed mean of M-values (TMM) for RNA-seq; median fold change for proteomics; probabilistic quotient normalization (PQN) for metabolomics.

- Variance Stabilization: Use log2 transformation (for RNA-seq with offset, e.g., log2(count+1)) or pareto scaling for metabolomics.

- Integration & Batch Correction:

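- Use Harmony or ComBat to correct for technical batches (e.g., sequencing run, harvest day) while preserving biological variance. Harmony operates on a low-dimensional embedding, so apply it after PCA (on the top 50 PCs); ComBat adjusts the normalized feature matrix itself, so apply it before PCA.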

- Feature Selection (for high-dimensional data):

- Select top ~5000 highly variable genes (HVGs) using the FindVariableFeatures method (Seurat) or select metabolites with coefficient of variation >20%.
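A simplified version of the filtering, normalization, and feature-selection steps for RNA-seq counts is sketched below. It assumes plain CPM scaling in place of TMM and a variance ranking in place of Seurat's FindVariableFeatures, and it omits batch correction. The function name and thresholds are illustrative.

```python
import numpy as np

def preprocess_counts(counts, min_cpm=1.0, min_frac=0.5, n_top=2000):
    """Filter, log-transform, select variable genes, and z-score.

    counts: (n_samples, n_genes) raw read counts.
    Returns the scaled matrix and the original indices of the kept genes.
    """
    # Library-size normalization to counts per million
    cpm = counts / counts.sum(axis=1, keepdims=True) * 1e6
    # Keep genes expressed above min_cpm in more than min_frac of samples
    keep = (cpm > min_cpm).mean(axis=0) > min_frac
    log_cpm = np.log2(cpm[:, keep] + 1.0)
    # Rank the remaining genes by variance and keep the top n_top
    top = np.argsort(log_cpm.var(axis=0))[::-1][:n_top]
    selected = log_cpm[:, top]
    scaled = (selected - selected.mean(axis=0)) / (selected.std(axis=0) + 1e-9)
    return scaled, np.flatnonzero(keep)[top]

# Toy counts: 12 samples x 500 genes; the first 50 genes are never detected
rng = np.random.default_rng(0)
counts = rng.negative_binomial(5, 0.1, size=(12, 500)).astype(float)
counts[:, :50] = 0.0

scaled, gene_idx = preprocess_counts(counts, n_top=100)
print(scaled.shape, gene_idx[:5])
```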

Protocol 2.2: Dimensionality Reduction and Cluster Validation Workflow

Objective: To project data into 2D/3D space and identify stable biological clusters. Input: Pre-processed feature matrix from Protocol 2.1. Output: Cluster assignments, visualization plots, and validation metrics.

- Dimensionality Reduction:

- PCA: Center the data. Perform PCA using singular value decomposition (SVD). Retain PCs explaining >80% cumulative variance.

- t-SNE: Use PCA output (first 30-50 PCs) as input. Set perplexity=30, learning rate=200, iterations=1000. Run multiple times with different seeds to assess stability.

- UMAP: Use same PCA input as t-SNE. Set n_neighbors=15, min_dist=0.2, metric='euclidean'.

- Clustering:

- Apply k-means or Gaussian Mixture Models on PCA-reduced space, or use graph-based methods (e.g., Leiden, Louvain) on a k-nearest neighbor graph built from UMAP/PCA embeddings.

- Determine optimal clusters (k): Use the elbow method (within-cluster sum of squares), silhouette score, or gap statistic across a range of k (e.g., 2-10).

- Validation & Biological Interpretation:

- Stability: Use Jaccard similarity index on cluster assignments from bootstrapped sub-samples.

- Enrichment: Perform Gene Ontology (GO) or Kyoto Encyclopedia of Genes and Genomes (KEGG) pathway enrichment analysis for genes/proteins/metabolites in each cluster.
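The PCA and cluster-number selection steps can be sketched as below, using k-means and the silhouette score on synthetic data with three simulated groups. The t-SNE/UMAP projections and the bootstrap stability check are left out to keep the example short.

```python
import numpy as np
from sklearn.cluster import KMeans
from sklearn.decomposition import PCA
from sklearn.metrics import silhouette_score

# Synthetic feature matrix: 3 groups of 40 samples x 100 features
rng = np.random.default_rng(0)
centers = rng.normal(scale=4.0, size=(3, 100))
X = np.vstack([c + rng.normal(size=(40, 100)) for c in centers])

# A float n_components keeps the PCs needed to reach that share of variance
pca = PCA(n_components=0.80, svd_solver="full")
Z = pca.fit_transform(X)  # PCA centers the data internally

# Scan candidate cluster numbers and score each partition
silhouette = {}
for k in range(2, 7):
    labels = KMeans(n_clusters=k, n_init=10, random_state=0).fit_predict(Z)
    silhouette[k] = silhouette_score(Z, labels)

best_k = max(silhouette, key=silhouette.get)
print(Z.shape, best_k)
```

On real data the silhouette curve is often flatter than in this toy case, which is why the protocol pairs it with stability and enrichment checks.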

Diagrams

Diagram 1: Unsupervised Multi-Omics Analysis Workflow

Diagram 2: Comparative Model of PCA vs. UMAP Mechanism

The Scientist's Toolkit

Table 2: Essential Research Reagents & Tools for Unsupervised Plant Omics Analysis

| Item / Tool | Category | Function in Analysis |

|---|---|---|

| R (v4.3+) / Python (v3.10+) | Programming Language | Primary environment for statistical computing and algorithm implementation. |

| Seurat (R), Scanpy (Python) | Software Package | Integrated toolkit for single-cell (and bulk) omics quality control, normalization, clustering, and visualization. |

| FactoMineR & factoextra (R) | Software Package | Comprehensive PCA and multivariate analysis suite with enhanced visualization. |

| UMAP-learn (Python), uwot (R) | Algorithm Library | Efficient implementation of the UMAP algorithm for non-linear dimensionality reduction. |

| Harmony (R/Python) | Integration Tool | Fast integration of multiple datasets for batch correction without compromising biology. |

| cluster (R), scikit-learn (Python) | Algorithm Library | Provides essential clustering algorithms (k-means, hierarchical, DBSCAN) and validation metrics (silhouette). |

| MultiAssayExperiment (R), MuData (Python) | Data Structure | Container for synchronized multi-omics data, enabling integrative unsupervised analysis. |

| MetaboAnalystR | Software Package | Specialized toolkit for metabolomics data processing, normalization, and pattern discovery. |

| High-Performance Computing (HPC) Cluster | Infrastructure | Essential for processing large-scale omics datasets (e.g., thousands of plant single-cell libraries). |

| KEGG/PlantCyc Database | Biological Database | For functional annotation and pathway enrichment analysis of discovered clusters. |

In the thesis "Machine learning for plant multi-omics data analysis research," the integration of genomics, transcriptomics, proteomics, and metabolomics data presents a complex, high-dimensional challenge. Deep learning architectures—Convolutional Neural Networks (CNNs), Recurrent Neural Networks (RNNs), and Graph Neural Networks (GNNs)—are pivotal for extracting patterns from sequence and network-based omics data. These models enable the prediction of phenotypic traits, the identification of key genetic regulators, and the modeling of molecular interaction networks, accelerating crop improvement and phytochemical drug discovery.

Application Notes and Quantitative Comparison

The following table summarizes the core application, strengths, and data input types for each architecture within plant multi-omics research.

Table 1: Deep Learning Architectures for Plant Multi-Omics Data

| Architecture | Primary Data Type in Plant Omics | Key Applications | Typical Performance Metrics (Range from Recent Studies) |

|---|---|---|---|

| CNN | 1D Biological Sequences (DNA, Protein), 2D Spectra (MS, NMR) | Promoter region identification, Protein family classification, Spectral peak detection | Accuracy: 88-96% (Genomic sequence classification); AUC-ROC: 0.92-0.98 (TF binding site prediction) |

| RNN/LSTM/GRU | Time-series/Ordered Sequence Data (Gene expression time-courses, Metabolic pathways) | Dynamic gene expression forecasting, Metabolic flux prediction, Phenology modeling | RMSE: 0.15-0.30 (normalized expression forecasting); Sequence prediction accuracy: 85-94% |

| GNN | Network Data (Protein-Protein Interaction, Co-expression Networks, Metabolic Networks) | Gene function prediction, Prioritizing candidate genes, Integrative multi-omics analysis | Macro F1-Score: 0.78-0.91 (gene function prediction); AUPRC: 0.80-0.95 (disease gene identification) |

Experimental Protocols

Protocol 3.1: CNN for Plant Promoter Sequence Classification

Objective: Classify DNA sequences as promoter or non-promoter regions.

- Data Preparation: Obtain genomic sequences (e.g., from Arabidopsis thaliana TAIR). Extract 250bp upstream of transcription start sites (positive set) and random genomic fragments (negative set). One-hot encode sequences (A=[1,0,0,0], C=[0,1,0,0], etc.).

- Model Architecture: Implement a 1D CNN with:

- Input Layer: (250, 4)

- Conv1D Layers: Two layers with 64 and 128 filters, kernel size=6, ReLU activation.

- Pooling: MaxPooling1D after each Conv layer (pool_size=2).

- Dense Layers: Flatten layer, followed by Dense(128, ReLU), Dropout(0.5), and a final Dense(1, sigmoid) output.

- Training: Use binary cross-entropy loss, Adam optimizer (lr=0.001), batch size=32, train for 50 epochs with 20% validation split.

- Validation: Evaluate on held-out test set using Accuracy, Precision, Recall, and AUC-ROC.
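The encoding step and the tensor shapes implied by the architecture can be checked with a short sketch. It assumes the base order A, C, G, T, all-zero rows for ambiguous bases such as N, and Conv1D layers with 'valid' padding and stride 1; none of these are specified in the protocol. The function names are hypothetical.

```python
import numpy as np

BASES = "ACGT"  # assumed column order: A, C, G, T

def one_hot_encode(seq, length=250):
    """Encode a DNA string as a (length, 4) array.

    Unknown bases (e.g., N) become all-zero rows; sequences shorter
    than `length` are zero-padded on the right.
    """
    encoded = np.zeros((length, 4), dtype=np.float32)
    for i, base in enumerate(seq.upper()[:length]):
        j = BASES.find(base)
        if j >= 0:
            encoded[i, j] = 1.0
    return encoded

def conv_pool_lengths(length=250, kernel_size=6, pool_size=2, n_blocks=2):
    """Sequence length after each Conv1D + MaxPooling1D block."""
    lengths = []
    for _ in range(n_blocks):
        length = length - kernel_size + 1  # Conv1D, 'valid' padding, stride 1
        length = length // pool_size       # MaxPooling1D
        lengths.append(length)
    return lengths

x = one_hot_encode("ACGTN", length=8)
lengths = conv_pool_lengths()
flattened_units = lengths[-1] * 128  # 128 filters in the second Conv1D layer
print(x.shape, lengths, flattened_units)
```

Under these assumptions the Flatten layer feeds 58 x 128 values into the first Dense layer.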

Protocol 3.2: LSTM for Gene Expression Time-Series Forecasting

Objective: Predict future expression levels of stress-response genes.

- Data Preparation: Use RNA-seq time-course data (e.g., under drought stress). Normalize expression values (TPM) per gene using z-score. For each gene, create sequential samples with a look-back window of 5 time points to predict the 6th.

- Model Architecture: Implement a stacked LSTM:

- Input Layer: (look_back=5, num_features=1)

- LSTM Layers: Two LSTM layers with 50 and 100 units, return_sequences=True for both so that the TimeDistributed layer below receives one output per time step.

- Dense Layers: TimeDistributed(Dense(25, ReLU)), Flatten(), Dense(1).

- Training: Use Mean Squared Error (MSE) loss, RMSprop optimizer. Train on 70% of series, use 15% for validation, 15% for testing.

- Validation: Assess using Root Mean Square Error (RMSE) and Mean Absolute Error (MAE) on the test set.
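The look-back windowing in the data preparation step can be sketched as follows. The function name `make_windows` is hypothetical, and the output shape follows the (samples, look_back, num_features) layout expected by recurrent layers.

```python
import numpy as np

def make_windows(series, look_back=5):
    """Turn one time-ordered expression series into supervised samples.

    Returns X with shape (n_windows, look_back, 1) and y with shape
    (n_windows,), where each y value follows its window in time.
    """
    series = np.asarray(series, dtype=float)
    n_windows = len(series) - look_back
    if n_windows <= 0:
        raise ValueError("series must be longer than look_back")
    X = np.stack([series[i:i + look_back] for i in range(n_windows)])
    y = series[look_back:]
    return X[..., np.newaxis], y

# Toy series with 8 time points: values 0..7
X, y = make_windows(np.arange(8.0), look_back=5)
print(X.shape, y)
```

Windows drawn from the same gene overlap heavily, so splitting by whole series (as the training step describes) rather than by individual windows helps keep test data out of training.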

Protocol 3.3: GNN for Protein Function Prediction in Plants

Objective: Annotate unknown proteins in a Protein-Protein Interaction (PPI) network.

- Data Preparation: Construct a graph G=(V,E) from a plant PPI database (e.g., from STRING). Nodes V are proteins, annotated with multi-omics features (e.g., sequence embeddings from ProtCNN, expression profiles). Edges E are interactions. Split nodes into training/validation/test sets (70/15/15).

- Model Architecture: Implement a Graph Convolutional Network (GCN):

- Input: Node feature matrix X and normalized adjacency matrix Â.

- GCN Layers: Two GCNConv layers (from PyTorch Geometric) with ReLU activation and dropout (0.3). The first layer maps features to 256 dimensions, the second to 128.

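- Classification: A linear layer applied to each node embedding, mapping it to the number of functional classes. Protein annotation is a node-level task, so no global pooling readout is used (pooling would collapse the whole network into a single prediction).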

- Training: Use cross-entropy loss, Adam optimizer. Train for 200 epochs using only training set node labels.

- Validation: Evaluate on masked test nodes using Macro F1-Score and AUPRC.
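To make the propagation rule concrete, the sketch below computes the normalized adjacency matrix and a two-layer GCN forward pass in plain NumPy on a toy four-protein graph. It assumes the standard self-loop-augmented symmetric normalization, D^(-1/2)(A + I)D^(-1/2). The random weights, the 8-dimensional input features, and the three output classes are placeholders, not values from the protocol. The sketch outputs one score vector per protein, which is what node-level annotation requires; a pooled readout would instead give a single prediction per graph.

```python
import numpy as np

def normalize_adjacency(A: np.ndarray) -> np.ndarray:
    """Return D^{-1/2} (A + I) D^{-1/2}, with self-loops added before normalization."""
    A_tilde = A + np.eye(A.shape[0])
    deg = A_tilde.sum(axis=1)
    d_inv_sqrt = 1.0 / np.sqrt(deg)
    return A_tilde * d_inv_sqrt[:, None] * d_inv_sqrt[None, :]

def gcn_layer(A_hat: np.ndarray, H: np.ndarray, W: np.ndarray) -> np.ndarray:
    """One graph convolution: aggregate neighbor features, project, apply ReLU."""
    return np.maximum(A_hat @ H @ W, 0.0)

rng = np.random.default_rng(0)

# Toy undirected PPI graph with edges 0-1, 1-2, 2-3 (illustrative only).
A = np.array([[0, 1, 0, 0],
              [1, 0, 1, 0],
              [0, 1, 0, 1],
              [0, 0, 1, 0]], dtype=float)
X = rng.normal(size=(4, 8))             # placeholder node features
W1 = rng.normal(size=(8, 256)) * 0.1    # first layer: features -> 256
W2 = rng.normal(size=(256, 128)) * 0.1  # second layer: 256 -> 128
W_out = rng.normal(size=(128, 3)) * 0.1 # linear head: 128 -> 3 hypothetical classes

A_hat = normalize_adjacency(A)
H = gcn_layer(A_hat, gcn_layer(A_hat, X, W1), W2)
logits = H @ W_out                      # one row of class scores per protein
```

In a real pipeline the same computation is provided by `GCNConv` in PyTorch Geometric, with weights learned by backpropagation and dropout applied between layers.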

Visualization Diagrams

Title: CNN Workflow for Plant Promoter Classification

Title: GNN for Protein Function Prediction Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools and Platforms for Implementing Deep Learning in Plant Multi-Omics

| Item | Category | Function in Research | Example/Provider |

|---|---|---|---|

| BioBERT | Pre-trained Model | Provides context-aware embeddings for biomedical text; can be fine-tuned on plant literature to improve downstream task performance. | Hugging Face Model Hub / DMIS Lab |

| PyTorch Geometric | Software Library | Specialized library for easy implementation of GNNs on irregular graph data like PPI networks. | PyG Team (pyg.org) |

| TensorFlow/Keras | Software Framework | High-level API for rapid prototyping of CNN and RNN models for sequence and spectral data. | Google (tensorflow.org) |

| One-hot Encoding | Data Preprocessing | Converts categorical sequence data (DNA, protein) into a binary matrix format digestible by CNNs/RNNs. | Custom script / sklearn |

| Graphviz | Visualization Tool | Renders clear diagrams of neural network architectures and experimental workflows for publications. | Graphviz.org |

| CUDA-enabled GPU | Hardware | Accelerates the training of deep neural networks, which is essential for large omics datasets. | NVIDIA (e.g., RTX 4090, A100) |

| TPM/Normalized Counts | Processed Data | Standardized gene expression values required as clean input for time-series forecasting models (RNNs). | Output from RNA-seq pipelines (e.g., Salmon, Kallisto) |

| Plant PPI Database | Curated Data Source | Provides the foundational network structure (edges) for GNN-based protein function prediction. | STRING, PLAZA, Plant-GPA |

Application Note 1: Predicting Abiotic Stress Resistance in Oryza sativa

Thesis Context: This case study demonstrates the application of a Random Forest Regressor model within a machine learning pipeline for analyzing transcriptomic and metabolomic data to predict composite stress resistance scores in rice.

Objective: To predict a quantitative stress resistance index (SRI) in rice cultivars using integrated omics data, enabling the prioritization of breeding lines for saline and drought-prone environments.

Data Integration & Model Pipeline:

- Data Sources: RNA-seq data (TPM values) and LC-MS-based metabolomic profiles (peak intensities) from leaf tissues of 150 rice varieties under control and stress conditions.

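- Feature Engineering: Combined ~20,000 gene expression features and ~500 metabolite features. Dimensionality reduction was performed using sPLS (sparse partial least squares regression, mixOmics R package) to derive 50 latent components that maximize covariance between the omics features and the SRI. The regression variant is used because the SRI is continuous; sPLS-DA expects class labels.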

- Model Training: A Random Forest model (scikit-learn) was trained on 70% of the data (105 varieties) using the 50 latent components as input features to predict the continuous SRI.

- Validation: The model was tested on the remaining 30% hold-out set (45 varieties). Performance was evaluated using R² and Root Mean Square Error (RMSE).
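The split and the two validation metrics can be written out explicitly. The sketch below is illustrative only: the seed, the helper names `rmse` and `r_squared`, and the four example SRI values are assumptions, not data from the study.

```python
import numpy as np

def rmse(y_true, y_pred) -> float:
    """Root mean square error between observed and predicted values."""
    y_true, y_pred = np.asarray(y_true, float), np.asarray(y_pred, float)
    return float(np.sqrt(np.mean((y_true - y_pred) ** 2)))

def r_squared(y_true, y_pred) -> float:
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    y_true, y_pred = np.asarray(y_true, float), np.asarray(y_pred, float)
    ss_res = np.sum((y_true - y_pred) ** 2)
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)
    return float(1.0 - ss_res / ss_tot)

# 70/30 split of 150 varieties: shuffle indices once with a fixed seed.
rng = np.random.default_rng(0)
order = rng.permutation(150)
train_idx, test_idx = order[:105], order[105:]

# Made-up observed and predicted SRI values, for demonstration only.
sri_true = [1.0, 2.0, 3.0, 4.0]
sri_pred = [1.1, 1.9, 3.2, 3.8]
test_r2 = r_squared(sri_true, sri_pred)
test_rmse = rmse(sri_true, sri_pred)
```

Note that any supervised dimensionality reduction should be fitted on the training indices only and then applied to the test indices. Fitting it on all 150 varieties before splitting would let test-set information leak into the features.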

Quantitative Results:

Table 1: Model Performance Metrics for Stress Resistance Prediction

| Model | Training R² | Test R² | Test RMSE | Key Predictive Features (Top 5) |

|---|---|---|---|---|

| Random Forest | 0.92 ± 0.03 | 0.81 ± 0.05 | 0.89 | Proline, OsNAC6 exp., Raffinose, OsDREB2A exp., GABA |

Protocol: Integrated Omics Data Preprocessing for ML

Materials: RNA-seq raw count files, LC-MS raw peak area files, phenotypic SRI values, R environment with mixOmics, DESeq2, Python with pandas, scikit-learn.

Procedure:

- Transcriptomic Processing: Import raw counts into R. Normalize using DESeq2's median-of-ratios method, then apply a log2(x + 1) transform to the normalized counts for model stability (DESeq2 produces normalized counts, not TPM).

- Metabolomic Processing: Import pre-aligned LC-MS peak table. Perform missing value imputation using k-Nearest Neighbors (k=5). Apply Pareto scaling (mean-centered and divided by the square root of the standard deviation).

- Data Integration: Using mixOmics, create a combined data matrix X with samples as rows and features from both omics as columns. The response vector Y is the SRI.

- Dimensionality Reduction: Execute spls(X, Y, ncomp = 50, keepX = rep(200, 50)) to select the 200 most relevant features per component. The regression function spls is used because the SRI is continuous; splsda expects a categorical response. Extract the 50-component score matrix ($variates$X) as the final feature set for machine learning.

- Model Implementation: In Python, train a RandomForestRegressor (n_estimators=500, max_depth=15) on the training set. Optimize hyperparameters via grid search with 5-fold cross-validation.
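The scaling steps in the procedure above are simple enough to sketch directly. The example below is a minimal NumPy illustration of the log transform and Pareto scaling, using the sample standard deviation (ddof=1) and a made-up three-sample peak table and count matrix. The function names are hypothetical, and constant columns are left unscaled to avoid division by zero.

```python
import numpy as np

def log2_transform(values: np.ndarray) -> np.ndarray:
    """log2(x + 1), which compresses the dynamic range of expression values."""
    return np.log2(values + 1.0)

def pareto_scale(X: np.ndarray) -> np.ndarray:
    """Column-wise Pareto scaling: (x - mean) / sqrt(standard deviation)."""
    mean = X.mean(axis=0)
    scale = np.sqrt(X.std(axis=0, ddof=1))
    scale[scale == 0] = 1.0  # constant features: center only
    return (X - mean) / scale

# Illustrative LC-MS peak table: 3 samples x 2 metabolites (not real data).
peaks = np.array([[100.0, 10.0],
                  [200.0, 10.0],
                  [300.0, 10.0]])
metab_scaled = pareto_scale(peaks)

# Illustrative normalized expression matrix: 3 samples x 2 genes.
expr_log = log2_transform(np.array([[0.0, 3.0],
                                    [1.0, 7.0],
                                    [3.0, 15.0]]))

# Combined matrix: samples as rows, features from both omics as columns.
combined = np.hstack([expr_log, metab_scaled])
```

Pareto scaling divides by the square root of the standard deviation rather than the standard deviation itself, so high-abundance metabolites keep somewhat more weight than under unit-variance scaling while still being down-weighted.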

Diagram Title: ML Pipeline for Stress Resistance Prediction

Application Note 2: Mapping Metabolic Pathway Activity in Solanum lycopersicum

Thesis Context: This study employs a Graph Convolutional Network (GCN) to leverage the inherent graph structure of metabolic networks (KEGG) to predict pathway activity states from metabolomics data.

Objective: To move beyond individual metabolite markers and predict the systemic activity level (e.g., flux score) of key metabolic pathways, such as the flavonoid biosynthesis pathway, in tomato fruit under different growth conditions.

Model Architecture & Workflow:

- Graph Construction: The KEGG pathway for flavonoid biosynthesis (map00941) was represented as a directed graph where nodes are metabolites (KEGG compounds) and edges are enzymatic reactions.

- Node Feature Initialization: Each metabolite node was encoded with a feature vector derived from LC-MS relative abundance data.

- GCN Training: A two-layer GCN (PyTorch Geometric) was trained to propagate and transform node features across the network. The target was a pathway activity score derived from transcript levels of key pathway enzymes (e.g., CHS, F3H).

- Prediction: The model outputs a probability score for the pathway being "highly active," enabling classification of samples based on metabolic flux.

Quantitative Results:

Table 2: GCN Performance in Predicting Flavonoid Pathway Activity State

| Model | Accuracy | Precision | Recall | AUC-ROC |

|---|---|---|---|---|

| GCN | 94.2% | 0.93 | 0.96 | 0.98 |

| Random Forest (Baseline) | 87.5% | 0.86 | 0.89 | 0.94 |

Protocol: Graph Construction & GCN Training for Metabolic Pathways

Materials: KEGG API or KGML file, Metabolite relative abundance matrix, Pathway activity labels (High/Low), Python with torch, torch_geometric, networkx, biokegg.

Procedure:

- Network Parsing: Use the biokegg package to retrieve the KGML file for the target pathway. Parse the file to extract metabolite nodes and reaction edges. Create an adjacency matrix or edge index list for PyTorch Geometric.

- Node Feature Assignment: Align your experimental metabolomics dataset (e.g., peak intensities for specific compounds) with the KEGG Compound IDs (C numbers) in the graph. Missing metabolites are assigned a zero vector. Normalize features per sample.

- Data Preparation: Format the graph data into a Data object (PyTorch Geometric) with attributes: x (node features), edge_index (graph structure), y (pathway activity label per sample-graph).

- GCN Model Definition: Define a GCN with two convolutional layers (GCNConv) and ReLU activation. The first layer maps input features to 16 dimensions, the second to the number of output classes (2). A global mean pooling layer aggregates node features into a graph-level representation for classification.

- Training Loop: Train the model using CrossEntropyLoss and the Adam optimizer for 200 epochs. Perform batch training if multiple samples/graphs are used.
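The graph-construction steps above can be illustrated with plain Python and NumPy before any PyTorch Geometric code is written. In this sketch the compound identifiers (`cpd_A` to `cpd_D`) are placeholders standing in for KEGG C numbers, the reaction list and abundances are invented, and the single-feature-per-node layout is an assumption.

```python
import numpy as np

# Hypothetical reaction edges (substrate -> product); real edges come from the KGML file.
reactions = [("cpd_A", "cpd_B"), ("cpd_B", "cpd_C"), ("cpd_C", "cpd_D")]

# Hypothetical relative abundances for one sample; cpd_C was not detected.
abundance = {"cpd_A": 1.2, "cpd_B": 0.4, "cpd_D": 2.0}

# Assign each metabolite a stable integer node index.
nodes = sorted({compound for edge in reactions for compound in edge})
index = {compound: i for i, compound in enumerate(nodes)}

# Edge list in COO layout: row 0 holds source nodes, row 1 holds target nodes.
edge_index = np.array(
    [[index[src] for src, _ in reactions],
     [index[dst] for _, dst in reactions]],
    dtype=np.int64,
)

# Node feature matrix: one abundance value per node, zero for missing metabolites.
x = np.array([[abundance.get(c, 0.0)] for c in nodes], dtype=np.float32)

# Global mean pooling: average over nodes to get one graph-level vector.
graph_embedding = x.mean(axis=0)
```

Converting `x` and `edge_index` to tensors gives the inputs expected by a PyTorch Geometric `Data` object. Note that zero-filling makes an undetected metabolite indistinguishable from one with zero abundance; adding a binary "measured" indicator as a second feature is one way to keep that distinction.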

Diagram Title: GCN on Metabolic Network for Pathway Prediction

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for Plant Multi-Omics ML Research

| Item | Function & Application |

|---|---|

| RNeasy Plant Mini Kit (Qiagen) | High-quality total RNA extraction for transcriptomics (RNA-seq, qPCR). Essential for generating gene expression feature data. |

| C18 Solid-Phase Extraction (SPE) Columns | Clean-up and fractionation of complex plant metabolite extracts prior to LC-MS, reducing matrix effects and improving data quality. |

| Iso-Seq Library Prep Kit (PacBio) | For generating full-length transcript sequences, improving genome annotation and providing accurate references for RNA-seq alignment in non-model species. |

| DIA-NN Software Package | Data-independent acquisition (DIA) mass spectrometry data processing. Enables reproducible, high-throughput proteomic and metabolomic feature extraction. |

| mixOmics R Package | Provides integrative multivariate methods (e.g., sPLS-DA, DIABLO) for dimension reduction and feature selection from multiple omics datasets, ideal for pre-processing before ML. |

| PyTorch Geometric Library | A specialized library for deep learning on graph-structured data. Critical for implementing GCNs on biological networks (e.g., metabolic, PPI). |

| Plant Preservative Mixture (PPM) | Prevents microbial contamination in plant tissue cultures, ensuring the integrity of samples destined for metabolomic and phenomic analysis. |

Solving Real-World Problems: Overcoming Data and Model Limitations in Plant ML

In plant multi-omics research, integrating genomics, transcriptomics, proteomics, and metabolomics data results in datasets where the number of features (p) vastly exceeds the number of samples (n). This high dimensionality leads to overfitting, spurious correlations, and increased computational cost, a set of problems collectively known as the "Curse of Dimensionality." Two primary strategies to mitigate this are Feature Selection (FS) and Feature Extraction (FE). The choice between them depends on the research goal: biomarker discovery (prioritizing interpretability) vs. predictive model optimization (prioritizing performance).

Table 1: Comparative Analysis of Feature Selection vs. Feature Extraction

| Aspect | Feature Selection | Feature Extraction |

|---|---|---|

| Core Principle | Selects a subset of original features based on statistical importance. | Creates new, transformed features from original data via mathematical projection. |

| Interpretability | High. Retains biological meaning (e.g., gene GRMZM2G000123). | Low. New features (e.g., PC1) are composites without direct biological labels. |

| Primary Goal | Identify causal/mechanistic biomarkers; generate hypotheses. | Maximize variance or predictive signal; improve model performance. |

| Common Methods | ANOVA, LASSO, mRMR, Random Forest Importance. | PCA, PLS-DA, Autoencoders, t-SNE, UMAP. |

| Information Loss | Discards entire features deemed irrelevant. | Distributes information across new features; loss is controlled. |

| Best for Thesis Context | Identifying key genes/metabolites for drought resistance. | Classifying plant phenotypes from complex spectral or metabolomic data. |
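The contrast in the table can be seen on a small synthetic matrix. The sketch below is illustrative only: it uses a simple correlation filter as a stand-in for the selection methods listed (ANOVA, LASSO, and so on) and PCA computed via SVD as the extraction method. The data are random and the dimensions are arbitrary.

```python
import numpy as np

rng = np.random.default_rng(42)
n_samples, n_features, k = 20, 100, 5       # p >> n, as in typical omics data

X = rng.normal(size=(n_samples, n_features))  # synthetic expression matrix
y = np.array([0.0, 1.0] * 10)                 # synthetic binary phenotype

Xc = X - X.mean(axis=0)
yc = y - y.mean()

# Feature selection: keep the k original columns most correlated with y.
corr = (Xc.T @ yc) / (np.linalg.norm(Xc, axis=0) * np.linalg.norm(yc))
selected = np.sort(np.argsort(-np.abs(corr))[:k])
X_selected = X[:, selected]                   # columns are still individual genes

# Feature extraction: project onto the top k principal components (PCA via SVD).
U, S, Vt = np.linalg.svd(Xc, full_matrices=False)
X_extracted = Xc @ Vt[:k].T                   # each column mixes all 100 genes

genes_in_first_component = int(np.count_nonzero(Vt[0]))
```

Both outputs have shape (20, 5), but `selected` maps back to five named features, whereas every principal component carries non-zero loadings on essentially all input features. In practice either step should be fitted inside each cross-validation fold, since selecting features on the full dataset inflates accuracy estimates.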

Table 2: Performance Metrics on a Public Plant Omics Dataset (e.g., RNA-Seq for Stress Response)

| Method | Type | Number of Final Features | 5-Fold CV Accuracy (%) | Interpretability Score (1-5) |

|---|---|---|---|---|

| LASSO Logistic Regression | Feature Selection | 45 (genes) | 88.2 | 5 |

| Random Forest Feature Selection | Feature Selection | 60 (genes) | 86.7 | 4 |

| PCA + Logistic Regression | Feature Extraction | 15 (Principal Components) | 92.1 | 2 |

| PLS-DA | Feature Extraction | 10 (Latent Variables) | 93.5 | 3 |

| Full Dataset (10,000 features) | Baseline | 10,000 | 65.4 (Overfit) | 1 |

Experimental Protocols