3D LiDAR in Plant Phenomics: Precision Measurement of Architecture for Enhanced Drug Discovery & Crop Yield

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on leveraging LiDAR technology for quantitative 3D plant architecture measurement. We explore the foundational principles of LiDAR in plant science, detail methodological approaches for data acquisition and processing, address common challenges and optimization techniques, and critically compare LiDAR's performance against other phenotyping technologies. The goal is to empower scientists with the knowledge to implement robust, high-throughput phenotyping pipelines that can reveal novel plant traits and responses relevant to pharmaceutical discovery and agricultural biotechnology.

Demystifying LiDAR for Plant Science: Principles, Advantages, and Core Use Cases

Application Notes

Core Principles in Plant Phenotyping

LiDAR (Light Detection and Ranging) is an active remote sensing technology that measures distance by illuminating a target with laser light and analyzing the reflected signal. In plant architecture research, it enables non-destructive, high-throughput 3D quantification of structural traits.
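For pulsed (time-of-flight) instruments, range follows directly from the round-trip travel time of each pulse: d = c·t/2. The minimal sketch below uses illustrative helper names and the vacuum speed of light, so it ignores the roughly 0.03 % slowdown in air. It also shows why millimetre-level ranging needs timing resolution of a few picoseconds.

```python
# Minimal sketch of pulse time-of-flight ranging (illustrative helper names).
SPEED_OF_LIGHT = 299_792_458.0  # m/s in vacuum; refraction in air (n ~ 1.0003) is ignored


def tof_range(round_trip_s: float) -> float:
    """One-way range (m) from a pulse's round-trip travel time (s): d = c * t / 2."""
    return SPEED_OF_LIGHT * round_trip_s / 2.0


def round_trip_time(range_m: float) -> float:
    """Round-trip time (s) corresponding to a one-way range (m): t = 2 * d / c."""
    return 2.0 * range_m / SPEED_OF_LIGHT


print(f"20 ns echo  -> {tof_range(20e-9):.3f} m")
print(f"1 mm range  -> {round_trip_time(0.001):.2e} s round trip")
```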

Key Measurable Plant Traits:

- Canopy Height & Volume: Directly derived from point cloud extents.

- Leaf Area Index (LAI): Estimated from gap probability and point density.

- Plant Biomass: Correlated with volume metrics.

- Stem Diameter & Branching Angle: Extracted through cylinder fitting algorithms.

- Canopy Cover & Porosity: Calculated from penetration rates of laser pulses.

Quantitative Performance of LiDAR Systems

The selection of a LiDAR system depends on the scale and required resolution of the study.

Table 1: Comparison of LiDAR Platforms for Plant Research

| Platform | Typical Accuracy | Measurement Rate (pts/sec) | Best Application Scope | Approx. Cost Range (USD) |
|---|---|---|---|---|
| Terrestrial Laser Scanner (TLS) | ±1-3 mm | 100,000 - 2,000,000 | Single plant to plot scale, detailed architecture | \$20,000 - \$100,000+ |
| Mobile/Handheld Scanner | ±1-5 cm | 300,000 - 600,000 | In-field walking surveys, medium-scale plots | \$15,000 - \$50,000 |
| UAV-borne LiDAR | ±2-10 cm | 100,000 - 500,000 | Field-scale canopy structure, height mapping | \$10,000 - \$80,000 |
| Airborne LiDAR | ±10-50 cm | 50,000 - 250,000 | Regional-scale ecology, forest inventory | Service-based / \$100,000+ |

Table 2: Impact of Laser Wavelength on Plant Interaction

| Laser Wavelength (nm) | Penetration through Foliage | Typical Use Case | Key Advantage |
|---|---|---|---|
| 905 - 1550 (NIR) | Medium - High | Most terrestrial & UAV systems, biomass estimation | Good balance of eye safety, cost, and canopy penetration |
| 532 (Green) | Low | Bathymetric LiDAR; sometimes for canopy top mapping | High reflectivity from fresh leaves; visible spectrum |
| 690 - 950 (Red Edge) | Low - Medium | Specialized plant health/trait scanners (e.g., Fluorosensors) | Can be tuned for chlorophyll fluorescence/absorption |

Experimental Protocols

Protocol: TLS-based 3D Reconstruction of Tree Architecture

Objective: To capture a highly detailed 3D point cloud of an individual tree for architectural parameter extraction (e.g., stem diameter at breast height, DBH; branch angles; leaf area density profile).

Materials:

- Terrestrial Laser Scanner (e.g., Faro Focus, Leica RTC360)

- Calibration targets (spheres or checkerboards)

- Laptop with scanning control software

- Dense foam pad or tripod

- Tape measure

- Processing software (e.g., CloudCompare, 3D Forest, Autodesk ReCap)

Procedure:

Pre-scan Planning:

- Clear understory debris around the target tree to minimize occlusion.

- Plan multiple scan positions (typically 4-8) encircling the tree to ensure full coverage from root collar to canopy apex. Positions should have 30-60% overlap in field of view.

Scanner Setup:

- Place the scanner on a stable, level surface using the foam pad or tripod at a distance capturing the full tree height (typically 1.5x tree height away).

- Power on the scanner and connect to the control laptop.

- Place 3-4 calibration targets in the scene, ensuring they are visible from multiple planned scan positions.
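The planning rules in this protocol (4-8 positions encircling the tree, roughly 1.5x tree height away) can be turned into a first-draft station layout. This is a sketch that assumes flat, unobstructed ground and evenly spaced stations on a circle centred on the stem; `scan_positions` is a hypothetical helper, and real stations are shifted in the field to avoid obstacles and to keep shared targets in view.

```python
import math


def scan_positions(tree_height_m, n_positions=6, distance_factor=1.5, centre=(0.0, 0.0)):
    """Evenly spaced (x, y) scanner stations on a circle around the stem (hypothetical helper).

    Radius = distance_factor * tree height, following the ~1.5x rule of thumb above.
    Assumes flat, obstacle-free ground.
    """
    if not 4 <= n_positions <= 8:
        raise ValueError("the protocol suggests 4-8 scan positions")
    radius = distance_factor * tree_height_m
    step = 2.0 * math.pi / n_positions
    return [(centre[0] + radius * math.cos(i * step), centre[1] + radius * math.sin(i * step))
            for i in range(n_positions)]


for x, y in scan_positions(tree_height_m=12.0, n_positions=6):
    print(f"station at x = {x:6.2f} m, y = {y:6.2f} m")
```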

Data Acquisition:

- Initiate the first scan at a high resolution/quality setting (e.g., 1/4 or 1/8 resolution at 2x quality). Record the scan.

- Move the scanner to the next pre-planned position. Ensure at least 3 calibration targets from the previous scan are visible.

- Repeat the scan. Continue until the tree is fully encircled.

Data Processing (Point Cloud Generation):

- Transfer all scan data to a processing workstation.

- Registration: Use the software's automatic target-based or cloud-to-cloud registration to align all individual scans into a single coordinate system.

- Cleaning: Manually remove clear outlier points (e.g., flying birds, passing insects) and non-tree points (e.g., distant buildings). Use selection and deletion tools.

- Downsampling (Optional): If data volume must be reduced for analysis, apply a voxel grid filter (e.g., 1-5 mm voxel size, called "leaf size" in PCL) that preserves structure.

- Colorization: Apply RGB color from the scanner's camera (if available) to the point cloud for visualization.
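The optional downsampling step can be sketched with NumPy alone: every point is assigned to a cubic voxel, and each occupied voxel is replaced by the centroid of its points. This is a minimal illustration, not the exact filter of any particular package (some keep the voxel centre or the point nearest the centroid instead); the 5 mm voxel size is one example from the 1-5 mm range above.

```python
import numpy as np


def voxel_downsample(points, voxel_size):
    """Replace all points inside each occupied cubic voxel by their centroid.

    points: (N, 3) XYZ array in metres; voxel_size: voxel edge length in metres.
    """
    keys = np.floor(points / voxel_size).astype(np.int64)             # integer voxel index per point
    _, inverse, counts = np.unique(keys, axis=0, return_inverse=True, return_counts=True)
    inverse = inverse.reshape(-1)                                      # keep 1-D across NumPy versions
    sums = np.zeros((counts.size, 3))
    np.add.at(sums, inverse, points)                                   # accumulate coordinates per voxel
    return sums / counts[:, None]


rng = np.random.default_rng(0)
cloud = rng.uniform(0.0, 0.1, (100_000, 3))          # synthetic 10 cm cube of points
reduced = voxel_downsample(cloud, voxel_size=0.005)   # 5 mm voxels
print(cloud.shape, "->", reduced.shape)
```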

Analysis (Architectural Metric Extraction):

- Stem Diameter: Isolate a thin horizontal slice of stem points at breast height (1.3 m above the ground). Fit a circle or cylinder using a RANSAC algorithm and report the diameter (DBH).

- Branching Angles: Manually select points defining a branch and its parent stem axis. Compute the angle between the derived vectors.

- Leaf Area Density: Divide the canopy into horizontal layers of voxels (e.g., 10 cm cubes). Calculate gap probability or contact frequency within each voxel to derive the leaf area density profile.
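The stem-diameter step can be illustrated with a RANSAC circle fit on the x-y coordinates of the breast-height slice, followed by an algebraic least-squares refinement on the RANSAC inliers. This sketch assumes the slice has already been clipped from the registered cloud and that the stem is close to vertical; the 5 mm inlier tolerance, iteration count, synthetic data and function names are illustrative assumptions.

```python
import numpy as np


def circle_from_3_points(p1, p2, p3):
    """Centre and radius of the circle through three 2-D points, or None if collinear."""
    (ax, ay), (bx, by), (cx, cy) = p1, p2, p3
    d = 2.0 * (ax * (by - cy) + bx * (cy - ay) + cx * (ay - by))
    if abs(d) < 1e-12:
        return None
    a2, b2, c2 = ax**2 + ay**2, bx**2 + by**2, cx**2 + cy**2
    ux = (a2 * (by - cy) + b2 * (cy - ay) + c2 * (ay - by)) / d
    uy = (a2 * (cx - bx) + b2 * (ax - cx) + c2 * (bx - ax)) / d
    return np.array([ux, uy]), float(np.hypot(ax - ux, ay - uy))


def ransac_circle(xy, n_iter=500, tol=0.005, seed=0):
    """RANSAC circle fit on (N, 2) slice points; returns (centre, radius, inlier mask)."""
    rng = np.random.default_rng(seed)
    best = (None, 0.0, np.zeros(len(xy), dtype=bool))
    for _ in range(n_iter):
        fit = circle_from_3_points(*xy[rng.choice(len(xy), 3, replace=False)])
        if fit is None:
            continue
        centre, radius = fit
        inliers = np.abs(np.linalg.norm(xy - centre, axis=1) - radius) < tol
        if inliers.sum() > best[2].sum():
            best = (centre, radius, inliers)
    return best


def refine_circle(xy):
    """Algebraic least-squares fit: x² + y² = 2ax + 2by + c, radius = sqrt(c + a² + b²)."""
    A = np.column_stack([2.0 * xy[:, 0], 2.0 * xy[:, 1], np.ones(len(xy))])
    (a, b, c), *_ = np.linalg.lstsq(A, (xy**2).sum(axis=1), rcond=None)
    return np.array([a, b]), float(np.sqrt(c + a**2 + b**2))


# Synthetic 1.3 m slice: a 30 cm stem seen from one side only, plus twig/leaf clutter.
rng = np.random.default_rng(1)
theta = rng.uniform(0.0, np.pi, 300)
stem = np.column_stack([0.15 * np.cos(theta), 0.15 * np.sin(theta)])
stem += rng.normal(0.0, 0.002, stem.shape)
slice_xy = np.vstack([stem, rng.uniform(-0.3, 0.3, (60, 2))])

_, _, inliers = ransac_circle(slice_xy)
centre, radius = refine_circle(slice_xy[inliers])
print(f"DBH ~ {200 * radius:.1f} cm")
```

RANSAC discards the clutter, and the least-squares refinement uses all inliers rather than the three sampled points, which reduces the effect of ranging noise on the reported diameter.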

Protocol: UAV-LiDAR for Plot-Level Canopy Height Model (CHM) Generation

Objective: To generate a high-resolution Canopy Height Model for an experimental crop plot to assess canopy height uniformity and identify stress zones.

Materials:

- UAV-integrated LiDAR system (e.g., YellowScan Mapper, Routescene LidarPod)

- Ground Control Points (GCPs; at least 5-10)

- RTK/PPK-enabled GPS for GCP survey

- Flight planning software (e.g., UgCS, DJI Pilot)

- Processing suite (e.g., LiDAR360, LAStools, GreenValley LiDARForest)

Procedure:

Pre-flight Survey:

- Lay out 5-10 high-contrast GCPs (e.g., white panels) evenly across the plot perimeter and center.

- Use the survey-grade GPS to record the precise latitude, longitude, and elevation of each GCP center.

Flight Planning:

- In flight planning software, define the plot polygon.

- Set flight parameters: Altitude (50-75m AGL), speed (3-5 m/s), sidelap (60-70%), scan frequency (maximize based on system).

- Ensure the planned flight lines are perpendicular to the predominant row direction (if any).
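These flight parameters interact: altitude and the scanner's across-track field of view set the swath width, sidelap sets the line spacing, and speed together with pulse rate sets the point density. The back-of-envelope sketch below assumes flat terrain and a nadir-centred scan with a symmetric field of view, and counts emitted pulses only (multiple returns per pulse would raise the density). The 70° field of view and 240 kHz pulse rate are hypothetical example values, not specifications of the systems named above.

```python
import math


def flight_plan(altitude_m, fov_deg, speed_mps, pulse_rate_hz, sidelap):
    """Back-of-envelope UAV-LiDAR planning numbers (flat terrain, nadir-centred scan).

    Returns swath width (m), flight-line spacing (m) and the average pulse density
    (pulses/m²) over the block. Each ground point is covered by ~1 / (1 - sidelap)
    strips, so averaging over the block gives pulse_rate / (speed * line_spacing).
    """
    swath = 2.0 * altitude_m * math.tan(math.radians(fov_deg) / 2.0)
    spacing = swath * (1.0 - sidelap)
    density = pulse_rate_hz / (speed_mps * spacing)
    return swath, spacing, density


# Hypothetical sensor: 70° across-track field of view, 240 kHz pulse rate.
swath, spacing, density = flight_plan(altitude_m=60, fov_deg=70, speed_mps=4,
                                      pulse_rate_hz=240_000, sidelap=0.65)
print(f"swath {swath:.1f} m, line spacing {spacing:.1f} m, ~{density:.0f} pulses/m²")
```

In this simplified model, density is inversely proportional to both speed and altitude, so halving the speed doubles the expected pulse density.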

Data Acquisition:

- Perform system pre-flight checks (battery, GPS lock, sensor heating).

- Execute the autonomous flight plan.

- Record flight log.

Data Processing (Point Cloud & CHM Generation):

- Trajectory Processing: Use the vendor software to integrate raw LiDAR data with UAV IMU/GNSS data to produce a georeferenced point cloud (.las or .laz format).

- Classification: Apply a ground-filtering algorithm (e.g., the Cloth Simulation Filter, CSF) to classify points into "ground" and "non-ground" (vegetation) classes.

- Ground Model: Interpolate the "ground" points to create a Digital Terrain Model (DTM) using Triangular Irregular Network (TIN) or raster interpolation.

- Surface Model: Interpolate all first-return points (or "non-ground") to create a Digital Surface Model (DSM).

- CHM Calculation: Subtract the DTM from the DSM (CHM = DSM - DTM) using a raster calculator to create the height-normalized model.
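The DSM, DTM and CHM steps can be sketched on a regular grid with NumPy. For brevity, this sketch takes the highest point per cell as the DSM and the lowest ground-classified point per cell as the DTM, instead of the TIN or raster interpolation described above; cells without ground points are left as NaN, which a real pipeline would fill by interpolation. The ground mask is assumed to come from the classification step (e.g., CSF), and all names are hypothetical.

```python
import numpy as np


def rasterize(xyz, cell, origin, shape, keep="max"):
    """Grid points into square cells; each cell keeps its max (or min) z, NaN if empty."""
    cols = np.floor((xyz[:, 0] - origin[0]) / cell).astype(int)
    rows = np.floor((xyz[:, 1] - origin[1]) / cell).astype(int)
    inside = (rows >= 0) & (rows < shape[0]) & (cols >= 0) & (cols < shape[1])
    grid = np.full(shape, -np.inf if keep == "max" else np.inf)
    reducer = np.maximum if keep == "max" else np.minimum
    reducer.at(grid, (rows[inside], cols[inside]), xyz[inside, 2])
    grid[np.isinf(grid)] = np.nan
    return grid


def canopy_height_model(points, is_ground, cell=0.25):
    """CHM = DSM - DTM on a common grid (simplified: empty cells are not interpolated)."""
    origin = points[:, :2].min(axis=0)
    extent = points[:, :2].max(axis=0) - origin
    shape = (int(extent[1] // cell) + 1, int(extent[0] // cell) + 1)
    dsm = rasterize(points, cell, origin, shape, keep="max")              # highest return per cell
    dtm = rasterize(points[is_ground], cell, origin, shape, keep="min")   # lowest ground return per cell
    return dsm - dtm


# Synthetic plot: gently sloping soil under a uniform 0.8 m crop canopy.
rng = np.random.default_rng(2)
xy = rng.uniform(0.0, 2.0, (20_000, 2))
terrain = 100.0 + 0.05 * xy[:, 0]
is_ground = rng.random(len(xy)) < 0.5            # stand-in for a CSF classification
z = np.where(is_ground, terrain, terrain + 0.8)
chm = canopy_height_model(np.column_stack([xy, z]), is_ground)
print(chm.shape, round(float(np.nanmean(chm)), 2))
```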

Analysis:

- Calculate plot-level statistics from the CHM raster: mean height, max height, height variance.

- Generate a height histogram to assess canopy uniformity.

- Visually inspect the CHM for areas of lower height, potentially indicating water/nutrient stress or disease.
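The plot-level statistics, the height histogram and a simple screen for low-height zones could be computed from the CHM raster as below. The sketch assumes NaN marks no-data cells; flagging cells below 80 % of the plot median is an arbitrary illustrative rule for directing visual inspection, not a validated stress threshold.

```python
import numpy as np


def chm_summary(chm, low_fraction=0.8, bins=10):
    """Plot-level statistics from a CHM raster (NaN = no data) plus a low-height mask."""
    finite = np.isfinite(chm)
    valid = chm[finite]
    counts, edges = np.histogram(valid, bins=bins)                 # canopy-uniformity histogram
    threshold = low_fraction * np.median(valid)
    low_cells = np.where(finite, chm, np.inf) < threshold          # no-data cells are never flagged
    return {
        "mean": float(valid.mean()),
        "max": float(valid.max()),
        "variance": float(valid.var()),
        "histogram": (counts, edges),
        "low_cells": low_cells,
    }


chm = np.full((20, 20), 0.9)
chm[5:8, 10:14] = 0.5      # a patch of stunted plants
chm[0, 0] = np.nan         # one no-data cell
summary = chm_summary(chm)
print(round(summary["mean"], 3), int(summary["low_cells"].sum()))
```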

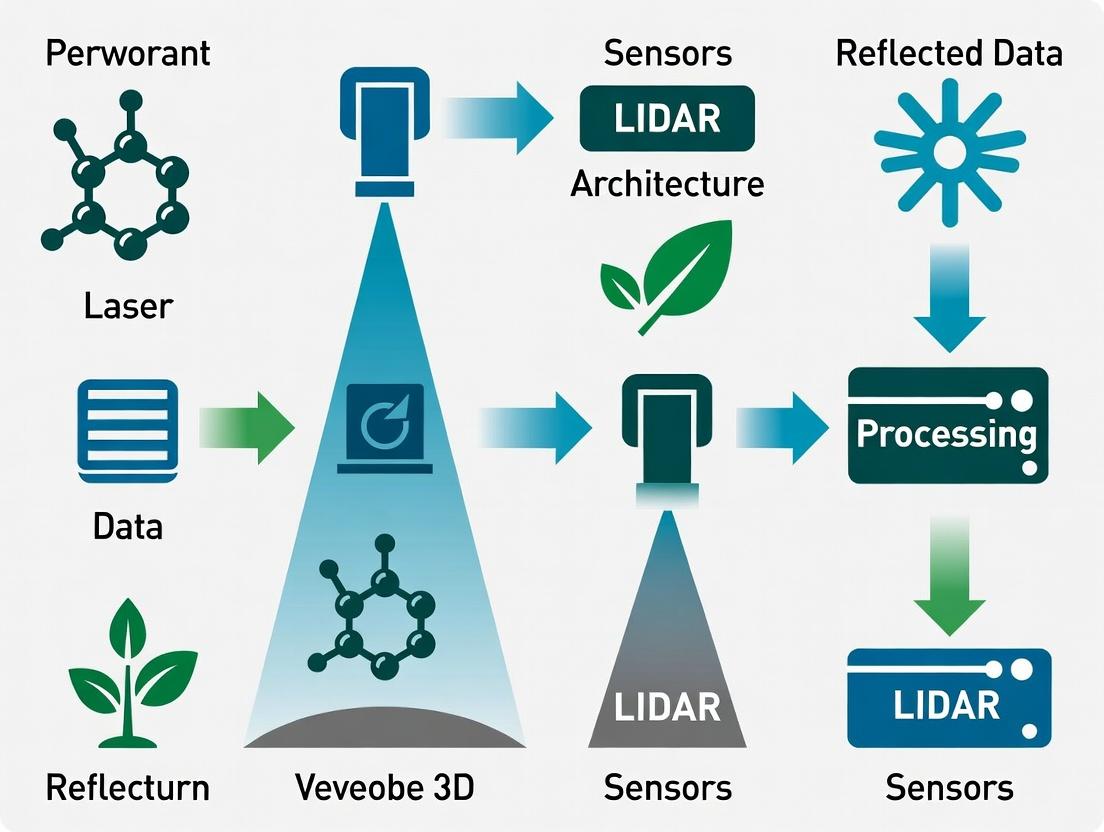

Diagrams

LiDAR Sensing: From Pulse to Point Workflow

3D Plant Phenotyping LiDAR Workflow

The Scientist's Toolkit

Table 3: Essential Research Reagents & Solutions for LiDAR Plant Research

| Item | Function/Application | Example Product/Note |
|---|---|---|
| Terrestrial Laser Scanner (TLS) | High-resolution, ground-based 3D data capture of plant structure | Faro Focus S, Leica BLK360, RIEGL VZ-400 |
| UAV LiDAR Payload | Aerial capture of canopy structure and plot/field-scale topography | Routescene LidarPod, YellowScan Mapper, Geodata Wizard |
| Calibration Spheres/Targets | Precise co-registration of multiple TLS scans | High-contrast (black/white) spheres or checkerboard targets |
| Ground Control Points (GCPs) | Absolute georeferencing and accuracy assessment for UAV data | Survey panels (e.g., AeroPoints) or permanently marked survey points |
| RTK/PPK GPS System | Survey-grade positioning for mapping GCPs and/or enhancing UAV trajectory accuracy | Trimble R-series, Emlid Reach RS2+ |
| Point Cloud Processing Software | Registration, filtering, classification, and analysis of .las/.laz files | CloudCompare (open-source), LiDAR360, LAStools, 3D Forest |
| Canopy Analysis Software | Extracts specific plant architectural traits from point clouds | Computree (open-source), TreeQSM, SimpleTree |
| Spectral Reflectance Targets | Radiometric calibration when using intensity values from multispectral LiDAR | Spectralon panels of known reflectance |
| Voxel-Based Analysis Scripts | Custom (e.g., Python/R) scripts to calculate Leaf Area Density (LAD) from voxelized point clouds | Uses libraries such as lidR (R) or laspy/pandas (Python) |

Why LiDAR for Plants? Key Advantages Over Traditional 2D Imaging

Application Notes

LiDAR (Light Detection and Ranging) has emerged as a transformative tool for quantifying 3D plant architecture, directly addressing limitations inherent to traditional 2D imaging (e.g., RGB photography). For 3D phenotyping research, LiDAR's core advantage is its capacity to capture precise, volumetric structural data non-destructively and, because it supplies its own illumination, largely independently of ambient lighting.

Key Quantitative Advantages

The fundamental metrics provided by LiDAR enable researchers to move beyond proxy measurements to direct architectural quantification.

Table 1: Comparison of LiDAR and 2D Imaging for Plant Phenotyping

| Phenotypic Trait | LiDAR 3D Measurement | Traditional 2D Imaging Measurement | LiDAR Advantage |
|---|---|---|---|
| Biomass (Volume) | Direct voxel-based or convex hull volume estimation (cm³). | Estimated from projected area, requiring species-specific allometric models. | Direct, non-destructive volumetric assessment; converting volume to biomass still requires calibration against harvested samples. |
| Canopy Height | Direct Z-axis measurement from the point cloud (mm accuracy with TLS). | Requires a scale reference in the image; sensitive to camera angle. | High vertical precision and accuracy, independent of view angle. |
| Leaf Area Index (LAI) | Calculated from 3D point density and gap fraction theory. | Estimated from hemispherical photography or light transmittance. | Spatially explicit LAI; can be calculated for canopy sub-volumes. |
| Canopy Coverage | 3D canopy volume or envelope (m³). | 2D projected area (m²). | Captures canopy density and porosity in three dimensions. |
| Stem Diameter | Direct cylinder fitting to the stem point cloud (mm accuracy). | Caliper measurement or 2D image analysis with scale. | Non-contact, high-throughput measurement possible. |
| Light Interception | Calculated via 3D radiative transfer models using explicit architecture. | Estimated from 2D canopy cover or transmitted-light sensors. | Mechanistic modeling based on actual 3D structure. |
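Voxel-based volume, one of the direct measurements in the table above, is the number of occupied voxels times the volume of one voxel. The NumPy sketch below (hypothetical helper name, synthetic data) also shows two caveats worth reporting alongside any such value: for thin organs such as leaves the estimate scales with the chosen voxel size, and fine voxels underestimate solid volumes when the point density is too low to fill them.

```python
import numpy as np


def voxel_volume(points, voxel_size):
    """Volume (m³) = number of occupied voxels x volume of one voxel."""
    occupied = np.unique(np.floor(points / voxel_size).astype(np.int64), axis=0)
    return occupied.shape[0] * voxel_size**3


rng = np.random.default_rng(6)
solid = rng.uniform(0.0, 0.5, (200_000, 3))                                         # filled 0.5 m cube
sheet = np.column_stack([rng.uniform(0.0, 0.5, (200_000, 2)), np.zeros(200_000)])  # flat 0.5 x 0.5 m "leaf"

for size in (0.05, 0.01):
    print(f"voxel {size} m: cube {voxel_volume(solid, size):.4f} m³, "
          f"sheet {voxel_volume(sheet, size):.4f} m³")
```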

Table 2: Typical LiDAR Sensor Specifications for Plant Research

| Parameter | Terrestrial Laser Scanner (TLS) | Mobile/Handheld LiDAR | LiDAR on UAV/Drone |
|---|---|---|---|
| Range | 1-100 m (high accuracy at close range) | 0.5-50 m | 5-200 m |
| Accuracy | Sub-mm to 2 mm | 1-10 mm | 10-30 mm |
| Scan Speed | 0.5-2 million points/sec | 300,000-2 million points/sec | 100,000-500,000 points/sec |
| Field of View | 360° horizontal, 300° vertical | 360° horizontal, 270° vertical | 70-90° (nadir or oblique) |
| Key Use Case | Detailed single plant or small plot architecture | In-field, under-canopy mobile mapping | High-throughput field-scale canopy assessment |
| Point Density | Very High (1000s pts/cm²) | High (100s pts/cm²) | Moderate (10s pts/cm²) |

Experimental Protocols

Protocol 1: High-Resolution 3D Architecture Capture of Individual Plants Using Terrestrial LiDAR

Objective: To acquire a dense, complete 3D point cloud of a single plant for architectural trait extraction (e.g., leaf angle distribution, branch topology, stem diameter).

Materials: See "The Scientist's Toolkit" below.

Methodology:

- Site Preparation: Place the target plant on a rotating platform in a controlled environment (growth chamber or lab) or in a field setting with minimal wind.

- Scanner Setup: Position the TLS (e.g., Faro Focus) on a stable tripod at a distance capturing the entire plant within the scanner's optimal range (e.g., 1-3 m).

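- Scan Registration Targets: Place spherical or checkerboard targets around the plant; these will be used to align multiple scans. When the plant is rotated on a platform instead of moving the scanner, fix the targets to the platform so that they rotate with the plant.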

- Multi-Scan Acquisition:

  a. Perform the first scan from the primary position.

  b. Rotate the platform approximately 120 degrees. Perform a second scan.

  c. Rotate another 120 degrees. Perform a third scan.

  d. Optionally, perform a top-down scan if the scanner allows for vertical tilt.

- Point Cloud Processing:

  a. Registration: Use the scanner's software (e.g., Faro SCENE) to align all scans into a single coordinate system using the identified targets.

  b. Noise Filtering: Apply statistical outlier removal or radius-based filters to eliminate spurious points (e.g., dust, flying insects).

  c. Segmentation: Isolate the plant point cloud from the background (platform, soil) using clustering algorithms (e.g., Euclidean clustering) or manual cropping.

  d. Export: Export the cleaned, registered point cloud in a standard format (e.g., .las, .ply, .xyz).
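Statistical outlier removal, as used in the noise-filtering step, can be sketched as follows: compute each point's mean distance to its k nearest neighbours, then discard points whose value exceeds the cloud-wide mean by more than a chosen number of standard deviations. This brute-force NumPy version is only suitable for a few thousand points; for full scans a KD-tree-based implementation such as Open3D's `remove_statistical_outlier` is the practical choice. The k = 8 and 2-sigma settings and the synthetic data are example values.

```python
import numpy as np


def statistical_outlier_removal(points, k=8, std_ratio=2.0):
    """Drop points whose mean k-NN distance exceeds mean + std_ratio * std over the cloud.

    Brute-force O(N²) distance matrix: illustration only, for small clouds.
    """
    dists = np.linalg.norm(points[:, None, :] - points[None, :, :], axis=-1)
    dists.sort(axis=1)
    mean_knn = dists[:, 1:k + 1].mean(axis=1)      # column 0 is each point's distance to itself
    keep = mean_knn <= mean_knn.mean() + std_ratio * mean_knn.std()
    return points[keep], keep


rng = np.random.default_rng(7)
plant = rng.normal(0.0, 0.05, (1000, 3))                                    # compact plant cloud
strays = rng.uniform(1.0, 2.0, (20, 3)) * rng.choice([-1.0, 1.0], (20, 3))  # far-away noise points
cleaned, keep = statistical_outlier_removal(np.vstack([plant, strays]))
print(f"kept {keep.sum()} of {keep.size} points")
```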

Protocol 2: Field-Based Canopy Phenotyping Using UAV LiDAR

Objective: To measure plot-level canopy height, volume, and LAI for high-throughput genetic or treatment screening.

Methodology:

- Flight Planning: Use UAV flight planning software (e.g., UgCS, DJI Terra). Define a lawnmower-pattern flight path over the experimental plots with >75% side overlap.

- System Check: Ensure UAV LiDAR system (e.g., Routescene LidarPod) is calibrated (boresight, IMU). Check GPS base station connectivity for Real-Time Kinematic (RTK) positioning.

- Ground Control: Place ground control points (GCPs) with known coordinates around the field perimeter.

- Data Acquisition: Execute the autonomous flight at a constant altitude (e.g., 20-30 m AGL) and speed (e.g., 3-5 m/s) under stable lighting (avoiding solar noon).

- Data Processing Workflow:

  a. Trajectory Computation: Process the raw GNSS/IMU data to derive a precise sensor trajectory.

  b. Point Cloud Generation: Fuse trajectory data with laser ranges to generate a georeferenced point cloud.

  c. Normalization: Classify ground points using an algorithm (e.g., Cloth Simulation Filter). Subtract ground elevation (Digital Terrain Model) from canopy points to create a Canopy Height Model (CHM).

  d. Plot Segmentation: Use plot boundary shapefiles to clip point clouds for individual experimental units.

  e. Metric Extraction: Calculate metrics per plot: 95th percentile of height, canopy cover above a threshold, rumple index (canopy roughness).
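The per-plot metrics of the extraction step might be computed as below. This sketch assumes the plot's points are already height-normalized and clipped to the plot, that the CHM raster has no gaps, and that canopy cover is the share of returns above a height threshold (0.5 m here, an arbitrary example). The rumple index is taken as the area of the triangulated CHM surface divided by its planimetric area, so a perfectly flat canopy scores 1.

```python
import numpy as np


def plot_metrics(heights, chm, cell, cover_threshold=0.5):
    """Per-plot metrics from normalized point heights (m) and a gap-free CHM raster.

    heights: 1-D array of point heights above ground, already clipped to the plot.
    chm: 2-D canopy height raster (m) of the same plot with square cells of size `cell` (m).
    """
    chm = np.asarray(chm, dtype=float)
    p95 = float(np.percentile(heights, 95))
    cover = float(np.mean(heights > cover_threshold))   # share of returns above threshold

    # Rumple index: split each 2x2 block of cell centres into two triangles,
    # sum their 3-D areas and divide by the planimetric area they cover.
    z00, z01 = chm[:-1, :-1], chm[:-1, 1:]   # (row, col) and (row, col + 1)
    z10, z11 = chm[1:, :-1], chm[1:, 1:]     # (row + 1, col) and (row + 1, col + 1)
    zero, step = np.zeros_like(z00), np.full_like(z00, cell)
    to_x = np.stack([step, zero, z01 - z00], axis=-1)    # edge towards the next column
    to_xy = np.stack([step, step, z11 - z00], axis=-1)   # diagonal edge
    to_y = np.stack([zero, step, z10 - z00], axis=-1)    # edge towards the next row
    surface = 0.5 * (np.linalg.norm(np.cross(to_x, to_xy), axis=-1).sum()
                     + np.linalg.norm(np.cross(to_xy, to_y), axis=-1).sum())
    rumple = float(surface / (z00.size * cell * cell))
    return {"p95_height": p95, "canopy_cover": cover, "rumple_index": rumple}


rng = np.random.default_rng(3)
heights = rng.uniform(0.0, 1.2, 5000)
chm = 0.9 + 0.1 * rng.standard_normal((40, 40))
print(plot_metrics(heights, chm, cell=0.1))
```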

UAV-LiDAR Field Phenotyping Workflow

The Scientist's Toolkit

Table 3: Key Research Reagent Solutions & Materials for LiDAR Plant Phenotyping

| Item / Solution | Function / Purpose |
|---|---|
| Terrestrial Laser Scanner (TLS) | High-accuracy, static scanner for detailed 3D models of individual plants or small plots. |
| UAV-borne LiDAR System | Integrated sensor package (LiDAR, GNSS, IMU) for rapid, high-throughput aerial scanning of field canopies. |
| Mobile Mapping System (e.g., SLAM LiDAR) | Handheld or cart-based system for capturing under-canopy and plot-level data in dense plantings. |
| Registration Targets (Spheres/Checkerboards) | Provide reference points for accurately aligning multiple scans into a unified point cloud. |
| RTK GNSS Base Station | Provides centimeter-accuracy positioning corrections for georeferencing UAV or mobile LiDAR data. |
| Point Cloud Processing Software (e.g., CloudCompare, LiDAR360) | Open-source or commercial software for visualization, filtering, segmentation, and analysis of 3D point data. |
| 3D Plant Reconstruction Software (e.g., PlantNet, TreeQSM) | Specialized algorithms to convert point clouds into quantitative structural models (leaf areas, stem volumes). |
| Calibrated Rotating Platform | Allows for full 360-degree capture of a plant with minimal occlusions in controlled environments. |

Within the broader thesis on LiDAR for 3D plant architecture measurement, this section presents application notes and protocols. LiDAR goes beyond traditional 2D imaging by capturing precise, volumetric structural data, enabling quantitative analysis of plant growth, development, and stress responses at scale. These protocols are foundational for linking 3D phenotype to genotype and physiological state.

Application Note 1: High-Throughput Phenotyping (HTP) for Genetic Screening

Objective: To non-destructively quantify architectural traits of a plant population for genome-wide association studies (GWAS) or QTL mapping.

Key Measurable Traits (Quantitative Data):

Table 1: Core 3D Architectural Traits Extractable from LiDAR Point Clouds

| Trait Category | Specific Metric | Description | Typical Range/Units |
|---|---|---|---|
| Canopy Structure | Canopy Height | Maximum height from base | 0.2 - 2.0 m |
| | Canopy Volume | 3D convex hull or voxel-based volume | 0.1 - 50.0 L |
| | Canopy Projected Area | Area from top-down view | 0.01 - 1.0 m² |
| Complex Architecture | Plant Surface Area | Total leaf & stem area | 0.05 - 5.0 m² |
| | Leaf Area Index (LAI) | One-sided leaf area per unit ground area | 0.1 - 6.0 (m²/m²) |
| | Leaf Angle Distribution | Mean leaf inclination angle | 0 - 90 degrees |
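Leaf inclination, the basis of the leaf angle distribution metric, can be estimated per leaf by fitting a plane to the leaf's points with principal component analysis: the eigenvector with the smallest eigenvalue approximates the surface normal, and the angle between that normal and the vertical equals the angle between the leaf and the horizontal. The sketch assumes leaves have already been segmented and are roughly planar; curved leaves would need to be split into smaller patches first. The synthetic leaf is an illustrative assumption.

```python
import numpy as np


def leaf_inclination_deg(leaf_points):
    """Angle (degrees) between a roughly planar leaf patch and the horizontal plane."""
    _, eigvecs = np.linalg.eigh(np.cov(leaf_points, rowvar=False))
    normal = eigvecs[:, 0]                  # eigh sorts ascending: smallest-variance direction
    cos_to_vertical = min(1.0, abs(normal[2]) / np.linalg.norm(normal))
    return float(np.degrees(np.arccos(cos_to_vertical)))


# Synthetic 10 x 10 cm leaf tilted 30° about the x-axis, with ~0.5 mm noise.
rng = np.random.default_rng(5)
u, v = rng.uniform(-0.05, 0.05, (2, 400))
tilt = np.radians(30.0)
leaf = np.column_stack([u, v * np.cos(tilt), v * np.sin(tilt)])
leaf += rng.normal(0.0, 0.0005, leaf.shape)
print(f"inclination ~ {leaf_inclination_deg(leaf):.1f} deg")
```

The plant-level value in the table is then the mean over segmented leaves, e.g. `np.mean([leaf_inclination_deg(p) for p in leaves])` for a hypothetical list `leaves` of per-leaf point arrays.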

Experimental Protocol:

- System Setup: Install a static terrestrial LiDAR scanner (e.g., FARO Focus) or a mobile gantry-mounted system over a field or growth chamber. Ensure consistent ambient lighting.

- Plant Material: Grow a diversity panel of genotypes (e.g., 500 Arabidopsis accessions or 200 maize lines) under controlled conditions.

- Scanning: Position scanner at multiple stations around the plot. For each station, capture a 360° high-resolution scan. Register multiple scans using fixed target spheres.

- Data Processing: Merge point clouds. Apply noise filtering (Statistical Outlier Removal). Segment individual plants using a clustering algorithm (e.g., Euclidean clustering) if grown in proximity.

- Trait Extraction: Use custom scripts (e.g., in Python with Open3D) or software (e.g., PlantNet) to calculate metrics in Table 1 from the segmented 3D point cloud.

- Statistical Integration: Correlate extracted 3D traits with genotypic data for association analysis.
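Euclidean clustering, mentioned in the data-processing step for separating neighbouring plants, groups points that are linked by chains of neighbours closer than a distance tolerance. The sketch below is a brute-force NumPy illustration with a hypothetical helper name and synthetic data; it holds an N x N distance matrix in memory, so real scans call for a KD-tree-based implementation (e.g., PCL's Euclidean cluster extraction). The 3 cm tolerance and 50-point minimum are example values.

```python
from collections import deque

import numpy as np


def euclidean_clusters(points, tolerance=0.03, min_size=50):
    """Label points linked by chains of neighbours closer than `tolerance` (m).

    Returns an int label per point; clusters smaller than `min_size` get -1 (noise).
    Brute-force O(N²) memory: illustration only.
    """
    n = len(points)
    sq_dist = ((points[:, None, :] - points[None, :, :]) ** 2).sum(axis=-1)
    neighbours = sq_dist < tolerance**2
    visited = np.zeros(n, dtype=bool)
    labels = np.full(n, -1)
    next_label = 0
    for seed in range(n):
        if visited[seed]:
            continue
        visited[seed] = True
        queue, members = deque([seed]), [seed]
        while queue:                                   # breadth-first region growing
            i = queue.popleft()
            for j in np.flatnonzero(neighbours[i] & ~visited):
                visited[j] = True
                queue.append(j)
                members.append(j)
        if len(members) >= min_size:
            labels[members] = next_label
            next_label += 1
    return labels


# Two 10 cm "plants" separated by a 40 cm gap, plus five isolated stray points.
rng = np.random.default_rng(4)
plant_a = rng.uniform(0.0, 0.1, (500, 3))
plant_b = rng.uniform(0.0, 0.1, (500, 3)) + np.array([0.5, 0.0, 0.0])
strays = np.array([[2.0 + k, -1.0, 0.0] for k in range(5)])
labels = euclidean_clusters(np.vstack([plant_a, plant_b, strays]))
print(np.unique(labels, return_counts=True))
```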

Diagram 1: High-Throughput Phenotyping Workflow

Application Note 2: Monitoring Abiotic Stress Response Dynamics

Objective: To temporally track and quantify changes in plant architecture in response to drought, salinity, or nutrient deficiency.

Key Response Metrics (Quantitative Data):

Table 2: LiDAR-Derived Metrics for Stress Response Monitoring

| Stress Type | Early Response Metric | Late Response Metric | Measurement Frequency |
|---|---|---|---|
| Drought | Leaf Wilting (Surface Area Change) | Canopy Volume Reduction | Daily |
| | Leaf Angle Increase (Paraheliotropism) | Stem Height Growth Cessation | |
| Salinity | New Leaf Emergence Rate | Total Canopy Dieback Volume | Every 3 Days |
| Nutrient Deficiency | Leaf Size Asymmetry | Inter-node Length Reduction | Weekly |
| General | Biomass Estimate (from volume) | Architectural Complexity Index | As per protocol |

Experimental Protocol:

- Treatment & Control: Establish matched groups (n≥10 plants). Apply stressor (e.g., withhold water) to treatment group.

- Temporal Scanning: Use a fixed LiDAR scanner setup to scan all plants at defined intervals (see Table 2). Precisely maintain plant and scanner positions.

- Change Detection: Align sequential point clouds of the same plant using the Iterative Closest Point (ICP) algorithm.

- Differential Analysis: For each time point, subtract the control group's mean trait value from the stressed group's mean, then express the difference as a percent change relative to the control mean for each metric in Table 2.

- Pathway Correlation: Correlate significant architectural changes with molecular data (e.g., transcriptomics of stress pathways).
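The differential analysis reduces to a difference of group means per time point, expressed relative to the control mean. A minimal NumPy sketch, assuming each group's trait values are stored as a (time points x plants) array with NaN for missed scans; the toy numbers are illustrative, and significance testing across the n ≥ 10 plants per group would be a separate step.

```python
import numpy as np


def percent_change_vs_control(stressed, control):
    """Difference of group means per time point, and the same difference as % of the control mean.

    stressed, control: arrays of shape (n_timepoints, n_plants) for one trait; NaN = missed scan.
    """
    stressed_mean = np.nanmean(stressed, axis=1)
    control_mean = np.nanmean(control, axis=1)
    difference = stressed_mean - control_mean
    return difference, 100.0 * difference / control_mean


# Canopy volume (L) of two plants per group on three scan dates (toy numbers).
control = np.array([[10.0, 10.0], [12.0, 12.0], [14.0, 14.0]])
stressed = np.array([[10.0, 10.0], [9.0, 9.0], [7.0, np.nan]])
diff, pct = percent_change_vs_control(stressed, control)
print(diff, pct)
```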

Diagram 2: Stress Response to 3D Phenotype Pathway

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for LiDAR-Based Plant Architecture Research

| Item / Solution | Function / Role in Experiment |
|---|---|
| Terrestrial LiDAR Scanner (TLS) | Core sensor for capturing high-resolution 3D point clouds. Key specs: wavelength (905 nm vs 1550 nm), range, accuracy. |
| Mobile Robotic Platform (AGV/Drone) | Enables automated, high-throughput scanning of large field plots. |
| Registration Targets (Spheres/Checkerboards) | Used to align and merge multiple LiDAR scans into a unified coordinate system. |
| 3D Point Cloud Processing Software (e.g., CloudCompare, Open3D) | For visualization, filtering, segmentation, and geometric analysis of raw LiDAR data. |
| Plant-Specific Segmentation Algorithm | Custom code (e.g., deep learning models such as PointNet++) to separate the plant from the background and into individual organs. |
| Controlled Growth Environment | Growth chambers or phenotyping facilities with reproducible light, temperature, and humidity for standardized scans. |
| Geometric Calibration Kit | Objects of known dimension (e.g., calibration sphere) to validate LiDAR measurement accuracy. |
| Data Management Pipeline | Structured database (e.g., based on MIAPPE standards) to link 3D point clouds with metadata and genomic info. |

This document serves as an application note for a thesis focused on employing Light Detection and Ranging (LiDAR) for high-resolution 3D plant architecture phenotyping. Accurate measurement of structural traits—such as canopy height, volume, leaf area index (LAI), and stem diameter—is critical for research in plant biology, breeding, and pharmaceutical compound development from botanical sources. Selecting the appropriate LiDAR platform is paramount for data quality and experimental success. This note compares terrestrial (TLS), unmanned aerial vehicle-mounted (UAV-LiDAR), and lab-based (e.g., scanning gantry) systems, providing protocols for their application in controlled and field environments.

Hardware Comparison: Technical Specifications and Applications

Table 1: Core Specifications and Application Suitability of LiDAR Platforms for Plant Phenotyping

| Feature | Terrestrial Laser Scanner (TLS) | UAV-Mounted LiDAR | Lab-Based/Gantry System |
|---|---|---|---|
| Typical Range | 1-300 m | 50-250 m (AGL) | 0.1-10 m |
| Scanning Mechanism | Static, tripod-mounted; panoramic or dual-axis scan | Dynamic, moving platform; push-broom or rotating sensor | Precise linear or rotary stages in controlled lab |
| Accuracy (Relative) | Very High (mm-cm level) | Moderate-High (cm level) | Extremely High (sub-mm to mm level) |
| Point Density | Very High (1000s-10,000s pts/m² at close range) | Moderate (100s-1000s pts/m²) | Extremely High (10,000s+ pts/m²) |
| Coverage Area | Single plot to small field (requires multiple scans) | Large field scale (hectares per flight) | Single plant or small pot |
| Key Advantage | High-fidelity 3D reconstruction of under-canopy and stem architecture | Efficient coverage of canopy-level traits at field scale | Ultra-high resolution for detailed organ-level geometry (leaves, stems) |
| Primary Limitation | Time-intensive setup/registration; occlusion in dense canopies | Limited penetration to lower canopy; payload/flight-time constraints | Artificial growth environment; limited to potted plants |
| Ideal Research Use | Allometric modeling, biomass estimation, detailed architecture | Canopy height modeling, lodging assessment, field-scale LAI | Leaf inclination, phyllotaxy, detailed morphological genetics |
| Example Systems | RIEGL VZ-400, FARO Focus, Leica BLK360 | RIEGL miniVUX, Velodyne Puck series, Livox Mid-70 | Custom gantry systems, high-precision arm scanners (e.g., Creaform) |

Table 2: Quantitative Data Output Comparison for a Representative Maize Plant

| Measured Parameter | TLS | UAV-LiDAR | Lab-Based System |
|---|---|---|---|
| Point Cloud Density (pts/cm²) | 50-200 | 5-20 | 500-2000 |
| Stem Diameter Error | ±1.5 mm | ±15 mm (if detected) | ±0.2 mm |
| Canopy Height Estimate Error | ±2 cm | ±5 cm | ±1 mm (lab context) |
| Data Acquisition Time | 30 mins (multi-scan) | 5 mins (flight time) | 10-60 mins (scan time) |
| Post-Processing Complexity | High (registration, filtering) | Moderate (trajectory correction, noise removal) | Low to Moderate (noise removal) |

Experimental Protocols

Protocol 1: Multi-Scan TLS for Whole-Plant Architecture

Objective: To generate a complete, occlusion-minimized 3D point cloud of a tree or large row-crop plant for structural parameter extraction.

Materials: TLS (e.g., RIEGL VZ-400), tripod, calibration targets (spheres/checkerboards), laptop with registration software (e.g., RiSCAN PRO, CloudCompare).

Procedure:

- Site Reconnaissance: Identify scanning locations around the target plant to ensure coverage from all sides and under the canopy.

- Target Placement: Place 4-6 high-contrast calibration targets in stable positions visible from multiple scan stations.

- Scanning:

- Set up TLS on tripod at the first station.

- Configure scan resolution (e.g., 0.05° angular step) and quality settings.

- Execute scan. Record station ID.

- Station Repetition: Move the TLS to subsequent stations (typically 4-8 stations). Ensure that at least 3 targets seen from the previous station are also visible from each new station.

- Data Registration:

- Import all scans into registration software.

- Use target-based or cloud-to-cloud (ICP) algorithms to co-register all scans into a unified coordinate system.

- Verify registration error (< 5 mm RMS is ideal).

- Data Cleaning: Manually remove gross outliers (e.g., distant objects) and apply statistical outlier removal filters to reduce noise.

- Export: Export the registered, clean point cloud in a standard format (e.g., .las, .ply).
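Target-based registration amounts to finding the rigid rotation and translation that best map the target centres seen from one station onto the same targets seen from another; the RMS of the remaining residuals is the registration error checked against the 5 mm guideline. The sketch below uses the SVD-based Kabsch solution and assumes the targets are already matched one-to-one; it illustrates the principle and is not the algorithm used inside RiSCAN PRO or CloudCompare. Coordinates and noise level are synthetic assumptions.

```python
import numpy as np


def register_targets(source, target):
    """Rigid (R, t) minimising sum ||R @ s_i + t - t_i||² over matched targets (Kabsch/SVD).

    source, target: (N, 3) arrays of the same N >= 3 non-collinear target centres,
    e.g. sphere centres seen from the new station and from the previous station.
    Returns the rotation, the translation and the RMS residual (same units as input).
    """
    src_c, tgt_c = source.mean(axis=0), target.mean(axis=0)
    H = (source - src_c).T @ (target - tgt_c)
    U, _, Vt = np.linalg.svd(H)
    d = np.sign(np.linalg.det(Vt.T @ U.T))              # -1 would indicate a reflection
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    t = tgt_c - R @ src_c
    residuals = np.linalg.norm(source @ R.T + t - target, axis=1)
    return R, t, float(np.sqrt(np.mean(residuals**2)))


# Four sphere centres (m) seen from station 2, and the same spheres seen from station 1.
rng = np.random.default_rng(8)
station2 = np.array([[0.0, 0.0, 0.0], [4.0, 0.0, 0.5], [0.0, 5.0, 1.0], [3.0, 3.0, 2.0]])
angle = np.radians(30.0)
R_true = np.array([[np.cos(angle), -np.sin(angle), 0.0],
                   [np.sin(angle), np.cos(angle), 0.0],
                   [0.0, 0.0, 1.0]])
station1 = station2 @ R_true.T + np.array([5.0, -2.0, 0.3])
station1 += rng.normal(0.0, 0.001, station1.shape)   # ~1 mm target-centre error

R, t, rms = register_targets(station2, station1)
print(f"RMS = {rms * 1000:.1f} mm ->", "OK" if rms < 0.005 else "re-check targets")
```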

Protocol 2: UAV-LiDAR for Canopy Height Model (CHM) Generation

Objective: To rapidly assess canopy height and variability across a field plot.

Materials: UAV platform (multi-rotor or fixed-wing), UAV-LiDAR payload with IMU/GNSS, ground control points (GCPs), GNSS base station, processing software (e.g., GreenValley LiDAR360).

Procedure:

- Mission Planning:

- Define flight area in mission planning software.

- Set flight altitude (e.g., 50m AGL) and speed to achieve desired point density and overlap (≥50% sidelap).

- Ensure IMU/GNSS system is properly initialized.

- Ground Control: Survey in 4-5 GCPs (checkerboards) across the site using RTK-GNSS for high-accuracy positioning.

- Pre-Flight Check: Verify battery levels, sensor operation, and storage capacity. Perform IMU calibration if required.

- Data Acquisition: Execute autonomous flight. Monitor real-time telemetry.

- Post-Processing:

- Trajectory Processing: Process raw GNSS/IMU data using the base station log to derive a precise sensor trajectory (POS file).

- Point Cloud Generation: Fuse trajectory data with LiDAR ranges to generate georeferenced point cloud.

- Classification: Use algorithms to classify ground points (e.g., cloth simulation filter).

- DEM/DSM Creation: Interpolate ground points into a Digital Elevation Model (DEM) and all first-return points into a Digital Surface Model (DSM).

- CHM Calculation: Generate Canopy Height Model by subtracting DEM from DSM (CHM = DSM - DEM).

Protocol 3: Lab-Based LiDAR for Leaf-Level Phenotyping

Objective: To acquire a millimeter-resolution point cloud of an excised leaf or small plant for surface morphology and area analysis.

Materials: High-precision laser scanner mounted on a robotic gantry or articulated arm, controlled rotation stage, dark enclosure, calibration panel, PC with control software.

Procedure:

- System Calibration: Perform manufacturer-specified intrinsic (scanner) and extrinsic (gantry/arm) calibration using provided calibration artifacts.

- Sample Mounting: Securely mount the plant pot or excised leaf on the rotation stage. For leaves, use non-reflective, low-profile mounts.

- Scan Planning:

- Define scanner path or set of viewpoints to cover all sample surfaces.

- Set laser power and exposure to avoid saturation while ensuring good return signal.

- Data Acquisition:

- Initiate automated scan sequence. The system will move the scanner and/or rotate the stage to capture data from all angles.

- Monitor for occlusions or scanner errors.

- Point Cloud Registration: Automatic registration is typically performed in real-time by the system software using encoder data or targetless features.

- Mesh Reconstruction & Analysis: Use software (e.g., MeshLab, PlantIT) to create a watertight 3D mesh from the point cloud. Calculate parameters like surface area, volume, and curvature.
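Once a mesh exists, surface area is the sum of triangle areas, and the enclosed volume can be obtained from the divergence theorem as a sum of signed tetrahedra. The sketch assumes a triangle mesh given as vertex and face arrays; the volume is only meaningful for a watertight, consistently oriented mesh, which is one reason this protocol asks for a watertight reconstruction. A unit cube serves as a sanity check.

```python
import numpy as np


def mesh_area_volume(vertices, faces):
    """Surface area and enclosed volume of a triangle mesh (vertices (V, 3), faces (F, 3) int)."""
    a, b, c = (vertices[faces[:, k]] for k in range(3))
    cross = np.cross(b - a, c - a)
    area = 0.5 * np.linalg.norm(cross, axis=1).sum()
    # Signed volume of each tetrahedron (origin, a, b, c) is a . ((b - a) x (c - a)) / 6.
    volume = np.einsum("ij,ij->i", a, cross).sum() / 6.0
    return float(area), float(abs(volume))


# Unit cube, 12 outward-facing triangles, as a sanity check.
cube_v = np.array([[0, 0, 0], [1, 0, 0], [1, 1, 0], [0, 1, 0],
                   [0, 0, 1], [1, 0, 1], [1, 1, 1], [0, 1, 1]], dtype=float)
cube_f = np.array([[0, 2, 1], [0, 3, 2], [4, 5, 6], [4, 6, 7],
                   [0, 1, 5], [0, 5, 4], [3, 6, 2], [3, 7, 6],
                   [0, 4, 7], [0, 7, 3], [1, 2, 6], [1, 6, 5]])
print(mesh_area_volume(cube_v, cube_f))
```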

Visualization of Workflow Selection

Diagram Title: LiDAR Platform Selection Workflow for Plant Phenotyping

The Scientist's Toolkit: Key Reagents & Materials

Table 3: Essential Research Reagent Solutions for LiDAR Plant Phenotyping

| Item | Function & Brief Explanation |
|---|---|
| Calibration Spheres/Targets | High-contrast, geometrically known objects (e.g., spheres, checkerboards) placed in a scan scene to provide reference points for accurate co-registration of multiple TLS scans. |
| Ground Control Points (GCPs) | Physically marked points (e.g., checkerboard panels) with precisely surveyed coordinates (via RTK-GNSS). Critical for georeferencing and accuracy assessment of UAV-LiDAR data. |
| Spectralon Diffuse Reflectance Panel | A lab-grade, near-Lambertian surface with known reflectance properties. Used for radiometric calibration of intensity values in lab-based or TLS systems, enabling material comparison. |
| Anti-Reflective Spray (e.g., Magnesium Oxide) | A temporary, matte-white coating applied to shiny or waxy leaves to reduce specular reflection and laser signal saturation, ensuring accurate 3D point capture. |
| Point Cloud Processing Software (e.g., CloudCompare, LiDAR360) | Essential software suites for filtering, classifying, segmenting, and extracting quantitative metrics (volume, height, density) from raw LiDAR point clouds. |
| Georeferencing Software with IMU/GNSS Processing (e.g., Inertial Explorer) | Specialized software to post-process raw inertial measurement unit (IMU) and global navigation satellite system (GNSS) data from UAVs, producing the precise trajectory needed for point cloud generation. |
| High-Precision Rotation Stage & Mounts | Used in lab-based systems to rotate the sample precisely, allowing for multi-view scanning and complete coverage without occlusion. Non-reflective mounts prevent data artifacts. |

Within the broader thesis on LiDAR for 3D plant architecture measurement, precise quantification of structural traits is fundamental. Traditional methods for measuring traits like height, biomass, and Leaf Area Index (LAI) are often destructive, labor-intensive, and spatially limited. LiDAR (Light Detection and Ranging) emerges as a core remote sensing technology capable of capturing precise, three-dimensional point clouds of plant structures. This application note defines key plant architecture traits, details what LiDAR systems actually measure to derive them, and provides standardized protocols for data acquisition and analysis, targeting the needs of research scientists in agronomy, ecology, and drug development (e.g., for botanical pharmaceuticals).

Defined Traits and LiDAR Measurement Principles

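LiDAR sensors measure distance from the round-trip time-of-flight of emitted laser pulses (phase-shift scanners instead infer it from the phase difference of a modulated beam). Each range, combined with the beam's known angular direction, yields one 3D point, and together the returns form a point "cloud" representing physical surfaces. The following core traits are derived computationally from this primary data.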
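As a worked example of the time-of-flight principle, range follows from d = c·Δt / 2, because the pulse travels to the surface and back. The minimal Python sketch below converts between round-trip time and range; the function names are illustrative, and it ignores the small correction for the refractive index of air.

```python
# Speed of light in vacuum (m/s); the refractive index of air (~1.0003)
# is ignored in this sketch.
SPEED_OF_LIGHT = 299_792_458.0


def tof_range(round_trip_time_s: float) -> float:
    """One-way range (m) from a pulse's round-trip time (s)."""
    # The pulse travels out and back, so halve the total path length.
    return SPEED_OF_LIGHT * round_trip_time_s / 2.0


def round_trip_time(range_m: float) -> float:
    """Inverse: round-trip time (s) for a surface at range_m metres."""
    return 2.0 * range_m / SPEED_OF_LIGHT


# A leaf 10 m away returns the pulse after roughly 66.7 ns.
t = round_trip_time(10.0)
print(f"{t * 1e9:.1f} ns -> {tof_range(t):.3f} m")  # prints: 66.7 ns -> 10.000 m
```

A 1 ns timing error corresponds to roughly 15 cm of range, so millimetre-level ranging requires effective timing resolution of a few picoseconds (1 mm of range is about 6.7 ps of round-trip time).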

Table 1: Plant Architecture Traits and LiDAR Measurement Basis

| Trait | Definition | What LiDAR Directly Measures | Common Derivation Method |
|---|---|---|---|
| Canopy Height | Vertical distance from ground to top of canopy. | Range to the highest non-noise returns per unit area. | CHM = DSM (Digital Surface Model) − DTM (Digital Terrain Model). |
| Canopy Height Model (CHM) | Raster map of canopy height above ground. | 3D point cloud, subsequently classified into ground and vegetation points. | Interpolation of normalized points (height above ground) into a raster. |
| Leaf Area Index (LAI) | One-sided leaf area per unit ground area (m²/m²). | Pulse penetration through the canopy, from which gap fraction is computed. | Inversion of LiDAR-derived gap probability using Beer–Lambert law models. |
| Plant & Canopy Volume | Total 3D space occupied by plant biomass. | Dense point cloud delineating the outer envelope of the plant. | Voxelization (3D pixel count) or convex/alpha hull algorithms. |
| Above-Ground Biomass (AGB) | Dry mass of living plant material per unit area. | Canopy volume, height, and structural density proxies. | Allometric equations using LiDAR-derived metrics (e.g., Volume × Density). |
| Canopy Cover & Gap Fraction | Percentage of ground covered by the vertical projection of the canopy; proportion of sky visible through the canopy. | Classification of returns into ground hits vs. vegetation-obstructed hits. | Gap fraction = ground returns / total returns; canopy cover = 1 − gap fraction. |
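The Beer–Lambert inversion used for LAI can be written out explicitly. One common form, given here as a sketch rather than the only model in use, relates the gap probability at zenith angle θ to leaf area through the leaf projection function G(θ) and a clumping index Ω:

```latex
P_{\mathrm{gap}}(\theta) = \exp\!\left(-\frac{G(\theta)\,\Omega\,\mathrm{LAI}}{\cos\theta}\right)
\quad\Longrightarrow\quad
\mathrm{LAI} = -\frac{\cos\theta\,\ln P_{\mathrm{gap}}(\theta)}{G(\theta)\,\Omega}
```

With Ω = 1 (no clumping correction) the result is the effective LAI (LAIe), and for a spherical leaf angle distribution G(θ) = 0.5 at every angle. For example, a nadir (θ = 0) gap fraction of 0.2 with G = 0.5 and Ω = 1 gives LAIe = −ln(0.2) / 0.5 ≈ 3.2. Because LiDAR gap fractions also include stems and branches, the result is strictly a plant area index unless woody returns are removed.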

Experimental Protocols for Trait Extraction

Protocol 1: Terrestrial Laser Scanning (TLS) for Single-Plant Architecture

Objective: To generate high-resolution 3D models of individual plants for volume, LAI, and branch architecture analysis. Materials: See The Scientist's Toolkit. Procedure:

- Site Setup: Place scan targets (retroreflective spheres or checkerboards) around the plant for multi-scan registration.

- Scanner Setup: Position the TLS on a stable tripod. Perform a test scan to ensure the entire plant is within the field of view.

- Multi-Scan Acquisition: Scan from a minimum of 3 positions (typically 120° apart) to fill occlusion gaps. Ensure ≥30% overlap between adjacent scans, with shared targets visible from each position.

- Data Processing:

  - Registration: Use scanner software to align all scans into a single coordinate system via target matching.

  - Noise Filtering: Apply statistical outlier removal to eliminate spurious points.

  - Classification: Use a height-based or clustering algorithm to separate plant points from ground and background.

- Trait Extraction:

  - Volume: Apply a convex hull or voxel-based occupancy algorithm to the classified plant point cloud.

  - LAI: Use a voxel-based approach to model leaf area density (LAD) within defined layers, then sum LAD × layer thickness over all layers to obtain LAI.
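The voxel-based occupancy step can be sketched in a few lines of Python. This is a minimal illustration, assuming the plant points are already segmented and expressed in metres; the function name and the 1 cm voxel size in the example are hypothetical choices, not values from the protocol.

```python
import math


def voxel_volume(points, voxel_size):
    """Estimate plant volume by counting occupied cubic voxels.

    points     -- iterable of (x, y, z) coordinates in metres
    voxel_size -- voxel edge length in metres
    Returns (number of occupied voxels, volume in cubic metres).
    """
    occupied = set()
    for x, y, z in points:
        # Integer index of the voxel that contains this point.
        occupied.add((math.floor(x / voxel_size),
                      math.floor(y / voxel_size),
                      math.floor(z / voxel_size)))
    return len(occupied), len(occupied) * voxel_size ** 3


# Points filling a 2 x 2 x 2 cm block, two points per voxel at 1 cm resolution.
pts = [(0.005 + i * 0.01, 0.005 + j * 0.01, 0.005 + k * 0.01 + dz)
       for i in range(2) for j in range(2) for k in range(2) for dz in (0.0, 0.002)]
n_vox, vol = voxel_volume(pts, voxel_size=0.01)
print(n_vox, f"{vol * 1e6:.1f} cm^3")  # prints: 8 8.0 cm^3
```

Because TLS samples surfaces, occupied voxels approximate the shell of the plant at the chosen resolution rather than an enclosed volume. The estimate therefore changes with voxel size and is best treated as a relative metric or calibrated against destructive measurements.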

Protocol 2: UAV LiDAR for Plot-Level Canopy Metrics

Objective: To measure canopy height, biomass, and LAI at the plot or small field scale. Materials: See The Scientist's Toolkit. Procedure:

- Flight Planning: Design a parallel line flight pattern with ≥50% side overlap and ≥70% forward overlap at the recommended altitude for the sensor's beam divergence.

- Ground Control: Survey in permanent Ground Control Points (GCPs) with RTK/GNSS for georeferencing.

- Data Acquisition: Fly mission under stable, low-wind conditions. Ensure the scanner's pulse repetition rate and scan frequency are set to achieve a target point density of ≥100 pts/m².

- Data Processing:

  - Trajectory Processing: Process IMU and GNSS data to derive the precise sensor position and orientation (trajectory).

  - Point Cloud Generation: Fuse the trajectory with range data to generate a georeferenced point cloud in LAS/LAZ format.

  - Ground Classification: Classify ground points using an algorithm such as the Progressive Morphological Filter.

  - Normalization: Calculate height above ground for all non-ground points.

  - Rasterization: Generate a 10 cm resolution Canopy Height Model (CHM) from normalized maximum heights.

- Trait Extraction:

  - Height Percentiles: Calculate height percentiles (e.g., 50th, 75th, 95th) from the normalized point cloud within plot boundaries.

  - Canopy Cover: Calculate as 1 − (ground-classified returns / total returns) within a plot.
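The two trait-extraction formulas above can be sketched in plain Python. This assumes the returns are already normalized (height above ground) and clipped to the plot boundary; as a labeled assumption, percentiles are computed on vegetation (non-ground) returns only. Function names and sample heights are illustrative.

```python
import math


def percentile(values, q):
    """Percentile with linear interpolation between order statistics
    (the same convention as NumPy's default 'linear' method)."""
    data = sorted(values)
    if not data:
        raise ValueError("percentile of empty sequence")
    pos = (len(data) - 1) * q / 100.0
    lo, hi = math.floor(pos), math.ceil(pos)
    return data[lo] + (data[hi] - data[lo]) * (pos - lo)


def plot_metrics(heights, is_ground, qs=(50, 75, 95)):
    """Height percentiles and canopy cover for the returns inside one plot.

    heights   -- normalized height above ground (m) of every return
    is_ground -- parallel booleans from ground classification
    """
    # Assumption: percentiles use vegetation returns only.
    veg = [h for h, g in zip(heights, is_ground) if not g]
    metrics = {f"P{q}": percentile(veg, q) for q in qs}
    n_ground = sum(1 for g in is_ground if g)
    # Canopy cover = 1 - (ground-classified returns / total returns)
    metrics["canopy_cover"] = 1.0 - n_ground / len(is_ground)
    return metrics


heights = [0.00, 0.01, 0.10, 0.20, 0.30, 0.40, 0.50]
is_ground = [True, True, False, False, False, False, False]
m = plot_metrics(heights, is_ground)
print({k: round(v, 3) for k, v in m.items()})
# prints: {'P50': 0.3, 'P75': 0.4, 'P95': 0.48, 'canopy_cover': 0.714}
```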

Visualization of Workflows

Title: LiDAR Trait Extraction Workflow from Acquisition to Results

Title: From Point Cloud to Canopy Height Model (CHM)

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for LiDAR Plant Architecture Studies

| Item / Solution | Function / Purpose |
|---|---|
| Terrestrial Laser Scanner (e.g., FARO Focus, RIEGL VZ-400) | High-precision, static ground-based system for mm-resolution 3D models of individual plants and plots. |
| UAV-borne LiDAR Sensor (e.g., Routescene LidarPod, YellowScan Mapper) | Airborne system for efficient, plot-to-field scale 3D data capture, measuring canopy top and sub-canopy structure. |
| Retroreflective Scan Targets | Used as stable, high-visibility reference points for accurate co-registration of multiple TLS scans. |
| RTK/GNSS Survey System (e.g., Trimble R12, Emlid Reach RS3) | Provides centimeter-accuracy geolocation for Ground Control Points (GCPs) and direct georeferencing of UAV platforms. |
| Point Cloud Processing Software (e.g., CloudCompare, LiDAR360, LAStools) | Open-source and commercial software suites for visualization, classification, filtering, and metric extraction from point clouds. |
| Voxel-Based Analysis Code (e.g., Computree, DART model) | Specialized computational tools for discretizing point clouds into 3D voxels to estimate leaf area density and LAI profiles. |
| Allometric Equation Database | Plant species-specific equations that convert LiDAR-derived structural metrics (height, volume) into biomass estimates. |

Implementing a LiDAR Phenotyping Pipeline: From Data Capture to 3D Model Extraction

Application Notes

High-fidelity 3D plant architecture measurement using LiDAR is critical for phenotyping, growth modeling, and quantifying biotic/abiotic stress responses. The accuracy of these measurements is fundamentally dependent on the scanning environment, which must be optimized to minimize noise and maximize signal from the target phenotype. This document outlines best practices for field and controlled environment setups, framed within a thesis on advancing LiDAR for 3D plant architecture research.

Environmental Parameter Optimization

The following parameters directly influence LiDAR point cloud quality and must be documented and controlled.

Table 1: Critical Environmental Parameters & Target Ranges

| Parameter | Controlled Environment Target | Field Notes | Impact on LiDAR Data |
|---|---|---|---|
| Lighting | Consistent, diffuse artificial light (150–250 µmol m⁻² s⁻¹ PAR). | Scan at dawn/dusk or under uniform overcast. Avoid direct sun. | Strong direct sunlight adds background noise at the laser wavelength and can saturate the receiver. Hard shadows and uneven light mainly distort RGB colorization rather than the measured geometry. |
| Ambient Motion | Zero air movement (fans off). | Scan during low wind periods (< 1 m/s). Use windbreaks if possible. | Wind-induced plant movement causes motion blur & point cloud distortion. |
| Platform Stability | Vibration-damped scanning platform. | Use tripods with weighted centers. Isolate from ground vibration. | Micro-vibrations lead to point jitter and registration errors. |
| Background | Non-reflective, matte black backdrop (NIR reflectance <5%). | Use portable black screens. Maximize distance to non-target vegetation. | High-contrast backdrop simplifies segmentation. Reduces multipath & stray light errors. |
| Target Reflectivity | Use calibration panels (Spectralon) of known reflectance (e.g., 20%, 50%). | Attach fiducial markers (retro-reflective tape) to non-plant structures. | Enables radiometric correction and point cloud normalization across scans. |
| Spatial Referencing | Fixed, surveyed ground control points (GCPs) with known coordinates. | Use permanent GNSS-referenced targets or fixed stakes with targets. | Enables precise multi-temporal scan alignment and georeferencing. |

System Calibration & Validation Protocol

Objective: To ensure metric accuracy and repeatability of LiDAR-derived structural traits.

Protocol 2.1: Pre-Deployment System Calibration

- Range & Intensity Calibration: Scan a flat panel at known distances (e.g., 1 m, 2 m, 5 m) under controlled light. Record raw intensity values. Use manufacturer software to generate distance-correction and intensity normalization functions.

- Angular Accuracy Check: Scan a precise grid pattern of high-contrast targets. Compare the derived angular separations between target centroids with the known values. The deviation should be smaller than the sensor's specified angular accuracy.

- Multi-Scanner Registration (if applicable): For multi-view systems, scan a sphere or checkerboard target visible to all scanners. Use target centroids to compute the rigid transformation matrix between scanner coordinate systems.
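The rigid transformation in the multi-scanner step can be estimated from matched target centroids with the SVD-based Kabsch method, one standard least-squares solution (our choice for illustration; scanner software may use a different solver). A minimal NumPy sketch with hypothetical target coordinates:

```python
import numpy as np


def rigid_transform(src, dst):
    """Least-squares R, t such that dst ~= R @ p + t for matched points.

    src, dst -- (N, 3) arrays of corresponding target centroids
                (N >= 3, not all collinear). SVD-based Kabsch solution.
    """
    src = np.asarray(src, dtype=float)
    dst = np.asarray(dst, dtype=float)
    src_c, dst_c = src.mean(axis=0), dst.mean(axis=0)
    # 3x3 cross-covariance of the centred correspondences.
    H = (src - src_c).T @ (dst - dst_c)
    U, _, Vt = np.linalg.svd(H)
    # Flip the last axis if needed so R is a proper rotation (det = +1).
    d = np.sign(np.linalg.det(Vt.T @ U.T))
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    t = dst_c - R @ src_c
    return R, t


def to_homogeneous(R, t):
    """Pack R and t into a 4x4 homogeneous transformation matrix."""
    T = np.eye(4)
    T[:3, :3] = R
    T[:3, 3] = t
    return T


# Hypothetical sphere centroids seen by scanner A, and the same spheres in
# scanner B's frame (rotated 30 degrees about z, then shifted).
theta = np.radians(30.0)
R_true = np.array([[np.cos(theta), -np.sin(theta), 0.0],
                   [np.sin(theta),  np.cos(theta), 0.0],
                   [0.0,            0.0,           1.0]])
t_true = np.array([1.2, -0.4, 0.05])
targets_a = np.array([[0.0, 0.0, 0.0], [1.0, 0.0, 0.2],
                      [0.0, 1.0, 0.4], [1.0, 1.0, 0.0]])
targets_b = targets_a @ R_true.T + t_true

R, t = rigid_transform(targets_a, targets_b)
print(np.round(to_homogeneous(R, t), 4))
```

With real, noisy centroids the result is a least-squares fit, and the per-target residuals `np.linalg.norm(targets_a @ R.T + t - targets_b, axis=1)` show how well the targets agree.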

Protocol 2.2: In-Situ Validation Using Dimensional Standards

- Materials: Set of certified gauge blocks or 3D-printed objects of known dimensions (e.g., a tetrahedron with 300 mm edges).

- Procedure: Place dimensional standards within the scan volume, adjacent to but not occluding the plant target.

- Scan & Analyze: Perform a standard scan. In the resulting point cloud, manually or algorithmically measure the distances between defined vertices on the standard object.

- Validation Metric: Calculate the Root Mean Square Error (RMSE) of measured vs. known dimensions. Acceptance Criterion: RMSE ≤ 2× the sensor's stated single-point precision. Document this value for each scan session.

Table 2: Example Validation Results for a Terrestrial Laser Scanner

| Standard Object | Known Length (mm) | Measured Length (mm) | Absolute Error (mm) |
|---|---|---|---|
| Gauge Block A | 100.00 | 100.23 | 0.23 |
| Gauge Block B | 200.00 | 199.62 | 0.38 |
| Tetrahedron Edge | 300.00 | 299.75 | 0.25 |
| Session RMSE | | | 0.29 |
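The Protocol 2.2 validation metric is a plain root mean square of the length errors. A minimal sketch applied to the three example rows of Table 2; the function names are illustrative, and the 0.5 mm single-point precision is a hypothetical placeholder, not a value from the text.

```python
import math


def validation_rmse(known_mm, measured_mm):
    """Root mean square error between known and measured lengths (mm)."""
    errors = [m - k for k, m in zip(known_mm, measured_mm)]
    return math.sqrt(sum(e * e for e in errors) / len(errors))


def passes_acceptance(rmse_mm, single_point_precision_mm):
    """Acceptance criterion: RMSE <= 2 x stated single-point precision."""
    return rmse_mm <= 2.0 * single_point_precision_mm


known = [100.00, 200.00, 300.00]
measured = [100.23, 199.62, 299.75]
rmse = validation_rmse(known, measured)
print(f"Session RMSE: {rmse:.3f} mm")
# Hypothetical sensor precision of 0.5 mm, for illustration only.
print("Pass" if passes_acceptance(rmse, 0.5) else "Fail")
```

These three rows give an RMSE of about 0.29 mm.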

Experimental Protocols

Protocol A: Controlled Growth Chamber Plant Scanning

Objective: To acquire temporally sequential 3D point clouds of a single plant with high precision for architectural trait extraction.

Workflow Diagram Title: Controlled Chamber LiDAR Scanning Workflow

Detailed Methodology:

- Pre-Scan Preparation: Water plants at least 2 hours prior to scanning to allow for potential guttation to cease. Ensure all chamber environmental controls (light, humidity, temperature) are at setpoints and have been stable for >30 minutes.

- Sensor Positioning: Mount the LiDAR sensor on a stable tripod or gantry. The distance to the plant should be optimized for the desired point density (e.g., ≥10 pts/cm² on leaf surface). Ensure the laser plane/beam does not intersect watering systems or chamber walls.

- Background Setup: Deploy a non-reflective black curtain behind and to the sides of the plant to create a high-contrast backdrop.

- Fiducial Marker Placement: Affix at least three retro-reflective fiducial markers on the pot or growth platform (not on the plant). These will serve as stable reference points for multi-temporal registration.

- Execute Scanning Workflow: Follow the workflow detailed in the diagram above (Protocol A Diagram). For multi-view scanning, rotate the plant platform or move the sensor to achieve >60% overlap between scans.

- Data Processing: Register multi-view scans using fiducial markers or the iterative closest point (ICP) algorithm. Apply a noise filter (e.g., Statistical Outlier Removal). Segment the plant point cloud from the pot and background using color or reflectance differences.

Protocol B: Field-Based Phenotyping Plot Scanning

Objective: To obtain georeferenced 3D point clouds of multiple plants within a plot for canopy architecture and biomass estimation.

Workflow Diagram Title: Field Plot LiDAR Scanning Protocol

Detailed Methodology:

- Ground Control Point (GCP) Establishment: Prior to scanning, install a minimum of four permanent, stable targets (e.g., checkerboards on stakes) around the plot perimeter. Survey their precise 3D coordinates using a GNSS receiver with Real-Time Kinematic (RTK) correction.

- Weather Window Selection: Scan during periods of minimal wind (<1.5 m/s) and under uniform cloud cover (diffuse light). Early morning is often optimal.

- Scanner Network Design: Plan scanning stations to ensure every part of the plot is covered by at least two scans from different angles. Stations should be placed on stable ground.

- Scan Execution: At each station, level the scanner, perform a system check, and acquire a scan ensuring all GCPs and the target plot are clearly visible. Record station log.

- Data Processing: Register all individual scans into a unified coordinate system using the surveyed GCP coordinates. Apply ground-filtering algorithms (e.g., the Cloth Simulation Filter, CSF) to separate canopy from ground points. Segment individual plants using canopy height model-based watershed segmentation or deep learning approaches.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for LiDAR Plant Scanning

| Item | Function & Specification | Application Context |
|---|---|---|
| Terrestrial Laser Scanner (TLS) | High accuracy (about 1–2 mm ranging error at 10 m), ideally full-waveform or multi-echo capable. E.g., FARO Focus, Leica RTC360. | Field & Controlled. Primary data acquisition. Waveform or multi-echo recording helps resolve several surfaces along one beam in dense canopies. |
| Mobile Handheld Scanner | Structured light or LiDAR-based. E.g., iPhone LiDAR, GeoSLAM ZEB Horizon. | Controlled & Small Plots. For rapid, close-range scanning of individual plants or small canopies. |
| Spectralon Calibration Panels | Diffuse reflectance standard panels (e.g., 20%, 50%, 99% reflectance). | Controlled. For radiometric calibration of LiDAR intensity values, enabling leaf moisture or chlorophyll estimation. |
| Retro-Reflective Fiducial Markers | Spherical or planar targets that reflect light directly back to the source. | Controlled & Field. Provide high-contrast, stable points for precise multi-scan registration and tracking. |
| Portable Black Backdrop | Matte black fabric with low NIR reflectance (<5%) on a foldable frame. | Field. Creates a controlled background for individual plant scanning, simplifying segmentation. |
| GNSS RTK System | High-precision global navigation satellite system with real-time kinematic correction (e.g., Trimble R12). | Field. Provides geospatial reference for GCPs and scan positions, enabling geotagging and multi-season alignment. |
| Dimensional Validation Kit | Set of gauge blocks or 3D-printed geometric shapes with certified dimensions. | Controlled & Field. Used in Protocol 2.2 for in-situ verification of scanning metric accuracy. |
| Vibration-Damped Tripod | Heavy-duty tripod with a center hook for adding weight (e.g., sandbag). | Field. Provides stability in windy conditions, minimizing point cloud jitter. |

Within the context of LiDAR for 3D plant architecture measurement in plant phenotyping and drug development research, high-quality data acquisition is foundational. These protocols detail standardized methodologies to ensure point clouds are of sufficient quality, accuracy, and repeatability for quantitative trait extraction, essential for tracking phenotypic changes in response to genetic modifications or therapeutic compounds.

Protocol: Pre-Acquisition Site & System Calibration

Objective: Establish a controlled environment and verified sensor state to minimize systematic error.

Detailed Methodology:

- Control Target Placement: Prior to scanning, place a minimum of five (5) high-contrast spherical targets (e.g., 14.5 cm diameter) on stable tripods within and around the plant canopy perimeter. Their precisely surveyed 3D coordinates (via total station or high-precision GNSS) form the basis for point cloud registration and accuracy assessment.

- System Warm-up & Verification: Power on the terrestrial laser scanner (TLS) or scanning system for a minimum of 15 minutes to stabilize internal electronics. Execute the manufacturer's built-in system self-check and calibration routine (e.g., inclination sensor calibration, vertical/horizontal circle correction).

- Environmental Logging: Record ambient conditions: temperature (°C), relative humidity (%), and light intensity (lux). Note any wind speed (m/s) that may induce plant movement.

Protocol: Multi-Scan Registration & Co-Registration Workflow

Objective: Merge multiple scans from different positions into a single, coherent dataset and align repeat scans for temporal comparison.

Detailed Methodology:

- Scanning Network Design: Plan scanning positions to ensure >30% overlap between adjacent scans and line-of-sight to a minimum of three (3) common control targets from each position.

- Data Capture: At each station, acquire a high-resolution scan (e.g., 1 mm point spacing at 10 m). Ensure scan settings (e.g., pulse repetition frequency, quality setting) are identical for all scans in a session.

- Target-Based Registration: In processing software (e.g., CloudCompare, Cyclone), import all scans. Using the sphere-to-sphere or target-to-target method, register each scan to the global coordinate system defined by the surveyed control points. Accept the registration if its Root Mean Square (RMS) error is ≤ 2 mm.

- Co-Registration for Time Series: To compare plant architecture over time (e.g., Day 0, Day 7, Day 14), use a permanent, stable subset of control targets or ground features as an unchanging reference frame. Align all temporal datasets to this common frame.
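A resolution setting such as 1 mm point spacing at 10 m fixes the angular step between adjacent beams, so point spacing grows linearly with range. The sketch below converts between the two for a surface facing the scanner; it is a geometric approximation, and the helper names are illustrative.

```python
import math


def angular_step_from_spec(spacing_m, at_range_m):
    """Angular step (rad) implied by a spec such as '1 mm @ 10 m'."""
    return 2.0 * math.atan(spacing_m / (2.0 * at_range_m))


def point_spacing(range_m, angular_step_rad):
    """Spacing (m) between adjacent points on a surface normal to the beam."""
    return 2.0 * range_m * math.tan(angular_step_rad / 2.0)


def points_per_cm2(spacing_m):
    """Approximate point density for a square sampling grid."""
    spacing_cm = spacing_m * 100.0
    return 1.0 / spacing_cm ** 2


step = angular_step_from_spec(0.001, 10.0)  # about 0.1 mrad
for r in (1.0, 2.0, 5.0, 10.0):
    s = point_spacing(r, step)
    print(f"{r:4.1f} m: spacing {s * 1000:.2f} mm, ~{points_per_cm2(s):.0f} pts/cm^2")
```

On a surface tilted by an angle α away from the beam, spacing along the tilt direction grows roughly as 1/cos α, and beam divergence can blur detail below the nominal spacing, so real leaf-surface densities are lower than these figures.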

Title: Multi-Scan Registration Workflow for Coherent Point Clouds

Protocol: Quantitative Accuracy & Precision Assessment

Objective: Quantify the spatial error and repeatability of the acquired point cloud data.

Detailed Methodology:

- Accuracy (Trueness) Measurement: After registration, extract the scanned centroids of the control targets. Calculate the Euclidean distance between each scanned centroid and its corresponding surveyed coordinate. Report the mean, standard deviation (SD), and maximum of these residuals.

- Precision (Repeatability) Test: Conduct three (3) consecutive scans of the same static scene from the same position without moving the scanner or targets. Register all three scans to the same control frame. For a stable planar surface (e.g., a wall), fit a plane in each cloud and calculate the standard deviation of point distances to the mean plane fit across all replicates.
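Both assessments reduce to short array computations. This NumPy sketch reports target residual statistics and a plane-fit precision measure. Two assumptions are ours: the sample SD (ddof=1) is reported, and "the mean plane fit across all replicates" is read as one least-squares plane (via SVD) fitted to the pooled replicate clouds. Function names and the synthetic data are illustrative.

```python
import numpy as np


def target_residual_stats(surveyed_m, scanned_m):
    """Euclidean residuals (mm) between surveyed and scanned target centroids."""
    diff = np.asarray(scanned_m, float) - np.asarray(surveyed_m, float)
    res_mm = np.linalg.norm(diff, axis=1) * 1000.0
    # Sample SD (ddof=1) is an assumption; state which one you report.
    stats = {"mean": res_mm.mean(), "sd": res_mm.std(ddof=1), "max": res_mm.max()}
    return res_mm, stats


def plane_fit_sd(replicates):
    """Pool replicate scans of a planar surface, fit one least-squares plane
    via SVD, and return the SD of point-to-plane distances (same units)."""
    pts = np.vstack([np.asarray(r, float) for r in replicates])
    centroid = pts.mean(axis=0)
    # Right singular vector with the smallest singular value = plane normal.
    _, _, vt = np.linalg.svd(pts - centroid, full_matrices=False)
    dist = (pts - centroid) @ vt[-1]
    return dist.std(ddof=1)


res, stats = target_residual_stats([[0.0, 0.0, 0.0], [1.0, 1.0, 1.0]],
                                   [[0.003, 0.004, 0.0], [1.0, 1.0, 1.001]])
print(np.round(res, 3), {k: round(float(v), 3) for k, v in stats.items()})

# Three synthetic replicate scans of a flat 2.0 m x 1.5 m surface with
# 0.5 mm Gaussian range noise (illustrative values).
rng = np.random.default_rng(1)
xx, yy = np.meshgrid(np.linspace(0.0, 2.0, 10), np.linspace(0.0, 1.5, 10))
replicates = [np.column_stack([xx.ravel(), yy.ravel(),
                               rng.normal(0.0, 0.0005, xx.size)])
              for _ in range(3)]
print(f"plane-fit SD: {plane_fit_sd(replicates) * 1000:.2f} mm")
```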

Table 1: Example Accuracy Assessment from Control Targets

| Target ID | Surveyed X (m) | Surveyed Y (m) | Surveyed Z (m) | Scanned X (m) | Scanned Y (m) | Scanned Z (m) | Residual (mm) |
|---|---|---|---|---|---|---|---|
| T1 | 100.000 | 100.000 | 1.500 | 100.001 | 99.999 | 1.501 | 1.6 |
| T2 | 105.000 | 100.000 | 1.500 | 105.001 | 100.001 | 1.498 | 2.2 |
| T3 | 102.500 | 103.000 | 1.500 | 102.499 | 103.002 | 1.502 | 2.3 |
| Mean | - | - | - | - | - | - | 2.0 |
| Std. Dev. | - | - | - | - | - | - | 0.4 |
| Max | - | - | - | - | - | - | 2.3 |

Protocol: Canopy-Optimized Scanning for Plant Architecture

Objective: Maximize coverage and detail of complex, occluded plant structures.

Detailed Methodology:

- Multi-Angle Strategy: Perform scans from at least two (2) heights (e.g., ground level and an elevated platform) and four (4) cardinal directions around the plant of interest.

- Occlusion Minimization: For dense canopies, implement "under-canopy" scanning by placing the scanner low and aiming upwards through gaps in the foliage.

- Resolution Settings: Use the highest angular resolution supported by the scanner for fine structural detail (e.g., twigs, petioles). For a full canopy capture, balance with scan speed to minimize motion artifacts from wind.

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Solutions for LiDAR Plant Phenotyping

| Item | Function & Specification |
|---|---|
| High-Precision Spherical Targets | Artificial, invariant reference points with known radiometric properties for automatic detection and high-accuracy registration. Typically 100–150 mm diameter. |
| Stable Surveying Tripods & Mounts | Provide a vibration-free, stable platform for both scanner and control targets, crucial for repeatability. |
| Total Station (e.g., Leica TS16) | Provides millimeter-accuracy 3D coordinates for control targets, establishing the ground truth reference frame. |
| Portable Environmental Logger | Records micro-climatic conditions (T, RH, light, wind) during scanning to annotate datasets and identify potential error sources (e.g., wind-induced blur). |
| Standardized Calibration Field Artifact | A physical object (e.g., step gauge, flat plane) with certified dimensions used for periodic, independent verification of scanner scale and distance accuracy. |
| Change-Detection Software Module (e.g., CloudCompare 'M3C2' plugin) | Computes robust cloud-to-cloud distances between co-registered time points for change detection and growth measurement; the point clouds must first be aligned (e.g., by target-based registration or ICP). |

This document outlines a standardized protocol for processing 3D LiDAR point cloud data within the context of a thesis focused on high-throughput 3D plant architecture measurement for phenotyping research. Accurate quantification of morphological traits—such as leaf area, stem diameter, and canopy volume—is critical for understanding plant growth, health, and response to therapeutic compounds in agricultural and drug development settings.

Application Notes: Core Workflow & Significance

The sequential workflow of Noise Filtering, Registration, and Segmentation is fundamental to transforming raw, unstructured LiDAR scans into quantifiable, biologically relevant parameters. Noise introduces measurement error, misregistration invalidates multi-temporal comparisons, and without segmentation, individual plant organs cannot be isolated for analysis. This pipeline enables the non-destructive, volumetric measurement of architectural traits at scale, supporting research in plant physiology and the development of bioactive compounds.

Experimental Protocols

Protocol: Noise Filtering for Canopy Point Clouds

Objective: Remove outlier points from raw LiDAR data (e.g., from dust, sensor artifacts, or flying insects) while preserving fine plant structures. Materials: Raw *.las or *.ply point cloud file from terrestrial or mobile LiDAR. Software: CloudCompare (v2.13+), Python with Open3D or PDAL libraries. Procedure:

- Data Import: Load the raw point cloud into the chosen software.

- Statistical Outlier Removal (SOR): a. For each point, compute the mean distance (d_mean) to its k nearest neighbors (e.g., k=20). b. Compute the global mean (μ) and standard deviation (σ) of all d_mean. c. Identify points where d_mean > μ + n × σ. A typical threshold is n=1.5. d. Remove identified outliers.

- Radius-Based Outlier Removal (Optional): a. Apply if sparse noise persists. Count neighbors within a specified radius r (e.g., r=2 mm). b. Remove points with neighbor counts below a threshold (e.g., < 3 points).

- Validation: Visually inspect filtered cloud; ensure thin stems and leaf edges are retained. Calculate percentage of points removed (see Table 1).
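The SOR and radius filters above can be sketched with NumPy using a dense distance matrix, which is only practical for small demonstration clouds; production tools (e.g., the SOR filter in CloudCompare or Open3D's outlier-removal functions) use spatial indices instead. Function names and the synthetic cloud are illustrative, and σ here is the population standard deviation, an assumption the protocol leaves open.

```python
import numpy as np


def _distance_matrix(pts):
    """Dense (N, N) Euclidean distances; O(N^2) memory, demo-sized clouds only."""
    diff = pts[:, None, :] - pts[None, :, :]
    return np.sqrt((diff ** 2).sum(axis=-1))


def statistical_outlier_removal(points, k=20, n_sigma=1.5):
    """Drop points whose mean distance to their k nearest neighbours
    exceeds mu + n_sigma * sigma (steps a-d of the SOR procedure)."""
    pts = np.asarray(points, float)
    # a. mean distance to the k nearest neighbours (column 0 is the point itself)
    d_mean = np.sort(_distance_matrix(pts), axis=1)[:, 1:k + 1].mean(axis=1)
    # b. global mean and (population) standard deviation of those means
    mu, sigma = d_mean.mean(), d_mean.std()
    # c./d. keep only points at or below the threshold
    keep = d_mean <= mu + n_sigma * sigma
    return pts[keep], keep


def radius_outlier_removal(points, radius, min_neighbors=3):
    """Drop points with fewer than min_neighbors other points within radius."""
    pts = np.asarray(points, float)
    counts = (_distance_matrix(pts) <= radius).sum(axis=1) - 1  # exclude self
    keep = counts >= min_neighbors
    return pts[keep], keep


# Synthetic example: 200 points in a 1 cm cube plus 3 distant "dust" returns.
rng = np.random.default_rng(0)
cluster = rng.uniform(0.0, 0.01, size=(200, 3))
dust = np.array([[0.5, 0.5, 0.5], [-0.4, 0.2, 0.1], [0.3, -0.6, 0.2]])
cloud = np.vstack([cluster, dust])

filtered, keep = statistical_outlier_removal(cloud, k=20, n_sigma=1.5)
removed_pct = 100.0 * (1 - keep.mean())
print(f"SOR kept {keep.sum()} of {len(cloud)} points ({removed_pct:.1f}% removed)")

_, keep_r = radius_outlier_removal(cloud, radius=0.005, min_neighbors=3)
print(f"Radius filter kept {keep_r.sum()} points")
```

The demo uses a 5 mm radius because its synthetic points are sparser than a real close-range scan; in practice the radius should be set relative to the actual point spacing.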

Protocol: Multi-View Registration for Complete Plant Reconstruction

Objective: Align multiple, overlapping point clouds from different scanner positions into a single, global coordinate system. Materials: Multiple noise-filtered point clouds of the same plant from different viewpoints. Software: ICP-based tools in CloudCompare, Open3D, or the point cloud library (PCL). Procedure:

- Manual Pre-Alignment (Coarse Registration): Select one cloud as reference. Manually translate/rotate a second cloud to approximately overlap with the reference.

- Automated Fine Registration (Iterative Closest Point - ICP): a. For each point in the source cloud, find the closest point in the target/reference cloud. b. Estimate the optimal rigid transformation (rotation R, translation T) that minimizes the mean squared error between correspondences. c. Apply the transformation to the source cloud. d. Iterate steps a-c until convergence (change in error < 1e-6) or max iterations (e.g., 50) is reached.

- Global Registration (For >2 Scans): Use a strategy (e.g., pairwise sequential, or global graph-based) to register all scans to a common frame.

- Output & Validation: Export the merged point cloud. Quantify registration error as the final Root Mean Square (RMS) point-to-point distance (see Table 1).
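Steps a–d of the fine registration map onto a short point-to-point ICP loop. The NumPy sketch below uses brute-force nearest neighbours and an SVD (Kabsch) solve for the rigid transform. It assumes the clouds are already coarsely pre-aligned, as in the manual step; the names, tolerance, and synthetic data are illustrative.

```python
import numpy as np


def best_fit_transform(src, dst):
    """Kabsch/SVD: R, t minimising sum ||R p + t - q||^2 over matched pairs."""
    src_c, dst_c = src.mean(axis=0), dst.mean(axis=0)
    H = (src - src_c).T @ (dst - dst_c)
    U, _, Vt = np.linalg.svd(H)
    D = np.diag([1.0, 1.0, np.sign(np.linalg.det(Vt.T @ U.T))])
    R = Vt.T @ D @ U.T
    return R, dst_c - R @ src_c


def icp(source, target, max_iter=50, tol=1e-6):
    """Point-to-point ICP aligning source (N, 3) onto target (M, 3).

    Brute-force nearest neighbours (O(N*M) memory) keep the sketch short;
    real scans need a k-d tree. Returns the accumulated 4x4 transform and
    the final RMS point-to-point distance.
    """
    src = np.asarray(source, float).copy()
    tgt = np.asarray(target, float)
    T = np.eye(4)
    prev_err = np.inf
    for _ in range(max_iter):
        # a. closest target point for every source point
        d2 = ((src[:, None, :] - tgt[None, :, :]) ** 2).sum(axis=-1)
        nn = d2.argmin(axis=1)
        err = np.sqrt(d2[np.arange(len(src)), nn].mean())
        # d. stop once the error stops changing (checked before updating)
        if abs(prev_err - err) < tol:
            break
        prev_err = err
        # b. rigid transform minimising the mean squared correspondence error
        R, t = best_fit_transform(src, tgt[nn])
        # c. apply it and fold it into the accumulated transform
        src = src @ R.T + t
        step = np.eye(4)
        step[:3, :3], step[:3, 3] = R, t
        T = step @ T
    d2 = ((src[:, None, :] - tgt[None, :, :]) ** 2).sum(axis=-1)
    return T, float(np.sqrt(d2.min(axis=1).mean()))


rng = np.random.default_rng(42)
source = rng.uniform(0.0, 1.0, size=(200, 3))
angle = np.radians(1.0)
R_true = np.array([[np.cos(angle), -np.sin(angle), 0.0],
                   [np.sin(angle),  np.cos(angle), 0.0],
                   [0.0,            0.0,           1.0]])
t_true = np.array([0.005, -0.003, 0.004])
target = source @ R_true.T + t_true
T, rms = icp(source, target)
print(np.round(T, 4))
print(f"final RMS: {rms:.2e}")
```

ICP only converges to the correct alignment from a reasonable starting pose, which is why the manual pre-alignment step comes first.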

Protocol: Segmentation of Plant Organs

Objective: Classify points in a registered point cloud into categories: stem, leaf, fruit, and soil. Materials: A single, registered, and noise-filtered point cloud of a plant. Software: Python with scikit-learn, PDAL, or custom scripts implementing region-growing or machine learning. Procedure (Region-Growing RGB-Based):

- Color-Space Conversion: If using RGB camera-integrated LiDAR, convert point colors from RGB to CIELAB or HSV for better color distinction.

- Curvature/Normal Estimation: Compute surface normals for each point using local plane fitting.

- Seed Point Selection: Manually or automatically select seed points known to belong to a specific organ (e.g., a point on a green leaf).

- Region Growing: For each seed point, examine its neighbors. If a neighbor's color (e.g., the a* channel in CIELAB, which separates green from red) and normal vector are within a defined tolerance of the seed's properties, add it to the region. Repeat iteratively until no new points are added.

- Classification: Assign a unique label to all points in the grown region.

- Validation: Manually verify segmentation accuracy on a subset. Calculate metrics such as precision, recall, and Intersection-over-Union (IoU) for each organ class against a manually labeled ground truth.
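The validation metrics can be computed directly from point-wise labels. A minimal sketch, assuming predicted and ground-truth labels are aligned lists over the same points; the function name and toy labels are invented for illustration.

```python
def per_class_metrics(predicted, ground_truth, classes):
    """Precision, recall and IoU per organ class from point-wise labels."""
    results = {}
    for c in classes:
        pairs = list(zip(predicted, ground_truth))
        tp = sum(1 for p, g in pairs if p == c and g == c)
        fp = sum(1 for p, g in pairs if p == c and g != c)
        fn = sum(1 for p, g in pairs if p != c and g == c)
        results[c] = {
            "precision": tp / (tp + fp) if tp + fp else float("nan"),
            "recall": tp / (tp + fn) if tp + fn else float("nan"),
            # IoU = |pred AND truth| / |pred OR truth| for this class
            "iou": tp / (tp + fp + fn) if tp + fp + fn else float("nan"),
        }
    return results


gt = ["leaf", "leaf", "leaf", "stem", "stem", "fruit", "soil", "soil"]
pred = ["leaf", "leaf", "stem", "stem", "leaf", "fruit", "soil", "soil"]
scores = per_class_metrics(pred, gt, ["leaf", "stem", "fruit", "soil"])
for cls, m in scores.items():
    print(f"{cls:6s} precision={m['precision']:.2f} "
          f"recall={m['recall']:.2f} IoU={m['iou']:.2f}")
```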

Table 1: Quantitative Metrics from a Representative Point Cloud Processing Pipeline (Tomato Plant)

| Processing Stage | Key Parameter | Value | Impact on Final Model |
|---|---|---|---|
| Noise Filtering | Points Removed (SOR) | 1.8% of total | Reduced spurious data, minimal feature loss. |
| | Final Point Count | 4.12 million | Cleaned input for registration. |
| Registration | Pairwise ICP RMS Error | 0.43 mm | High alignment accuracy. |
| | Number of Scans Merged | 8 | Complete 360° plant model. |
| Segmentation | Leaf IoU (vs. Ground Truth) | 0.89 | High segmentation fidelity. |
| | Stem IoU (vs. Ground Truth) | 0.78 | Moderate accuracy; challenges in dense nodes. |
| Derived Trait | Total Leaf Area (from segmented leaves) | 1.24 m² | Key architectural phenotype. |

Visualized Workflows

Title: Core LiDAR Point Cloud Processing Workflow for Plant Phenotyping

Title: Organ Segmentation via Region-Growing Algorithm

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Solutions & Materials for LiDAR Plant Phenotyping

| Item | Function/Description | Example/Specification |
|---|---|---|
| Terrestrial Laser Scanner (TLS) | High-resolution, static 3D data acquisition. | FARO Focus S-series, Leica BLK360. |
| Mobile Scanning Platform | Allows high-throughput scanning of multiple plants in a row. | Custom cart with mounted LiDAR (e.g., Velodyne VLP-16). |
| Calibration Target/Sphere | Used for scanner calibration and as reference points in multi-view registration. | Spheres of known diameter (e.g., 100 mm). |
| Spectral Reference Panel | For calibrating RGB values from integrated cameras; improves segmentation. | Standardized white/grey balance card. |
| Processing Software Suite | Open-source platforms for executing the core protocols. | CloudCompare, Open3D, PDAL, Python (scikit-learn). |
| Ground Truth Annotation Tool | Software for manually labeling point clouds to validate segmentation algorithms. | CloudCompare, LabelCloud, or custom tools. |
| High-Performance Workstation | Necessary for processing large (billion-point) datasets. | CPU: 16+ cores, RAM: 64+ GB, GPU: NVIDIA RTX series. |

Introduction

Within the context of LiDAR for 3D plant architecture measurement research, the extraction of quantitative phenotypic traits is fundamental. This document provides application notes and detailed protocols for deriving three core agronomic parameters (biomass, canopy structure, and growth dynamics) from 3D LiDAR point clouds. The methodologies are designed for high-throughput plant phenotyping in controlled and field environments, serving research in crop science, genetics, and pharmaceutical development of plant-derived compounds.

1. Application Notes & Quantitative Data Summary

Table 1: Core Trait Extraction Algorithms and Performance Metrics

| Trait Category | Primary Algorithm(s) | Key Derived Metrics | Typical Accuracy (R²) | Common LiDAR Platform | Computational Complexity |
|---|---|---|---|---|---|
| Biomass | Volume-based Voxelization, Convex Hull, Alpha Shapes | Fresh/Dry Weight Prediction, Plant Volume (m³) | 0.75 – 0.92 (vs. Destructive) | Terrestrial Laser Scanner (TLS), Handheld | Medium-High |
| Canopy Structure | Canopy Height Model (CHM), Leaf Area Density (LAD) profiles, Voxel-based Occupancy | Canopy Height (m), Plant Area Index (PAI), Gap Fraction, LAIe (effective) | 0.80 – 0.95 (vs. Hemispherical Photography) | UAV-LiDAR, TLS | Low-Medium |
| Growth Parameters | Temporal Point Cloud Registration (ICP, Feature-based), Voxel-to-Voxel Comparison | Height Increment (cm/day), Volume Growth Rate (%/day), Canopy Expansion Rate | >0.90 for relative change | Stationary Scanners, UAV-LiDAR (time-series) | High |

Table 2: Comparative Performance of Segmentation Methods for Organ-Level Extraction

| Segmentation Method | Principle | Suitability | Accuracy (IoU Score) | Speed |
|---|---|---|---|---|
| Region Growing (Curvature-based) | Groups points with similar normal vectors/curvature | Leaf vs. stem separation in simple architectures | 0.65 – 0.80 | Fast |
| DBSCAN Clustering | Density-based spatial clustering | Individual plant separation in dense plots | 0.70 – 0.85 | Medium |
| Deep Learning (e.g., PointNet++) | Learned semantic features from point sets | Complex organ segmentation (leaf, stem, fruit) | 0.80 – 0.95 | Slow (Training), Medium (Inference) |
| Color- or Intensity-Based | Thresholding on reflectance values | Distinguishing photosynthetic vs. non-photosynthetic tissue | Varies widely | Very Fast |

2. Experimental Protocols

Protocol 2.1: Destructive Biomass Correlation for Calibration

Objective: To establish a regression model for predicting dry biomass from LiDAR-derived plant volume. Materials: TLS or handheld LiDAR, precision scale, drying oven, sample plants. Procedure:

- Pre-Scan Setup: Place potted plant on a rotary stage. Attach reflective markers for optional co-registration.

- 3D Scanning: Acquire a high-resolution (>10 pts/cm²) point cloud from multiple viewpoints to minimize occlusion.

- Trait Extraction (Pre-Harvest): Process point cloud. a. Ground Filtering: Apply Cloth Simulation Filter (CSF) or simple height threshold. b. Voxelization: Convert points to 3D voxel grid (1-5 mm resolution). Calculate total plant volume (V_est) as number of occupied voxels * voxel volume. c. Convex Hull: Compute the smallest convex polyhedron containing all plant points. Calculate its volume as an alternative metric.

- Destructive Sampling: Harvest the plant immediately after scanning and measure its fresh weight (FW). Dry it in an oven at 70°C for 72 hours, or until constant weight, then record the dry weight (DW).

- Model Calibration: Perform linear or power-law regression (e.g., DW = a * V_est^b) using data from a representative sample set (n>=30).
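The volume metrics and the calibration model above can be sketched in Python with NumPy and SciPy. This is a minimal illustration under a few assumptions: the plant points are already ground-filtered and expressed in metres, the function names are hypothetical, and the power law is fitted by ordinary least squares on log-transformed data. That fitting method is one common option, not the only one.

```python
import numpy as np
from scipy.spatial import ConvexHull


def voxel_volume(points, voxel_size):
    """V_est = (number of occupied voxels) * voxel_size**3.

    points: (N, 3) array of ground-filtered plant points in metres.
    """
    idx = np.floor(points / voxel_size).astype(np.int64)   # integer voxel index per point
    n_occupied = np.unique(idx, axis=0).shape[0]             # distinct voxels that hold points
    return n_occupied * voxel_size ** 3


def convex_hull_volume(points):
    """Volume of the smallest convex polyhedron enclosing all plant points."""
    return ConvexHull(points).volume


def fit_power_law(volume, dry_weight):
    """Fit DW = a * V**b by least squares on log(DW) = log(a) + b * log(V)."""
    b, log_a = np.polyfit(np.log(volume), np.log(dry_weight), deg=1)
    return float(np.exp(log_a)), float(b)
```

A log-space fit weights errors multiplicatively, which suits biomass data whose scatter grows with plant size. When additive errors are expected, a nonlinear least-squares fit (for example `scipy.optimize.curve_fit`) is an alternative.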

Protocol 2.2: Canopy Height Model (CHM) and Gap Fraction Derivation

Objective: To derive canopy structural parameters from UAV-based LiDAR for plot-level analysis.

Materials: UAV equipped with LiDAR (e.g., RIEGL VUX-120), GPS base station, processing software (e.g., LAStools, CloudCompare).

Procedure:

- Data Acquisition: Fly the UAV over the field plot with >60% side overlap, keeping altitude and speed constant. Record the trajectory with RTK/PPK GPS.

- Point Cloud Generation: Post-process raw scans and trajectory to generate a georeferenced, classified point cloud (.las format). Classify ground points.

- DEM/DSM Creation:

  - Create a Digital Elevation Model (DEM) by interpolating the classified ground points.

  - Create a Digital Surface Model (DSM) from the highest return within each raster cell (e.g., 5 × 5 cm). Cells without vegetation then take the ground elevation, so the CHM is defined everywhere.

- CHM Calculation: Generate the CHM raster as CHM = DSM - DEM.

- Canopy Metrics Extraction:

  - Mean/Max Height: Calculate from the CHM.

  - Gap Fraction: Binarize the CHM (cells with height > threshold are canopy), then compute Gap Fraction = 1 - (canopy pixels / total pixels).

- Leaf Area Density (LAD) Profile: Slice the height-normalized point cloud into horizontal layers (e.g., 5 cm thick). For each layer, estimate LAD with a voxel-based method (e.g., contact frequency).
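The raster steps can be sketched with NumPy as follows. The sketch assumes that points are already classified and, for the LAD profile, height-normalized so that ground returns lie at z ≤ 0.

For LAD, it does not use the voxel contact-frequency method named above. It substitutes the simpler MacArthur-Horn gap-fraction inversion, which treats each return as one pulse (for example, first returns only) and assumes an extinction coefficient k = 0.5 (spherical leaf-angle distribution).

The DSM takes the highest return per cell. A full workflow would also fill empty cells and build the DEM by interpolating ground points (for example, with a TIN). All function names are hypothetical.

```python
import numpy as np


def rasterize_max(x, y, z, x0, y0, cell, nx, ny):
    """Highest z per grid cell (a DSM when given all returns); NaN where a cell is empty."""
    grid = np.full((ny, nx), -np.inf)
    col = np.floor((x - x0) / cell).astype(int)
    row = np.floor((y - y0) / cell).astype(int)
    inside = (col >= 0) & (col < nx) & (row >= 0) & (row < ny)
    np.maximum.at(grid, (row[inside], col[inside]), z[inside])   # unbuffered per-cell maximum
    grid[np.isneginf(grid)] = np.nan
    return grid


def canopy_height_model(dsm, dem):
    """CHM = DSM - DEM, cell by cell."""
    return dsm - dem


def gap_fraction(chm, threshold):
    """1 - (canopy cells / valid cells), where a canopy cell has CHM > threshold."""
    heights = chm[~np.isnan(chm)]
    return 1.0 - np.count_nonzero(heights > threshold) / heights.size


def macarthur_horn_lad(z_norm, layer, n_layers, k=0.5):
    """LAD per horizontal layer (m^2/m^3), bottom layer first.

    N(z) = number of returns at or below height z.
    LAD = ln(N(layer top) / N(layer bottom)) / (k * layer).
    n_layers * layer should reach above the canopy top.
    """
    z = np.maximum(z_norm, 0.0)                        # clamp ground returns to z = 0
    edges = np.linspace(0.0, n_layers * layer, n_layers + 1)
    counts = np.array([np.count_nonzero(z <= e) for e in edges])
    lad = np.full(n_layers, np.nan)                    # NaN where no pulse reached the layer bottom
    ok = counts[:-1] > 0
    lad[ok] = np.log(counts[1:][ok] / counts[:-1][ok]) / (k * layer)
    return lad
```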

Protocol 2.3: Temporal Growth Tracking Using Iterative Closest Point (ICP)

Objective: To quantify growth rates by aligning sequential point clouds of the same plant.

Materials: Fixed terrestrial LiDAR scanner (e.g., FARO Focus), permanent mounting fixtures.

Procedure:

- Time-Series Scanning: At consistent intervals (e.g., daily), scan the same plant from the same scanner position with identical settings.

- Preprocessing: For each time point (T1, T2, ..., Tn), isolate the plant point cloud by manual cropping or an automatic bounding box.

- Point Cloud Registration:

  - Use the T1 cloud as the reference.

  - For cloud Tn, apply a coarse manual or feature-based alignment, then refine it with the ICP algorithm, which minimizes point-to-point distances.

  - Verify alignment accuracy using cloud-to-cloud distance metrics.

- Change Detection:

  - Volumetric Difference: After alignment, calculate voxelized volumes for Tn and Tn-1, and compute absolute and relative growth rates.

  - Height Difference: Subtract the maximum (or 95th-percentile) height of the earlier aligned cloud from that of the later one.
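The registration and change-detection steps can be sketched as a bare point-to-point ICP in NumPy and SciPy. This is a minimal illustration, not the scanner vendor's implementation. Open3D provides a production version as `o3d.pipelines.registration.registration_icp`.

The sketch assumes the coarse alignment has already been applied, because ICP converges only to the nearest local minimum. Growth also makes successive clouds non-rigid, so restricting ICP to static structures such as the pot or the markers is likely to give a cleaner alignment. Function names are hypothetical.

```python
import numpy as np
from scipy.spatial import cKDTree


def kabsch(src, dst):
    """R, t minimising sum ||R @ src_i + t - dst_i||^2 (SVD solution for paired points)."""
    c_src, c_dst = src.mean(axis=0), dst.mean(axis=0)
    U, _, Vt = np.linalg.svd((src - c_src).T @ (dst - c_dst))
    d = np.sign(np.linalg.det(Vt.T @ U.T))             # guard against a reflection
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    return R, c_dst - R @ c_src


def icp_point_to_point(source, target, max_iter=50, tol=1e-10):
    """Align `source` (cloud Tn) to the fixed `target` (reference T1).

    Returns the cumulative 4x4 transform and the aligned copy of `source`.
    """
    tree = cKDTree(target)
    T, current, prev_err = np.eye(4), source.copy(), np.inf
    for _ in range(max_iter):
        dist, idx = tree.query(current)                # closest target point per source point
        R, t = kabsch(current, target[idx])
        current = current @ R.T + t
        step = np.eye(4)
        step[:3, :3], step[:3, 3] = R, t
        T = step @ T                                   # accumulate this iteration's motion
        err = dist.mean()                              # mean distance before this update
        if abs(prev_err - err) < tol:
            break
        prev_err = err
    return T, current


def growth_rates(v_prev, v_curr, dt_days):
    """Absolute (volume/day) and relative ((ln V2 - ln V1) / dt, per day) growth rates."""
    return (v_curr - v_prev) / dt_days, (np.log(v_curr) - np.log(v_prev)) / dt_days


def height_increment(z_prev, z_curr, q=95):
    """Change in the q-th percentile height between two aligned clouds."""
    return np.percentile(z_curr, q) - np.percentile(z_prev, q)
```

Multiplying the relative growth rate by 100 gives an approximate %/day figure for small daily changes.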

3. Visualization

Diagram 1: LiDAR-Based Trait Extraction Workflow

Diagram 2: Biomass Calibration & Validation Pathway

4. The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials & Software for LiDAR Plant Phenotyping

| Item Name / Solution | Category | Function & Application Note |
|---|---|---|
| Terrestrial Laser Scanner (e.g., FARO Focus) | Hardware | High-accuracy, stationary scanning for detailed architecture and temporal growth studies. Essential for Protocols 2.1 and 2.3. |
| UAV-LiDAR Payload (e.g., RIEGL miniVUX) | Hardware | Enables rapid, plot-level canopy structure assessment. Critical for Protocol 2.2 and field-scale applications. |
| Retro-Reflective Markers | Calibration Tool | Used as ground control points (GCPs) or for multi-view scan co-registration. Improves alignment accuracy. |
| LAStools / PDAL Suite | Software | Industry-standard tools for efficient processing, filtering, and classification of large LiDAR point clouds. |
| CloudCompare (with CANUPO plugin) | Software | Open-source platform for 3D point cloud visualization, comparison, and basic segmentation. |
| Python (Open3D, PyVista, scikit-learn) | Software | Custom algorithm development for voxelization, segmentation, and machine learning-based trait extraction. |
| R (lidR package) | Software | Specialized environment for forestry and ecological LiDAR analysis; well suited to CHM and LAD profiles. |
| Deep Learning Framework (e.g., PyTorch3D) | Software | Enables implementation of PointNet++ and other architectures for advanced organ-level segmentation. |

As part of the broader thesis on LiDAR for 3D plant architecture measurement, this application note details protocols for integrating LiDAR data with hyperspectral imaging (HSI) and chlorophyll fluorescence imaging (CFI). The fused data form a comprehensive phenotype map. This map links structural traits from LiDAR with the physiological and biochemical states captured by spectral and fluorescence data, which matters both for plant science and for drug discovery from natural products.

Table 1: Comparison of Multimodal Imaging Modalities for Plant Phenotyping

| Modality | Primary Measured Variable | Spatial Resolution | Temporal Resolution | Key Derived Plant Traits | Typical Platform |
|---|---|---|---|---|---|
| LiDAR | Range (time-of-flight or phase shift), recorded as a 3D point cloud | 0.1 - 5 mm | Low-Medium (sec-min) | Canopy height, volume, leaf area index, stem diameter, 3D architecture | Terrestrial, UAV, Ground Robot |
| Hyperspectral Imaging | Spectral Reflectance (400-2500 nm) | 0.5 - 10 mm | Medium (sec) | Chlorophyll content, water status, nitrogen content, carotenoids, stress indicators | UAV, Ground-based gantry, Proximal sensor |
| Fluorescence Imaging | Chlorophyll Fluorescence Yield | 0.5 - 5 mm | High (ms-sec) | Photosynthetic efficiency, photochemical quenching, non-photochemical quenching, heat dissipation | Dark-adapted chamber, Portable systems |

Table 2: Common Data Fusion Outcomes and Applications

| Fusion Type | Data Combined | Output | Application in Research/Drug Development |
|---|---|---|---|
| Spatial Co-registration | LiDAR (3D) + HSI (2D spectral cube) | 3D Voxelized Spectral Model | Mapping pigment distribution on 3D canopy; identifying bioactive compound hotspots. |
| Feature-Level Fusion | LiDAR structural metrics + HSI Vegetation Indices | Multivariate Regression Models | Predicting photosynthetic rate from structure + chlorophyll content; biomass estimation. |
| Model-Based Fusion | LiDAR-derived Plant Architecture + CFI Kinetic Curves | 3D Light Distribution & Photosynthesis Model | Simulating light interception and its effect on photosynthetic efficiency across genotypes. |

Experimental Protocols

Protocol 3.1: Co-registration of Terrestrial LiDAR and Hyperspectral Imaging for 3D Chemical Mapping

Objective: To generate a spatially accurate 3D model where each point contains XYZ coordinates and a full spectral signature.

Materials: See "Scientist's Toolkit" (Section 5).

Procedure:

- Site & Target Setup: In a controlled growth chamber or field plot, place at least four high-contrast fiducial markers (e.g., checkerboard targets) around the plant of interest. Ensure markers are visible to both sensors.

- LiDAR Scanning: Position the terrestrial LiDAR scanner on a stable tripod. Perform a 360-degree scan at high resolution (e.g., 1 mm point spacing at 10 m). Ensure the scan captures the entire plant and all fiducial markers. Export the data as a registered point cloud (.las or .ply).

- Hyperspectral Image Acquisition: Mount the HSI camera on a tripod or linear rail, positioned to capture the same plant and fiducial markers from a similar angle to the LiDAR. Acquire the hyperspectral cube in reflectance mode, using a white reference panel for calibration. Save the data as .dat/.hdr files with spatial metadata.

- Data Pre-processing:

  - LiDAR: Use software (e.g., CloudCompare) to remove noise and segment the plant point cloud from the background.

  - HSI: Apply radiometric calibration and spectral smoothing (Savitzky-Golay), then compute standard vegetation indices (e.g., NDVI, PRI).

- Co-registration:

  - Use the centroids of the fiducial markers as ground control points.

  - In point cloud processing software (e.g., MATLAB with Computer Vision Toolbox, Open3D), perform a feature-based registration that estimates the projective transformation between HSI pixel coordinates and LiDAR coordinates. The LiDAR point cloud is the fixed reference. Iterative Closest Point (ICP) is a 3D-to-3D method, so it applies only if the HSI data carry their own 3D geometry.

  - Project the 2D HSI pixels onto the 3D LiDAR point cloud using the calculated transformation matrix, assigning the spectral values to the nearest 3D points.

- Analysis: Analyze the fused dataset to correlate structural parameters (e.g., leaf angle from LiDAR) with spectral indices (e.g., chlorophyll content) on a per-organ basis.
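The HSI pre-processing and co-registration steps can be sketched in Python with NumPy and SciPy. This is a hedged illustration, not a vendor pipeline, and it rests on the following assumptions:

- A dark-current reference frame is available in addition to the white panel.
- NDVI uses 670/800 nm and PRI uses 531/570 nm. These band choices are common but not universal.
- The HSI sensor is modelled as a pinhole frame camera, whose 3x4 projection matrix is estimated by the direct linear transform (DLT). A push-broom camera on a linear rail follows a different geometric model.
- The DLT needs at least six non-coplanar correspondences, so tie points beyond four markers would be required.

Function names and the Savitzky-Golay settings (7 bands, order 2) are also assumptions.

```python
import numpy as np
from scipy.signal import savgol_filter


def to_reflectance(raw, white, dark):
    """Flat-field calibration R = (raw - dark) / (white - dark), per pixel and band."""
    return (raw - dark) / np.clip(white - dark, 1e-9, None)


def nearest_band(cube, wavelengths, nm):
    """Slice of a (rows, cols, bands) cube at the band closest to `nm` nanometres."""
    return cube[..., int(np.argmin(np.abs(wavelengths - nm)))]


def ndvi_pri(reflectance, wavelengths):
    """Savitzky-Golay smoothing along the spectral axis, then NDVI and PRI images."""
    smooth = savgol_filter(reflectance, window_length=7, polyorder=2, axis=-1)
    red, nir = nearest_band(smooth, wavelengths, 670), nearest_band(smooth, wavelengths, 800)
    r531, r570 = nearest_band(smooth, wavelengths, 531), nearest_band(smooth, wavelengths, 570)
    return (nir - red) / (nir + red), (r531 - r570) / (r531 + r570)


def dlt_projection(xyz, uv):
    """3x4 pinhole projection matrix from >= 6 non-coplanar 3D-2D marker correspondences."""
    rows = []
    for (X, Y, Z), (u, v) in zip(xyz, uv):
        rows.append([X, Y, Z, 1, 0, 0, 0, 0, -u * X, -u * Y, -u * Z, -u])
        rows.append([0, 0, 0, 0, X, Y, Z, 1, -v * X, -v * Y, -v * Z, -v])
    _, _, vt = np.linalg.svd(np.asarray(rows, dtype=float))
    return vt[-1].reshape(3, 4)                        # null-space vector = P up to scale


def spectra_for_points(points, P, cube):
    """Project LiDAR points into the HSI image; each point takes its pixel's spectrum.

    No occlusion test: a point hidden behind a leaf receives that leaf's spectrum.
    """
    h = np.c_[points, np.ones(len(points))] @ P.T
    col = np.round(h[:, 0] / h[:, 2]).astype(int)
    row = np.round(h[:, 1] / h[:, 2]).astype(int)
    inside = (row >= 0) & (row < cube.shape[0]) & (col >= 0) & (col < cube.shape[1])
    spectra = np.full((len(points), cube.shape[2]), np.nan)
    spectra[inside] = cube[row[inside], col[inside]]
    return spectra
```

The sketch projects each LiDAR point into the image, the reverse of assigning each pixel to its nearest point. This direction avoids a nearest-neighbour search, but without a depth test (z-buffer) occluded points inherit the spectrum of whatever surface hides them. With real data, normalizing coordinates before the DLT (Hartley normalization) improves numerical conditioning.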

Protocol 3.2: Synchronized LiDAR and Pulse-Amplitude-Modulated (PAM) Fluorescence Imaging

Objective: To link canopy architecture with photosynthetic performance under dynamic light conditions.

Materials: See "Scientist's Toolkit" (Section 5).

Procedure:

- System Synchronization: Connect the LiDAR system and PAM fluorometer imaging system to a central trigger. Use a software script (e.g., in Python) to send simultaneous start commands.

- Dark Adaptation: Place the plant in a dark-adaptation chamber for 30 minutes prior to measurement.

- Baseline Acquisition: Trigger the LiDAR for a rapid structural scan. At the same time, trigger the PAM system to capture a minimal fluorescence (Fo) image in the dark.

- Saturating Pulse: Expose the plant to a saturating light pulse. At the same time, trigger a second LiDAR scan (if monitoring movement) and the PAM system to capture maximum fluorescence (Fm).

- Kinetic Series: Under actinic light, run a standard fluorescence quenching protocol (e.g., an induction curve or a light curve). Acquire LiDAR scans at key intervals (e.g., every 2 minutes) to capture architectural changes such as wilting or leaf movement.

- Data Fusion:

  - Calculate images of Fv/Fm (maximum quantum yield of PSII) and ΦPSII (effective quantum yield of PSII) from the fluorescence data.

  - Register the 2D fluorescence images to the 3D LiDAR point cloud using a projective transformation based on shared reference points visible in both modalities.

- Analysis: Map ΦPSII onto the 3D plant model. Analyze correlations between light exposure (simulated from LiDAR-derived sun angle and occlusion) and photosynthetic efficiency across different leaf layers.
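The per-pixel fluorescence parameters follow the standard PAM definitions:

- Fv/Fm = (Fm - Fo)/Fm, from the dark-adapted images.
- ΦPSII = (Fm' - Fs)/Fm', under actinic light, where Fs is the steady-state fluorescence and Fm' is the light-adapted maximum.

The sketch also computes the Stern-Volmer NPQ = (Fm - Fm')/Fm', one of the quenching traits listed in Table 1. It is a minimal NumPy illustration with hypothetical function names. Pixels with no signal (e.g., background masked to zero) return NaN.

```python
import numpy as np


def _ratio(num, den):
    """num / den element-wise; NaN where den <= 0 (e.g. masked background)."""
    num, den = np.asarray(num, dtype=float), np.asarray(den, dtype=float)
    out = np.full(np.broadcast(num, den).shape, np.nan)
    np.divide(num, den, out=out, where=den > 0)
    return out


def fv_fm(fo, fm):
    """Maximum quantum yield of PSII from dark-adapted Fo and Fm images."""
    return _ratio(fm - fo, fm)


def phi_psii(fs, fm_prime):
    """Effective quantum yield of PSII from light-adapted Fs and Fm' images."""
    return _ratio(fm_prime - fs, fm_prime)


def npq(fm, fm_prime):
    """Stern-Volmer non-photochemical quenching, (Fm - Fm') / Fm'."""
    return _ratio(fm - fm_prime, fm_prime)
```

To place these values on the 3D model, each point would then sample the parameter image at the pixel given by the registration transform.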

Visualization Diagrams

Diagram 1: Multimodal Plant Phenotyping Workflow

Diagram 2: LiDAR-Fluorescence Fusion Logic

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions & Materials for Multimodal Fusion Experiments

| Item Name / Category | Function / Purpose | Example Specifications / Notes |
|---|---|---|
| Terrestrial LiDAR Scanner | High-accuracy 3D point cloud generation of plant architecture. | Example: Phase-based (e.g., Faro Focus) or Time-of-flight. Resolution: < 2mm @ 10m. Essential for structural benchmarks. |
| Hyperspectral Imaging Camera | Captures spectral reflectance data across hundreds of narrow bands. | Example: Headwall Nano-Hyperspec (400-1000nm) or SWIR (900-2500nm). Used for biochemical trait mapping. |
| PAM Fluorometer Imaging System | Measures chlorophyll fluorescence kinetics for photosynthetic analysis. | Example: Walz Imaging-PAM or FluorCam. Provides images of Fv/Fm, NPQ, and other quenching parameters. |
| Fiducial Markers | Ground control points for accurate spatial co-registration of datasets. | High-contrast checkerboard or retroreflective targets. Must be geometrically stable and visible in all sensor spectra. |
| Spectralon White Reference Panel | Calibration standard for hyperspectral and fluorescence imaging. | Provides >99% diffuse reflectance for converting raw data to absolute reflectance. |
| Dark-Adaptation Clips/Chamber | Ensures photosynthetic reaction centers are fully open for accurate Fo measurement. | Opaque clips for leaves or a light-tight chamber for whole plants. |
| Programmable Actinic Light Source | Provides controlled, uniform light stress for fluorescence protocols. | LED arrays with adjustable intensity and spectrum. Synchronized via TTL trigger. |
| Co-registration Software | Performs spatial alignment of 2D images to 3D point clouds. | CloudCompare (open-source), MATLAB Computer Vision Toolbox, or custom Python scripts using Open3D/PDAL. |
| High-Performance Computing Workstation | Processes and fuses large multimodal datasets (point clouds, hypercubes, video). | Requires high RAM (>64 GB), multi-core CPU, and powerful GPU for efficient processing. |

Overcoming LiDAR Challenges in Plant Phenotyping: Noise, Occlusion, and Data Complexity